Wu Yuxiong, Python Neural Networks: Handwritten Digit Image Recognition with a TensorFlow Convolutional Neural Network

2024-09-02 00:40:58

import os

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

INPUT_NODE = 784
OUTPUT_NODE = 10
LAYER1_NODE = 500


def get_weight_variable(shape, regularizer):
    # Create (or reuse) the weight matrix in the current variable scope.
    weights = tf.get_variable("weights", shape, initializer=tf.truncated_normal_initializer(stddev=0.1))
    # Collect the regularization term so the training loss can add it later.
    if regularizer is not None:
        tf.add_to_collection('losses', regularizer(weights))
    return weights


def inference(input_tensor, regularizer):
    # Hidden layer: fully connected, ReLU activation.
    with tf.variable_scope('layer1'):
        weights = get_weight_variable([INPUT_NODE, LAYER1_NODE], regularizer)
        biases = tf.get_variable("biases", [LAYER1_NODE], initializer=tf.constant_initializer(0.0))
        layer1 = tf.nn.relu(tf.matmul(input_tensor, weights) + biases)

    # Output layer: returns raw logits; softmax is applied inside the loss.
    with tf.variable_scope('layer2'):
        weights = get_weight_variable([LAYER1_NODE, OUTPUT_NODE], regularizer)
        biases = tf.get_variable("biases", [OUTPUT_NODE], initializer=tf.constant_initializer(0.0))
        layer2 = tf.matmul(layer1, weights) + biases

    return layer2


BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.8

LEARNING_RATE_DECAY = 0.99

REGULARIZATION_RATE = 0.0001

TRAINING_STEPS = 30000

MOVING_AVERAGE_DECAY = 0.99

MODEL_SAVE_PATH = "F:\\TensorFlowGoogle\\201806-github\\datasets\\MNIST_data\\"

MODEL_NAME = "mnist_model" def train(mnist):

# 定义输入输出placeholder。

x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)

y = inference(x, regularizer)

global_step = tf.Variable(0, trainable=False) # 定义损失函数、学习率、滑动平均操作以及训练过程。

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

learning_rate = tf.train.exponential_decay(LEARNING_RATE_BASE,global_step,mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,staircase=True)

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train')

# 初始化TensorFlow持久化类。

saver = tf.train.Saver()

with tf.Session() as sess:

tf.global_variables_initializer().run()

for i in range(TRAINING_STEPS):

xs, ys = mnist.train.next_batch(BATCH_SIZE)

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})

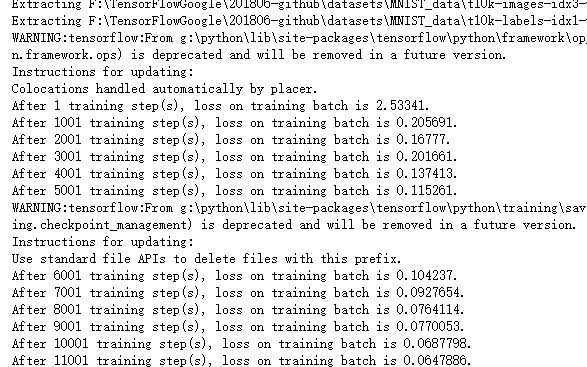

if i % 1000 == 0:

print("After %d training step(s), loss on training batch is %g." % (step, loss_value))

saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step) def main(argv=None):

mnist = input_data.read_data_sets("F:\\TensorFlowGoogle\\201806-github\\datasets\\MNIST_data\\", one_hot=True)

train(mnist) if __name__ == '__main__':

main()
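
The `train` function passes `staircase=True` to `tf.train.exponential_decay`, so the learning rate stays flat for `decay_steps` steps and then drops by a factor of `LEARNING_RATE_DECAY`. The following is a minimal pure-Python sketch of that schedule, not the TensorFlow implementation itself. The helper name `decayed_learning_rate` is hypothetical, and the figure of 55,000 training examples is an assumption (the usual default split of `read_data_sets`); the post does not state it.

```python
import math

LEARNING_RATE_BASE = 0.8
LEARNING_RATE_DECAY = 0.99
BATCH_SIZE = 100
NUM_TRAIN_EXAMPLES = 55000  # assumption: default MNIST training split size


def decayed_learning_rate(global_step, decay_steps, staircase=True):
    """Hypothetical helper mirroring base * decay ** (global_step / decay_steps)."""
    exponent = global_step / decay_steps
    if staircase:
        # With staircase=True the exponent is truncated to an integer,
        # so the rate changes only once per decay_steps steps.
        exponent = math.floor(exponent)
    return LEARNING_RATE_BASE * LEARNING_RATE_DECAY ** exponent


decay_steps = NUM_TRAIN_EXAMPLES / BATCH_SIZE  # steps per pass over the training data
for step in (0, 549, 550, 30000):
    print(step, decayed_learning_rate(step, decay_steps))
```

Under these assumptions the rate is multiplied by 0.99 roughly once per pass over the training set.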

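The script also builds a `tf.train.ExponentialMovingAverage` with `global_step` as its `num_updates` argument. In that mode the effective decay is the smaller of the configured decay and `(1 + num_updates) / (10 + num_updates)`, so the shadow values track the variables closely early in training. The sketch below illustrates that update rule on a single scalar in plain Python; the function names `effective_decay` and `update_shadow` are hypothetical and the sample values are made up for illustration.

```python
MOVING_AVERAGE_DECAY = 0.99


def effective_decay(num_updates, decay=MOVING_AVERAGE_DECAY):
    """Decay actually applied when num_updates is supplied."""
    return min(decay, (1.0 + num_updates) / (10.0 + num_updates))


def update_shadow(shadow, value, num_updates):
    """One moving-average step: shadow = d * shadow + (1 - d) * value."""
    d = effective_decay(num_updates)
    return d * shadow + (1.0 - d) * value


# Illustrative run: a scalar "variable" that jumps from 0 to 10 and stays there.
shadow = 0.0
for step in range(5):
    shadow = update_shadow(shadow, 10.0, step)
    print(step, effective_decay(step), shadow)
```

With a decay of 0.99, the cap from `num_updates` stops mattering once the step count reaches roughly 890, after which the fixed decay applies.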