Spark Cluster Installation and Configuration

I. Installing Scala

1. Download Scala from https://www.scala-lang.org/download/2.11.12.html, copy the archive to /home/jun, and extract it there.
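The extraction step can be sketched as a small shell function. This is a minimal example that assumes the download is a gzip-compressed tarball; `extract_archive` and the file name in the usage comment are hypothetical and introduced only for illustration.

```shell
# Hypothetical helper: unpack a gzip-compressed tarball into a target directory.
# Usage: extract_archive <archive.tgz> <target-dir>
extract_archive() {
    archive="$1"
    target="$2"
    mkdir -p "$target"                 # make sure the target directory exists
    tar -xzf "$archive" -C "$target"   # -x extract, -z gunzip, -f archive file
}

# Example (the archive name is an assumption; use the file you downloaded):
# extract_archive ~/Downloads/scala.tgz /home/jun
```

Extracting with `-C` avoids having to copy the archive into /home/jun first, although copying and then extracting in place works just as well.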

[jun@master ~]$ cd scala-2.12./

[jun@master scala-2.12.]$ ls -l

total

drwxrwxr-x. jun jun Apr : bin

drwxrwxr-x. jun jun Apr : doc

drwxrwxr-x. jun jun Apr : lib

drwxrwxr-x. jun jun Apr : man

2. Start Scala and use the Scala shell

[jun@master scala-2.12.]$ bin/scala

Welcome to Scala 2.12. (Java HotSpot(TM) -Bit Server VM, Java 1.8.0_171).

Type in expressions for evaluation. Or try :help.

scala> println("hello,world")

hello,world

scala> *

res1: Int =

scala> *res1

res2: Int =

scala> :help

All commands can be abbreviated, e.g., :he instead of :help.

:completions <string> output completions for the given string

:edit <id>|<line> edit history

:help [command] print this summary or command-specific help

:history [num] show the history (optional num is commands to show)

:h? <string> search the history

:imports [name name ...] show import history, identifying sources of names

:implicits [-v] show the implicits in scope

:javap <path|class> disassemble a file or class name

:line <id>|<line> place line(s) at the end of history

:load <path> interpret lines in a file

:paste [-raw] [path] enter paste mode or paste a file

:power enable power user mode

:quit exit the interpreter

:replay [options] reset the repl and replay all previous commands

:require <path> add a jar to the classpath

:reset [options] reset the repl to its initial state, forgetting all session entries

:save <path> save replayable session to a file

:sh <command line> run a shell command (result is implicitly => List[String])

:settings <options> update compiler options, if possible; see reset

:silent disable/enable automatic printing of results

:type [-v] <expr> display the type of an expression without evaluating it

:kind [-v] <type> display the kind of a type. see also :help kind

:warnings show the suppressed warnings from the most recent line which had any

scala> :quit

3. Copy the Scala installation to the slave nodes
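Copying a directory to every slave can be scripted. The sketch below assumes the slaves are reachable over SSH under the host names `slave0` and `slave1` and that the same parent directory exists on each of them. `copy_to_nodes` and the `DRY_RUN` switch are hypothetical names introduced here so the commands can be previewed before anything is copied.

```shell
# Hypothetical helper: copy a directory to the same parent path on each host.
# Usage: copy_to_nodes <directory> <host> [<host> ...]
# With DRY_RUN=1 the scp commands are only printed, not executed.
copy_to_nodes() {
    src="$1"
    shift
    dest_parent=$(dirname "$src")      # e.g. /home/jun for /home/jun/<dir>
    for host in "$@"; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "scp -r $src $host:$dest_parent/"
        else
            scp -r "$src" "$host:$dest_parent/"
        fi
    done
}

# Example (replace <scala-dir> with the extracted directory name):
# DRY_RUN=1
# copy_to_nodes /home/jun/<scala-dir> slave0 slave1
```

Running once with `DRY_RUN=1` is a cheap way to confirm the destination paths before the real copy.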

II. Installing and Configuring the Spark Cluster

Spark is installed in Hadoop YARN mode.

1. Download spark-2.3.1-bin-hadoop2.7.tgz from http://spark.apache.org/downloads.html, copy it to /home/jun, and extract it there.

[jun@master ~]$ cd spark-2.3.-bin-hadoop2./

[jun@master spark-2.3.-bin-hadoop2.]$ ls -l

total

drwxrwxr-x. jun jun Jun : bin

drwxrwxr-x. jun jun Jun : conf

drwxrwxr-x. jun jun Jun : data

drwxrwxr-x. jun jun Jun : examples

drwxrwxr-x. jun jun Jun : jars

drwxrwxr-x. jun jun Jun : kubernetes

-rw-rw-r--. jun jun Jun : LICENSE

drwxrwxr-x. jun jun Jun : licenses

-rw-rw-r--. jun jun Jun : NOTICE

drwxrwxr-x. jun jun Jun : python

drwxrwxr-x. jun jun Jun : R

-rw-rw-r--. jun jun Jun : README.md

-rw-rw-r--. jun jun Jun : RELEASE

drwxrwxr-x. jun jun Jun : sbin

drwxrwxr-x. jun jun Jun : yarn

2. Configure the Linux environment variables

#spark

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export HDFS_CONF_DIR=$HADOOP_HOME/etc/hadoop

export YARN_CONF_DIR=$HADOOP_HOME/etc/hadoop
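Spark on YARN locates the Hadoop client configuration through `HADOOP_CONF_DIR` or `YARN_CONF_DIR`, so it is worth confirming that the variables resolve to a real directory once the profile has been reloaded. The following is a minimal sketch; `check_conf_dir` is a hypothetical helper, not part of Spark or Hadoop.

```shell
# Hypothetical check: report whether a configuration directory variable
# is set and points at an existing directory.
# Usage: check_conf_dir <variable-name> <value>
check_conf_dir() {
    name="$1"
    value="$2"
    if [ -z "$value" ]; then
        echo "$name is not set"
        return 1
    elif [ ! -d "$value" ]; then
        echo "$name points to a missing directory: $value"
        return 1
    fi
    echo "$name OK: $value"
}

# Example, after reloading the profile (for instance by logging in again):
# check_conf_dir HADOOP_CONF_DIR "$HADOOP_CONF_DIR"
# check_conf_dir YARN_CONF_DIR "$YARN_CONF_DIR"
```

An empty result usually means `HADOOP_HOME` itself was not set when the exports were evaluated.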

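3. Configure the environment variables in spark-env.sh. Note that all three machines must be configured this way.

Copy the default configuration template and open the copy with gedit: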

[jun@master conf]$ cp ~/spark-2.3.-bin-hadoop2./conf/spark-env.sh.template ~/spark-2.3.-bin-hadoop2./conf/spark-env.sh

[jun@master conf]$ gedit ~/spark-2.3.-bin-hadoop2./conf/spark-env.sh

Add the following settings:

export SPARK_MASTER_IP=192.168.1.100

export JAVA_HOME=/usr/java/jdk1..0_171/

export SCALA_HOME=/home/jun/scala-2.12./

4. Edit Spark's slaves file

Copy the template and open the file with gedit:

[jun@master conf]$ cp ~/spark-2.3.-bin-hadoop2./conf/slaves.template slaves

[jun@master conf]$ gedit ~/spark-2.3.-bin-hadoop2./conf/slaves

Delete the default localhost entry and add the following:

# A Spark Worker will be started on each of the machines listed below.

slave0

slave1
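The same file can be generated without an editor. This is a minimal sketch; `write_slaves_file` is a hypothetical helper, and the path in the usage comment is a placeholder for the conf directory of the Spark installation.

```shell
# Hypothetical helper: write one worker host name per line to a slaves file,
# preceded by the comment line from the template.
# Usage: write_slaves_file <path> <host> [<host> ...]
write_slaves_file() {
    path="$1"
    shift
    {
        echo "# A Spark Worker will be started on each of the machines listed below."
        for host in "$@"; do
            echo "$host"
        done
    } > "$path"    # overwrites the file, which also drops the default localhost
}

# Example (replace <spark-dir> with the extracted Spark directory):
# write_slaves_file /home/jun/<spark-dir>/conf/slaves slave0 slave1
```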

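5. Copy Spark to the slave nodes

III. Starting and Verifying the Spark Cluster

1. Start the Spark cluster

First make sure the Hadoop cluster is running, then run the start script: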

[jun@master conf]$ /home/jun/spark-2.3.-bin-hadoop2./sbin/start-all.sh

starting org.apache.spark.deploy.master.Master, logging to /home/jun/spark-2.3.-bin-hadoop2./logs/spark-jun-org.apache.spark.deploy.master.Master--master.out

slave0: starting org.apache.spark.deploy.worker.Worker, logging to /home/jun/spark-2.3.-bin-hadoop2./logs/spark-jun-org.apache.spark.deploy.worker.Worker--slave0.out

slave1: starting org.apache.spark.deploy.worker.Worker, logging to /home/jun/spark-2.3.-bin-hadoop2./logs/spark-jun-org.apache.spark.deploy.worker.Worker--slave1.out

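2. Verify that the cluster started

(1) Check the processes with jps. The master node now shows an additional Master process, and each slave node shows an additional Worker process.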

[jun@master conf]$ jps

ResourceManager

SecondaryNameNode

Master

NameNode

Jps

[jun@slave0 ~]$ jps

DataNode

Worker

NodeManager

Jps

[jun@slave1 ~]$ jps

DataNode

Worker

NodeManager

Jps

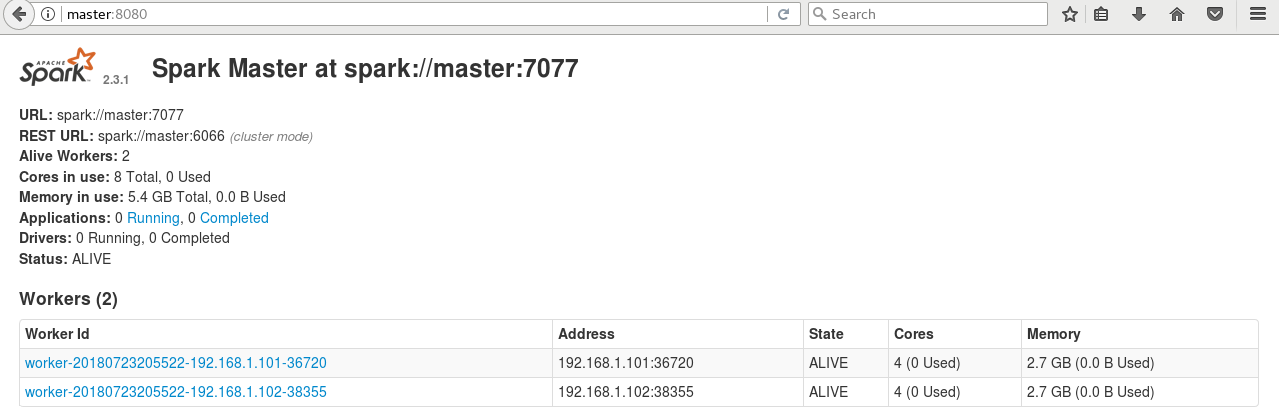
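The visual check of the jps listings can also be automated. The sketch below reads jps output on standard input and confirms that each expected daemon name is present; `has_daemons` is a hypothetical helper. It matches whole words so that, for example, SecondaryNameNode is not mistaken for NameNode.

```shell
# Hypothetical check: read `jps` output on stdin and confirm that each
# expected daemon name appears as a whole word.
# Usage: jps | has_daemons Master NameNode
has_daemons() {
    listing=$(cat)
    for name in "$@"; do
        if ! printf '%s\n' "$listing" | grep -qw "$name"; then
            echo "missing: $name"
            return 1
        fi
    done
    echo "all present: $*"
}

# Example on the master node:
# jps | has_daemons Master NameNode ResourceManager
# Example on a slave node:
# jps | has_daemons Worker DataNode NodeManager
```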

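(2) Check the cluster status in a web browser at http://master:8080

(3) Submit a job to the Spark cluster from the command line

So that the jar can be loaded directly from the working directory, first copy the example jar into the Spark installation's top-level directory: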

[jun@master conf]$ cp /home/jun/spark-2.3.-bin-hadoop2./examples/jars/spark-examples_2.-2.3..jar /home/jun/spark-2.3.-bin-hadoop2./

Run the SparkPi program:

[jun@master spark-2.3.-bin-hadoop2.]$ bin/spark-submit --class org.apache.spark.examples.SparkPi --master yarn-cluster --num-executors --driver-memory 512m --executor-memory 512m --executor-cores spark-examples_2.-2.3..jar

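At this point the job failed. The error means that the ApplicationMaster container used 2.3 GB of virtual memory while its virtual memory limit was only 2.1 GB. YARN's default ratio of virtual memory to physical memory is 2.1, which gave this container a 2.1 GB virtual memory limit, less than the 2.3 GB it needed. The fix is to raise the virtual-to-physical memory ratio with an extra setting in yarn-site.xml. The error output was: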

diagnostics: Application application_1532350446978_0001 failed times due to AM Container for appattempt_1532350446978_0001_000002 exited with exitCode: -

Failing this attempt.Diagnostics: Container [pid=,containerID=container_1532350446978_0001_02_000001] is running beyond virtual memory limits. Current usage: 289.7 MB of GB physical memory used; 2.3 GB of 2.1 GB virtual memory used. Killing container.

Stop YARN, add the following property to yarn-site.xml, and then restart YARN:

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>2.5</value>

</property>

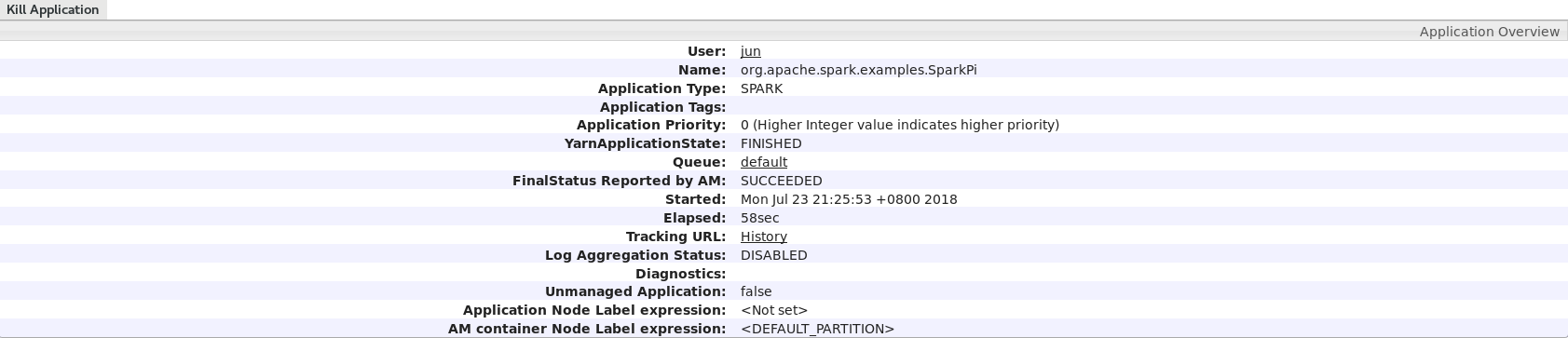
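The effect of the ratio can be checked with a little arithmetic: the virtual memory limit is the container's physical memory allocation multiplied by `yarn.nodemanager.vmem-pmem-ratio`. The sketch below assumes a physical allocation of 1 GB, a figure inferred from the 2.1 GB limit and the default ratio of 2.1 rather than read from the log. `vmem_check` is a hypothetical helper.

```shell
# Hypothetical helper: compute the virtual memory limit for a container
# and compare it with the virtual memory the container actually used.
# Usage: vmem_check <physical-GB> <vmem-pmem-ratio> <used-virtual-GB>
vmem_check() {
    awk -v p="$1" -v r="$2" -v u="$3" 'BEGIN {
        limit = p * r
        verdict = (u <= limit) ? "within limit" : "over limit"
        printf "limit=%.1fGB used=%.1fGB %s\n", limit, u, verdict
    }'
}

vmem_check 1 2.1 2.3   # default ratio: limit=2.1GB used=2.3GB over limit
vmem_check 1 2.5 2.3   # raised ratio:  limit=2.5GB used=2.3GB within limit
```

Under that assumption, a ratio of 2.5 lifts the limit to 2.5 GB, which leaves room for the 2.3 GB the container was using.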

Run the SparkPi program again. The final status field shows that the run succeeded:

-- :: INFO Client: -

client token: N/A

diagnostics: N/A

ApplicationMaster host: 192.168.1.101

ApplicationMaster RPC port:

queue: default

start time:

final status: SUCCEEDED

tracking URL: http://master:18088/proxy/application_1532352327714_0001/

user: jun

-- :: INFO ShutdownHookManager: - Shutdown hook called

-- :: INFO ShutdownHookManager: - Deleting directory /tmp/spark-1ed5bee9-1aa7--b3ec-80ff2b153192

-- :: INFO ShutdownHookManager: - Deleting directory /tmp/spark-7349a4e3--4d09-91ff-e1e48cb59b46

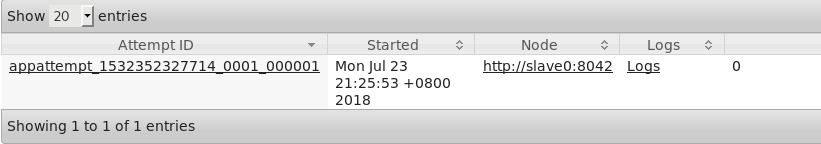

Right-click the tracking URL and choose Open Link to view the job status in a browser.

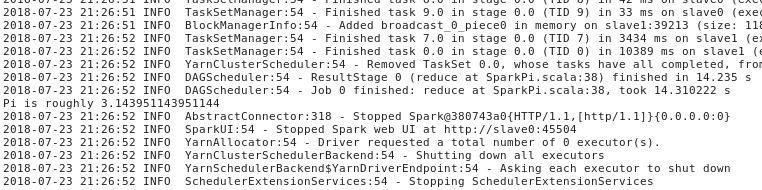

Click logs and then stdout to see the result: Pi is roughly 3.143951143951144
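The result can also be read from the terminal instead of the browser. This sketch assumes YARN log aggregation is enabled, so that `yarn logs -applicationId` can retrieve the container logs after the job finishes. `extract_pi` is a hypothetical filter introduced for illustration.

```shell
# Hypothetical filter: pull the SparkPi result line out of log text on stdin.
# Usage: yarn logs -applicationId <application-id> | extract_pi
extract_pi() {
    grep -o 'Pi is roughly [0-9.]*'
}

# Example (replace <application-id> with the ID shown in the tracking URL):
# yarn logs -applicationId <application-id> | extract_pi
```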
