PyTorch Study Notes (8): Building a Simple Neural Network and Using Sequential

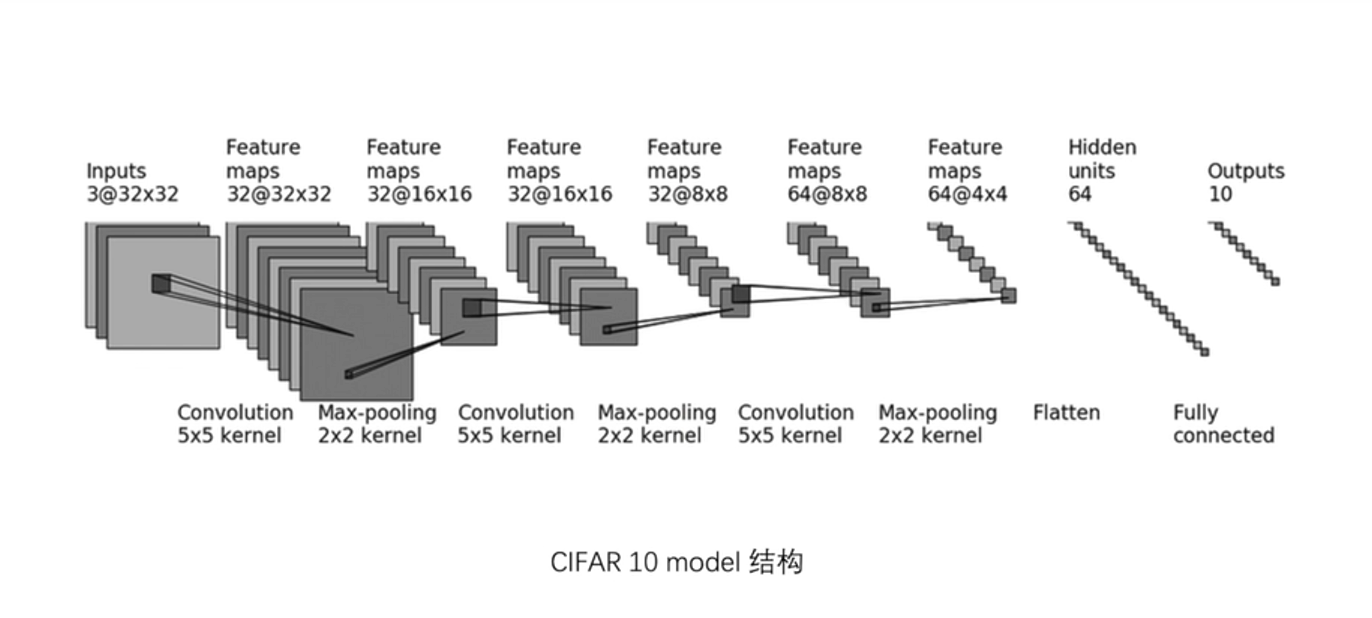

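1. Network diagram

The input is a 3-channel 32×32 image. It passes in turn through a convolution layer (5×5 kernel), a max-pooling layer (2×2 kernel), a convolution layer (5×5 kernel), a max-pooling layer (2×2 kernel), a convolution layer (5×5 kernel), a max-pooling layer (2×2 kernel), a flatten step, and the fully connected layers. The final output has size 10.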

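Notes: (1) The channel count is changed by adjusting the number of convolution kernels (that is, the number of output channels). In nn.Conv2d this is the out_channels parameter.

(2) The first convolution uses 32 kernels of size 3*5*5. The input is convolved with each kernel in turn, which yields 32 feature maps of 32*32. Whether the channel count changes depends on how many kernels are used.

(3) Max pooling does not change the number of channels.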
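The notes above can be checked with a small shape trace. This is only a sketch in plain Python (no PyTorch needed); the `trace_shapes` helper and the layer list are illustrative names, and it assumes the settings used in this network: every convolution keeps height and width (5×5 kernel, stride 1, padding 2) and every 2×2 max pooling halves them.

```python
# Layers of the network as (kind, value): for "conv" the value is out_channels,
# for "pool" it is the pooling kernel size.
LAYERS = [
    ("conv", 32), ("pool", 2),
    ("conv", 32), ("pool", 2),
    ("conv", 64), ("pool", 2),
]


def trace_shapes(channels, size, layers):
    """Return the (channels, height, width) shape after the input and each layer."""
    shapes = [(channels, size, size)]
    for kind, value in layers:
        if kind == "conv":
            # The number of kernels sets the channel count; size is preserved here.
            channels = value
        else:
            # Max pooling keeps the channel count and shrinks height and width.
            size = size // value
        shapes.append((channels, size, size))
    return shapes


shapes = trace_shapes(3, 32, LAYERS)
for shape in shapes:
    print(shape)

# Number of features per sample after flattening the last feature map.
flat_features = shapes[-1][0] * shapes[-1][1] * shapes[-1][2]
print(flat_features)  # 1024
```

The last shape is (64, 4, 4), so flattening gives 64 × 4 × 4 = 1024 values per sample, which is why the first fully connected layer takes in_features=1024.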

Code:

# file : nn_sequential.py
# time : 2022/8/2 9:11 AM
# function : implement a simple neural network
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        # stride defaults to 1, so it could be omitted
        self.conv1 = Conv2d(in_channels=3, out_channels=32, kernel_size=5, stride=1, padding=2)
        self.maxpool1 = MaxPool2d(kernel_size=2)
        self.conv2 = Conv2d(in_channels=32, out_channels=32, kernel_size=5, stride=1, padding=2)
        self.maxpool2 = MaxPool2d(kernel_size=2)
        self.conv3 = Conv2d(in_channels=32, out_channels=64, kernel_size=5, stride=1, padding=2)
        self.maxpool3 = MaxPool2d(kernel_size=2)
        self.flatten = Flatten()
        self.linear1 = Linear(in_features=1024, out_features=64)
        self.linear2 = Linear(in_features=64, out_features=10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = self.maxpool2(x)
        x = self.conv3(x)
        x = self.maxpool3(x)
        x = self.flatten(x)
        x = self.linear1(x)
        x = self.linear2(x)
        return x


tudui = Tudui()
# Print the structure of the network
print(tudui)
# batch_size = 64, channels = 3, size 32*32
input = torch.ones((64, 3, 32, 32))
output = tudui(input)
print(output.shape)  # print the shape of the output

Output:

Tudui(
  (conv1): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (conv2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (conv3): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
  (maxpool3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
  (flatten): Flatten(start_dim=1, end_dim=-1)
  (linear1): Linear(in_features=1024, out_features=64, bias=True)
  (linear2): Linear(in_features=64, out_features=10, bias=True)
)
torch.Size([64, 10])

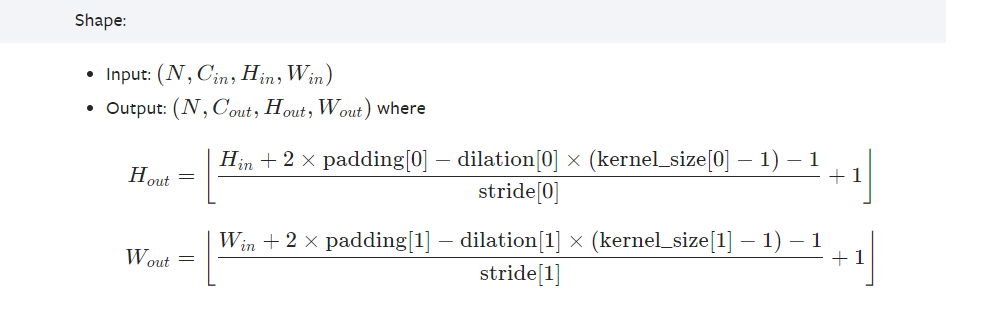

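Additional explanation:

Here Hout = 32, Hin (the input height) = 32, dilation[0] = 1 (the default), and kernel_size[0] = 5. Substituting these into the formula for Hout,

the calculation is as follows:

32 = ((32 + 2×padding[0] - 1×(5-1) - 1) / stride[0]) + 1, which simplifies to:

27 + 2×padding[0] = 31×stride[0]. With stride[0] = 1 this gives padding[0] = 2. (Note: with stride[0] = 2 the required padding would be about 17.5, which is far too large, so that case is discarded.)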
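The calculation above can be reproduced with a few lines of plain Python. This is a minimal sketch, assuming the output-size formula from the nn.Conv2d documentation, which rounds the division down; the function names `conv_out_size` and `paddings_preserving_size` are illustrative and not part of PyTorch.

```python
def conv_out_size(h_in, kernel_size, stride=1, padding=0, dilation=1):
    """Output height (or width) of a convolution, using floor division."""
    return (h_in + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


def paddings_preserving_size(h_in, kernel_size, stride, max_padding=32):
    """All padding values up to max_padding for which the output size equals h_in."""
    return [
        p for p in range(max_padding + 1)
        if conv_out_size(h_in, kernel_size, stride=stride, padding=p) == h_in
    ]


print(conv_out_size(32, 5, stride=1, padding=2))   # 32
print(paddings_preserving_size(32, 5, stride=1))   # [2]
print(paddings_preserving_size(32, 5, stride=2))   # [18]
```

With stride 1 the only padding that keeps a 32×32 input at 32×32 is 2. With stride 2 the exact equation gives 17.5, and because of the floor the smallest working integer is 18, which is impractically large for a 5×5 kernel.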

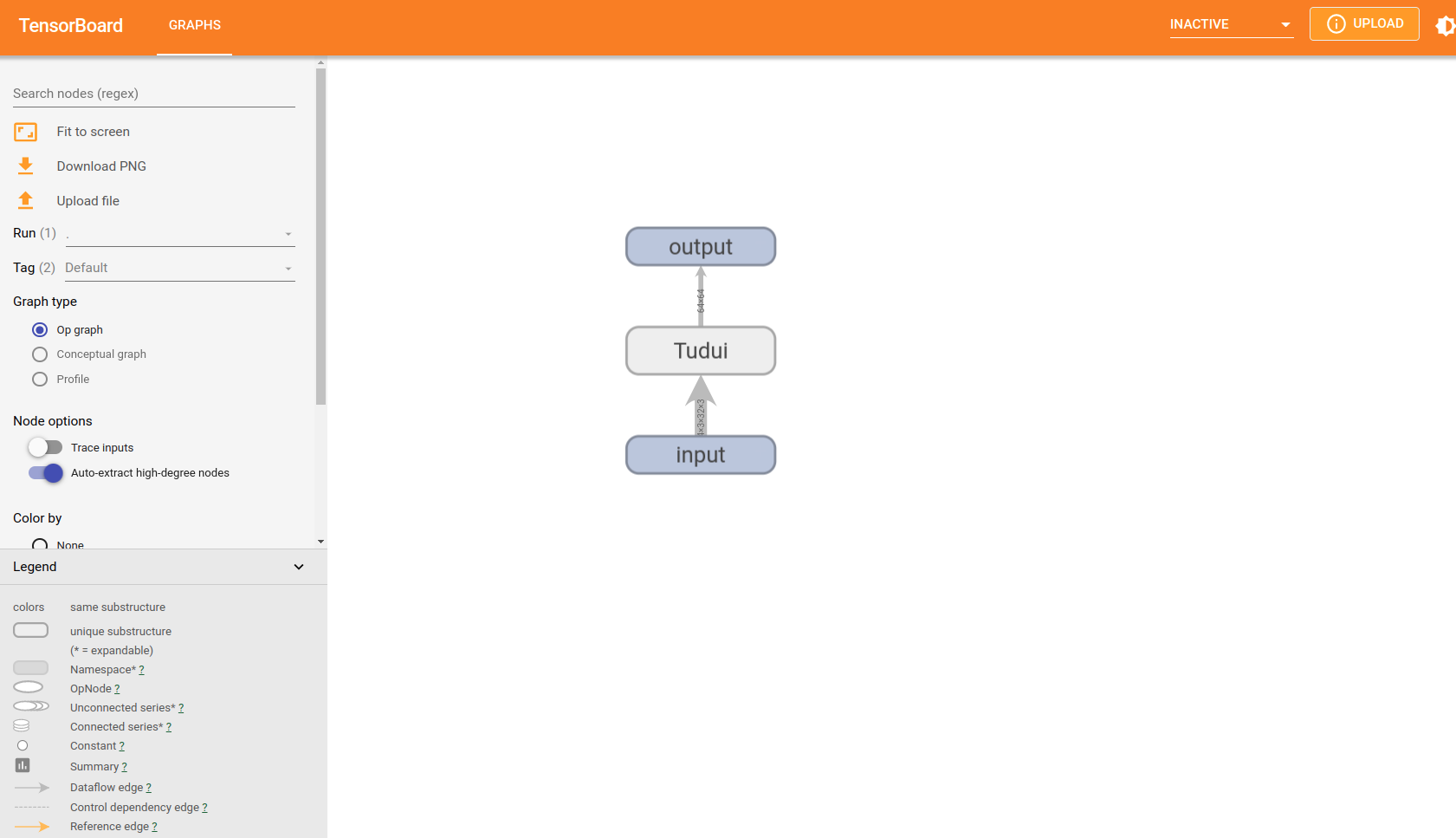

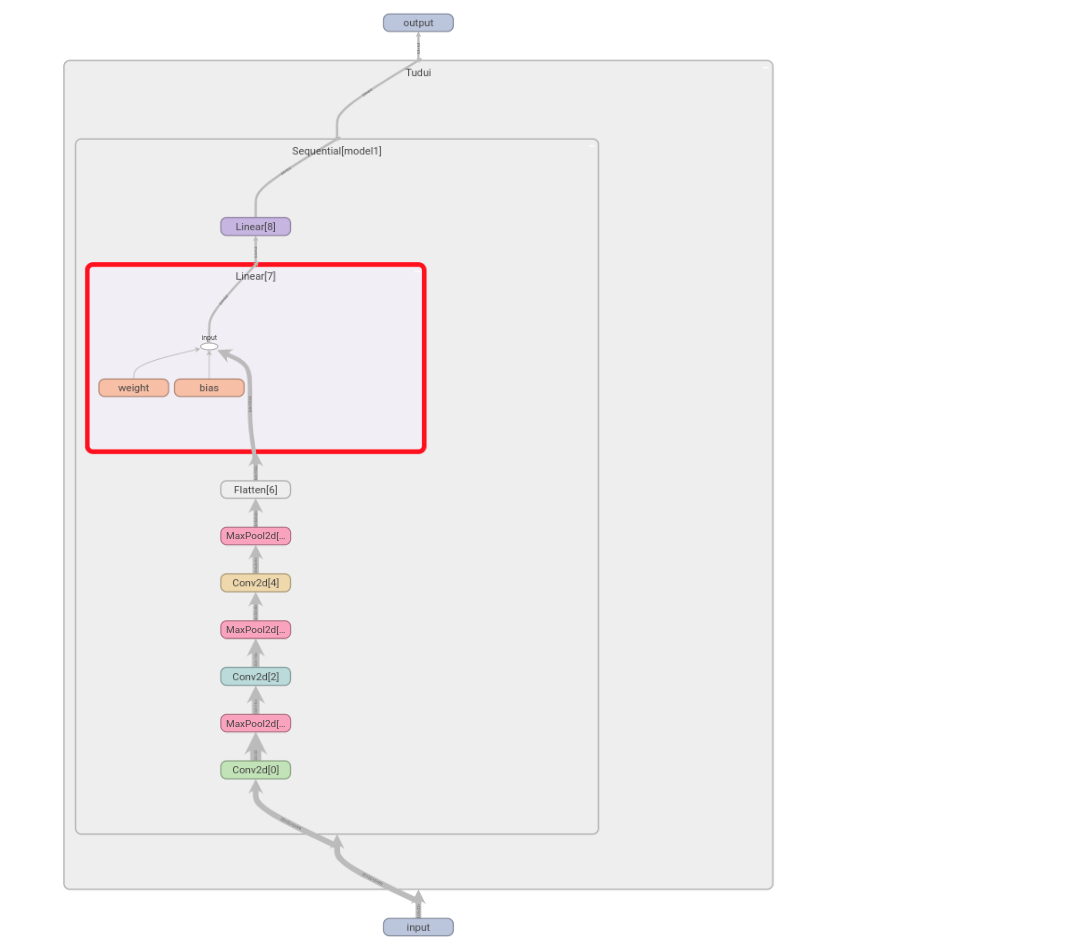

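2. Sequential

Sequential is a sequential container: modules are added to it in the order in which they are passed in. It is listed under Containers in the torch.nn section of the official PyTorch documentation. Using Sequential when building a neural network makes the code more concise.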
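As a small illustration of the ordering behaviour described above, the sketch below builds a tiny Sequential from Flatten and Linear only. The layer sizes and the input shape are arbitrary values chosen for this example and have nothing to do with the network in this note.

```python
import torch
from torch import nn

# Modules are stored, and later applied, in the order they are passed in.
model = nn.Sequential(
    nn.Flatten(),      # (2, 3, 2, 2) -> (2, 12)
    nn.Linear(12, 8),  # (2, 12) -> (2, 8)
    nn.Linear(8, 4),   # (2, 8) -> (2, 4)
)

x = torch.ones((2, 3, 2, 2))
y = model(x)  # one call runs all three modules in sequence

print(len(model))  # number of modules in the container
print(model[1])    # modules can be accessed by position
print(y.shape)     # torch.Size([2, 4])
```

Because the container handles the chaining, no forward method with one line per layer is needed for this small model.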

Now the same network as above is built with Sequential, and TensorBoard is used to display the details of the computation graph. The code is as follows:

# file : nn_sequential.py
# time : 2022/8/2 9:11 AM
# function : Sequential
import torch
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.tensorboard import SummaryWriter


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            # 10 outputs, matching linear2 in the first version of the network
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x


tudui = Tudui()
print(tudui)
input = torch.ones((64, 3, 32, 32))
output = tudui(input)
print(output.shape)

# Write the computation graph to the log directory for TensorBoard
writer = SummaryWriter("../logs")
writer.add_graph(tudui, input)
writer.close()

Output:

Tudui(
  (model1): Sequential(
    (0): Conv2d(3, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (2): Conv2d(32, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (4): Conv2d(32, 64, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
    (5): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
    (6): Flatten(start_dim=1, end_dim=-1)
    (7): Linear(in_features=1024, out_features=64, bias=True)
    (8): Linear(in_features=64, out_features=10, bias=True)
  )
)
torch.Size([64, 10])

In TensorBoard, double-click a node in the graph to expand it and view its details.
