Linear Regression Implementation (MATLAB)

2024-09-05 02:41:00

1 Cost function implementation

function J = computeCost(X, y, theta)

%COMPUTECOST Compute cost for linear regression

% J = COMPUTECOST(X, y, theta) computes the cost of using theta as the

% parameter for linear regression to fit the data points in X and y

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost of a particular choice of theta

% You should set J to the cost.

predictions = X * theta;

sqrErrors = (predictions-y) .^ 2;

J = 1/(2*m) * sum(sqrErrors);

% =========================================================================

end

1.1 Detailed explanation

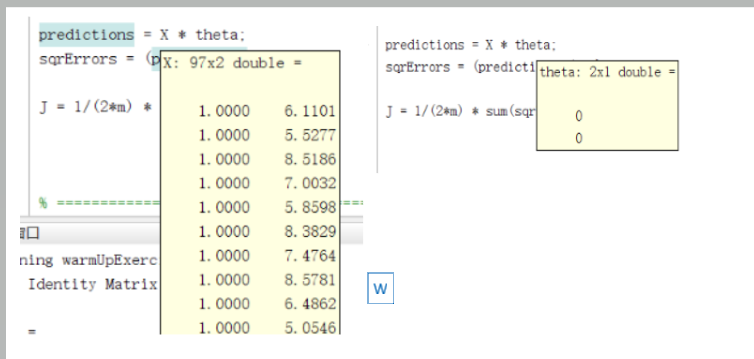

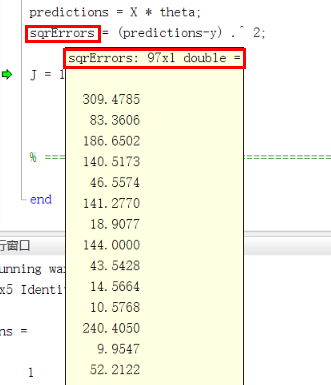

The cost is computed in vectorized (matrix) form. In other languages, the same result can be obtained with an explicit loop.
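For reference, the sum form and the vectorized form of the cost computed by computeCost can be written as follows. This is a sketch in standard notation: the symbols $h_\theta$ and $x^{(i)}$ (the i-th row of X, written as a column vector) do not appear in the text above and are assumed here.

```latex
J(\theta) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2
          = \frac{1}{2m} (X\theta - y)^{\top} (X\theta - y),
\qquad h_\theta(x^{(i)}) = \theta^{\top} x^{(i)}
```

The two forms agree because the i-th entry of $X\theta - y$ is exactly $h_\theta(x^{(i)}) - y^{(i)}$.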

predictions = X * theta; % the capital X here is a matrix

sqrErrors = (predictions-y) .^ 2;
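To illustrate the remark that a loop can replace the vectorized form, here is a minimal sketch comparing the two. The toy values of X, y, and theta are made up for illustration, and the script is written so that it should also run in GNU Octave.

```matlab
% Hypothetical toy data, chosen only for illustration
X = [1 1; 1 2; 1 3];      % first column of ones is the intercept term
y = [1; 2; 4];
theta = [0; 1];
m = length(y);

% Vectorized form, as in computeCost
J_vec = 1/(2*m) * sum((X*theta - y) .^ 2);

% Loop form: accumulate the squared error one example at a time
J_loop = 0;
for i = 1:m
    prediction = X(i, :) * theta;              % prediction for example i
    J_loop = J_loop + (prediction - y(i))^2;   % add its squared error
end
J_loop = J_loop / (2*m);

fprintf('vectorized: %.6f, loop: %.6f\n', J_vec, J_loop);
```

With these values the predictions are [1; 2; 3], so only the last example contributes an error and both forms evaluate to 1/6.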

2 Gradient descent

function [theta, J_history] = gradientDescent(X, y, theta, alpha, num_iters)

%GRADIENTDESCENT Performs gradient descent to learn theta

% theta = GRADIENTDESCENT(X, y, theta, alpha, num_iters) updates theta by

% taking num_iters gradient steps with learning rate alpha

% Initialize some useful values

m = length(y); % number of training examples

J_history = zeros(num_iters, 1);

for iter = 1:num_iters

% ====================== YOUR CODE HERE ======================

% Instructions: Perform a single gradient step on the parameter vector

% theta.

%

% Hint: While debugging, it can be useful to print out the values

% of the cost function (computeCost) and gradient here.

%

theta_temp = theta;

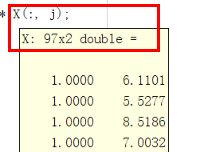

for j = 1:size(X, 2)

theta_temp(j) = theta(j)-alpha*(1/m)*(X*theta - y)' * X(:, j);

end

theta = theta_temp;

% ============================================================

% Save the cost J in every iteration

J_history(iter) = computeCost(X, y, theta);

end

end

2.1 Explanation

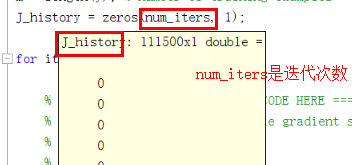

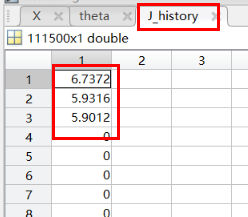

J_history = zeros(num_iters, 1);

theta_temp = theta;

Store theta in a temporary copy so that all of its components are updated simultaneously.
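A small sketch of why the temporary copy matters: if theta is overwritten inside the loop, later components are computed from an already modified theta. The toy data, alpha = 0.1, and the variable names theta_sim and theta_seq are assumptions made for this illustration.

```matlab
% Hypothetical toy data, chosen only for illustration
X = [1 1; 1 2; 1 3];
y = [1; 2; 4];
m = length(y);
alpha = 0.1;
theta0 = [0; 0];

% Simultaneous update: every component is computed from the old theta
theta_sim = theta0;
theta_temp = theta_sim;
for j = 1:size(X, 2)
    theta_temp(j) = theta_sim(j) - alpha*(1/m)*(X*theta_sim - y)' * X(:, j);
end
theta_sim = theta_temp;

% In-place update: theta(2) is computed after theta(1) has already changed
theta_seq = theta0;
for j = 1:size(X, 2)
    theta_seq(j) = theta_seq(j) - alpha*(1/m)*(X*theta_seq - y)' * X(:, j);
end

disp([theta_sim, theta_seq]);   % columns: simultaneous vs in-place result
```

The first components agree, because both are computed from theta0, but the second components differ. Only the simultaneous version is a step along the gradient evaluated at a single point.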

for j = 1:size(X, 2)

theta_temp(j) = theta(j)-alpha*(1/m)*(X*theta - y)' * X(:, j);

end

Update theta.
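Written out with the code's own symbols, the loop above applies the following per-component update. This is a sketch of the batch gradient descent rule the code implements; $n$ stands for size(X, 2) and is introduced here for notation only.

```latex
\theta_j := \theta_j - \alpha \, \frac{1}{m} \sum_{i=1}^{m} \bigl( (X\theta)_i - y_i \bigr) X_{ij}
\qquad \text{for } j = 1, \dots, n \text{ (all } j \text{ updated simultaneously)}
```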

(X*theta - y)' is the transpose of the error vector, i.e. a row vector.

(X*theta - y)' * X(:, j);

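This product performs the summation over all training examples; it is equivalent to sum((X*theta - y) .* X(:, j)).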
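The equivalence between the row-times-column product and an explicit sum can be checked numerically. The sketch below uses made-up toy values. The names errors, via_product, via_sum, and grad_all are introduced only for this example.

```matlab
% Hypothetical toy data, chosen only for illustration
X = [1 1; 1 2; 1 3];
y = [1; 2; 4];
theta = [0.5; 0.5];
errors = X*theta - y;                  % m-by-1 column of residuals
j = 2;

via_product = errors' * X(:, j);       % (1-by-m) * (m-by-1) gives a scalar
via_sum     = sum(errors .* X(:, j));  % elementwise product, then sum

% All components at once: X' * errors stacks the per-column sums
grad_all = X' * errors;

fprintf('product: %g, sum: %g, grad_all(j): %g\n', via_product, via_sum, grad_all(j));
```

Since X' * errors yields every per-column sum in one step, the inner loop over j could also be replaced by a single vectorized update, theta = theta - alpha*(1/m)*(X' * (X*theta - y)). That variant needs no temporary copy, because the right-hand side is evaluated in full before the assignment.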

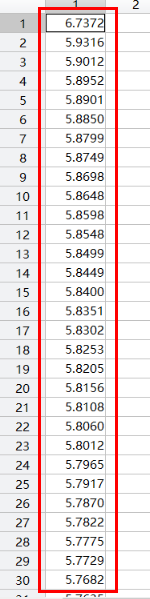

J_history(iter) = computeCost(X, y, theta);

Record the value of the cost function at each iteration.

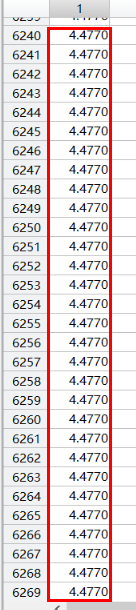

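As the number of iterations increases, the cost function converges, and theta settles to its final value.

While the cost is still decreasing, theta is still changing.

Eventually the cost stops changing, which means gradient descent has converged.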
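The convergence behaviour described above can be reproduced on a small synthetic problem. The following is a self-contained sketch, not the exercise's own driver script: the data (generated from y = 1 + 2x), alpha = 0.1, and num_iters = 2000 are assumptions. It uses the vectorized update and an inline anonymous function in place of gradientDescent and computeCost, so that it runs without separate function files.

```matlab
% Synthetic data generated from y = 1 + 2*x (assumed for illustration)
x = [1; 2; 3; 4];
y = 1 + 2*x;
m = length(y);
X = [ones(m, 1), x];                  % prepend the intercept column

% Same formula as computeCost, inlined as an anonymous function
cost = @(theta) 1/(2*m) * sum((X*theta - y) .^ 2);

theta = zeros(2, 1);
alpha = 0.1;                          % learning rate (assumed)
num_iters = 2000;                     % number of gradient steps (assumed)
J_history = zeros(num_iters, 1);

for iter = 1:num_iters
    % Vectorized simultaneous update of all components of theta
    theta = theta - alpha * (1/m) * (X' * (X*theta - y));
    J_history(iter) = cost(theta);    % record the cost after this step
end

fprintf('theta = [%.4f; %.4f], final cost = %.3e\n', theta(1), theta(2), J_history(end));
```

Plotting J_history against the iteration index, for example with plot(1:num_iters, J_history), should show the cost dropping quickly and then flattening out as theta approaches [1; 2].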
