A TensorFlow program on the MNIST dataset that combines several techniques

2024-10-19 08:57:07

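The program trains a two-layer network on MNIST with an exponentially decaying learning rate, uses regularization to avoid overfitting, and applies a moving-average model to make the final model more robust. The code targets the TensorFlow 1.x API (tf.placeholder, tf.Session, tf.contrib).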
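To make the first two techniques concrete, here is a minimal plain-Python sketch of the arithmetic behind them, with no TensorFlow required. It follows the documented formulas: the staircase schedule `base * decay_rate ** (global_step // decay_steps)` and an L2 penalty of `scale * sum(w^2) / 2`. The helper names `decayed_learning_rate` and `l2_penalty` are made up for this illustration, and the 550 decay steps assume the usual 55,000-image training split divided by a batch size of 100.

```python
def decayed_learning_rate(base, decay_rate, global_step, decay_steps, staircase=True):
    """Plain-Python version of the exponential decay formula (illustrative helper)."""
    if staircase:
        # Staircase mode drops the fractional part, so the rate
        # only changes once every decay_steps steps
        exponent = global_step // decay_steps
    else:
        exponent = global_step / decay_steps
    return base * decay_rate ** exponent


def l2_penalty(weights, scale):
    """L2 penalty scale * sum(w^2) / 2 over a nested list of weights (illustrative helper)."""
    return scale * sum(w * w for row in weights for w in row) / 2.0


# Learning rate at a few steps, assuming 550 steps per pass over the data
for step in (0, 549, 550, 1100, 4999):
    print(step, decayed_learning_rate(0.8, 0.99, step, 550))

# Penalty for a tiny hypothetical 2 x 2 weight matrix
print(l2_penalty([[1.0, 2.0], [3.0, 4.0]], 0.0001))
```

With staircase mode the learning rate stays at its base value for the whole first pass over the data and is multiplied by the decay rate once per pass afterwards, so it shrinks slowly over the 5000 training steps.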

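import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

INPUT_NODE = 784     # number of input nodes (28 x 28 pixels per image)
OUTPUT_NODE = 10     # number of output nodes (one per digit class)
LAYER1_NODE = 500    # number of nodes in the hidden layer
BATCH_SIZE = 100     # number of samples in each training batch

# Model hyperparameters
LEARNING_RATE_BASE = 0.8        # initial learning rate
LEARNING_RATE_DECAY = 0.99      # decay rate of the learning rate
REGULARAZTION_RATE = 0.0001     # weight of the L2 regularization term in the loss
TRAINING_STEPS = 5000           # number of training steps
MOVING_AVERAGE_DECAY = 0.99     # decay rate of the moving average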

def inference(input_tensor, avg_class, weights1, biases1, weights2, biases2):
    """Forward pass of the two-layer network; returns the output logits."""
    if avg_class is None:
        # No moving-average class: use the current parameter values
        layer1 = tf.nn.relu(tf.matmul(input_tensor, weights1) + biases1)
        return tf.matmul(layer1, weights2) + biases2
    else:
        # Moving-average class given: use the averaged value of each parameter
        layer1 = tf.nn.relu(tf.matmul(input_tensor, avg_class.average(weights1)) + avg_class.average(biases1))
        return tf.matmul(layer1, avg_class.average(weights2)) + avg_class.average(biases2)

def train(mnist):
    x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')
    y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

    # Hidden-layer parameters
    weights1 = tf.Variable(tf.truncated_normal([INPUT_NODE, LAYER1_NODE], stddev=0.1))
    biases1 = tf.Variable(tf.constant(0.1, shape=[LAYER1_NODE]))

    # Output-layer parameters
    weights2 = tf.Variable(tf.truncated_normal([LAYER1_NODE, OUTPUT_NODE], stddev=0.1))
    biases2 = tf.Variable(tf.constant(0.1, shape=[OUTPUT_NODE]))

    # Forward pass without the moving-average class
    y = inference(x, None, weights1, biases1, weights2, biases2)

    # Training step counter (not trainable) and the moving-average class that uses it
    global_step = tf.Variable(0, trainable=False)
    variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
    # Maintain a moving average of every trainable variable
    variables_averages_op = variable_averages.apply(tf.trainable_variables())
    # Forward pass using the averaged parameters
    average_y = inference(x, variable_averages, weights1, biases1, weights2, biases2)

    # Cross entropy and its mean over the batch
    cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
    cross_entropy_mean = tf.reduce_mean(cross_entropy)

    # Loss: mean cross entropy plus L2 regularization of the weights (biases are not regularized)
    regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)
    regularization = regularizer(weights1) + regularizer(weights2)
    loss = cross_entropy_mean + regularization

    # Exponentially decaying learning rate
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE,                      # base learning rate
        global_step,                             # current training step
        mnist.train.num_examples / BATCH_SIZE,   # steps needed for one pass over the training data
        LEARNING_RATE_DECAY,                     # decay rate
        staircase=True)

    # Minimize the loss; minimize() also increments global_step
    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

    # Run backpropagation and the moving-average update of every parameter as one op
    with tf.control_dependencies([train_step, variables_averages_op]):
        train_op = tf.no_op(name='train')

    # Accuracy of the moving-average model
    correct_prediction = tf.equal(tf.argmax(average_y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# 初始化会话,并开始训练过程。

with tf.Session() as sess:

tf.global_variables_initializer().run()

validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels}

test_feed = {x: mnist.test.images, y_: mnist.test.labels}

# 循环的训练神经网络。

for i in range(TRAINING_STEPS):

if i % 1000 == 0:

validate_acc = sess.run(accuracy, feed_dict=validate_feed)

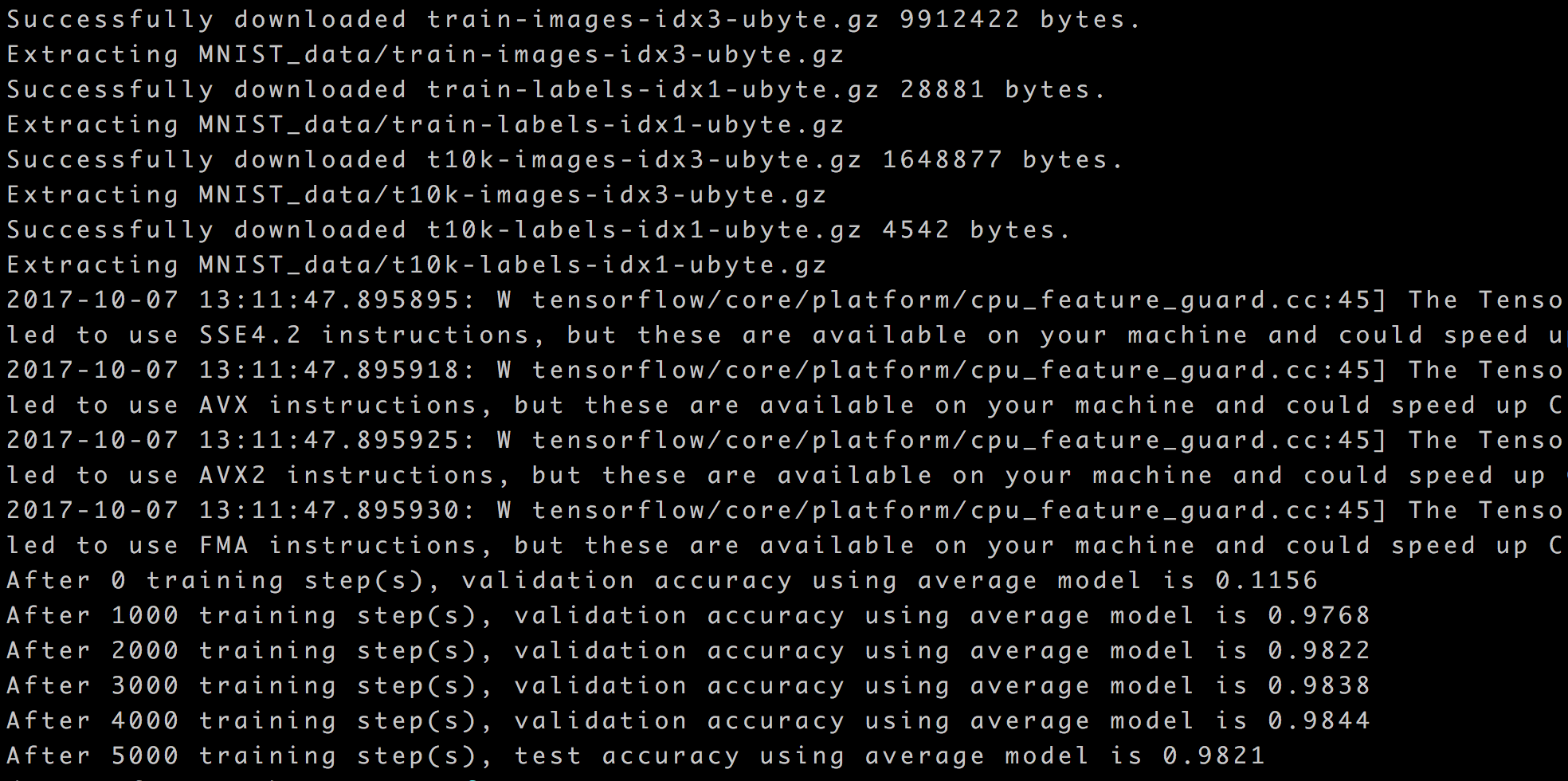

print("After %d training step(s), validation accuracy using average model is %g " % (i, validate_acc))

xs,ys=mnist.train.next_batch(BATCH_SIZE)

sess.run(train_op,feed_dict={x:xs,y_:ys})

test_acc=sess.run(accuracy,feed_dict=test_feed)

print(("After %d training step(s), test accuracy using average model is %g" %(TRAINING_STEPS, test_acc)))

def main(argv=None):

mnist = input_data.read_data_sets("MNIST_data", one_hot=True)

train(mnist)

if __name__=='__main__':

main()

结果:
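The accuracies printed by the program come from the moving-average copies of the parameters, not from the raw weights. The sketch below shows that update rule in plain Python, following the documented behavior of `tf.train.ExponentialMovingAverage` when a step counter is supplied: the effective decay is `min(decay, (1 + num_updates) / (10 + num_updates))` and each update sets `shadow = d * shadow + (1 - d) * variable`. The function names and the fake "variable" that grows by 1.0 per step are assumptions made purely for illustration.

```python
def ema_decay(decay, num_updates):
    """Effective decay when a step counter is supplied (illustrative helper)."""
    return min(decay, (1.0 + num_updates) / (10.0 + num_updates))


def update_shadow(shadow, variable, decay, num_updates):
    """One moving-average update of a single scalar parameter."""
    d = ema_decay(decay, num_updates)
    return d * shadow + (1.0 - d) * variable


# The shadow value starts equal to the variable's initial value
variable = 0.0
shadow = variable
for step in range(1, 6):
    variable += 1.0  # hypothetical stand-in for one gradient-descent update
    shadow = update_shadow(shadow, variable, 0.99, step)
    print(step, variable, shadow)
```

Because the effective decay is small while the step counter is low, the averaged parameters follow the raw parameters closely early in training and only later settle into the slow 0.99 average.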
