(Draft, to be tidied) Flume in practice: the hive-logs-to-HDFS example fails at runtime with NoClassDefFoundError

1.

2. Error log

The command was: bin/flume-ng agent --name a2 --conf conf/ --conf-file job/file-hdfs.conf

Info: Sourcing environment configuration script /opt/modules/flume/conf/flume-env.sh

Info: Including Hive libraries found via () for Hive access

+ exec /opt/modules/jdk1.8.0_121/bin/java -Xmx20m -cp '/opt/modules/flume/conf:/opt/modules/flume/lib/*:/lib/*' -Djava.library.path= org.apache.flume.node.Application --name a2 --conf-file job/file-hdfs.conf

Exception in thread "SinkRunner-PollingRunner-DefaultSinkProcessor" java.lang.NoClassDefFoundError: org/apache/htrace/SamplerBuilder

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:635)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:619)

at org.apache.hadoop.hdfs.DistributedFileSystem.initialize(DistributedFileSystem.java:149)

at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2653)

at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:92)

at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:2687)

at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2669)

3. Partial improvement

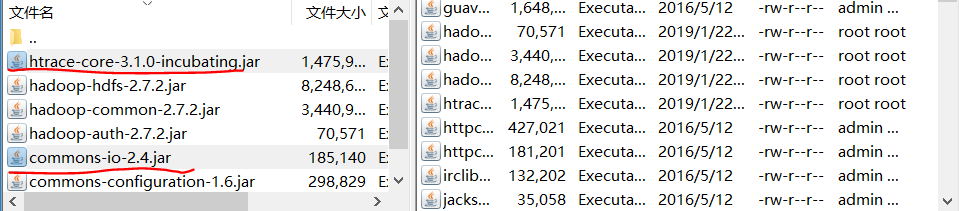

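I copied the two jars shown in the figure into Flume's lib directory.

Rerunning Flume produced no error, but also no visible activity, as shown in the figure.

Hive was started at the same time, yet no /flume/%Y%m%d/%H directory appeared on HDFS.

Problem still unresolved!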

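4. Further experiments

I removed the two jars, shut down the NameNode on machine 02 (the one the sink in the conf points to), and then started Flume on machine 01. No error appeared, but there was still no flume directory on HDFS.

Guess: perhaps no error shows up because the JVM still remembers the earlier variables?

Problem still unresolved!

Case 3 behaved the same way: no flume folder on HDFS.

I added console logging to the command: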

bin/flume-ng agent --conf conf/ --name a3 --conf-file job/dir-hdfs.conf -Dflume.root.logger=INFO,console

and found this error in the log:

-- ::, (conf-file-poller-) [INFO - org.apache.flume.node.Application.startAllComponents(Application.java:)] Starting Source r3

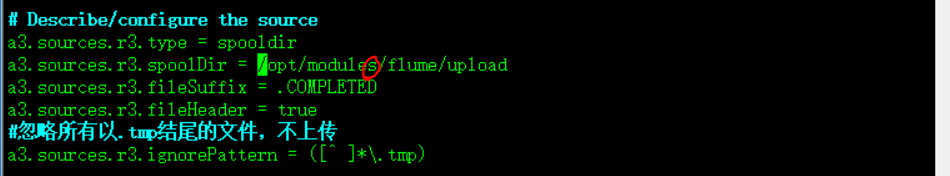

-- ::, (lifecycleSupervisor--) [INFO - org.apache.flume.source.SpoolDirectorySource.start(SpoolDirectorySource.java:)] SpoolDirectorySource source starting with directory: /opt/module/flume/upload

-- ::, (lifecycleSupervisor--) [ERROR - org.apache.flume.lifecycle.LifecycleSupervisor$MonitorRunnable.run(LifecycleSupervisor.java:)] Unable to start EventDrivenSourceRunner: { source:Spool Directory source r3: { spoolDir: /opt/module/flume/upload } } - Exception follows.

java.lang.IllegalStateException: Directory does not exist: /opt/module/flume/upload

at com.google.common.base.Preconditions.checkState(Preconditions.java:)

at org.apache.flume.client.avro.ReliableSpoolingFileEventReader.<init>(ReliableSpoolingFileEventReader.java:)

at org.apache.flume.client.avro.ReliableSpoolingFileEventReader.<init>(ReliableSpoolingFileEventReader.java:)

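The cause of the error in the log above:

the path in the conf was missing an s (/opt/module/flume/upload instead of /opt/modules/flume/upload).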
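A typo like this can be caught before the agent starts. The following is a minimal sketch, not part of Flume: the helper names `spool_dirs` and `check_spool_dirs` are hypothetical, and it assumes the conf uses the usual `<agent>.sources.<name>.spoolDir = <path>` form with paths that contain no spaces.

```shell
#!/bin/sh
# Print every spoolDir value configured in a Flume properties file.
# Matches lines such as: a3.sources.r3.spoolDir = /opt/modules/flume/upload
# Commented-out lines (starting with #) are skipped.
spool_dirs() {
    sed -n 's/^[^#]*\.spoolDir[[:space:]]*=[[:space:]]*//p' "$1"
}

# Report each configured spooling directory that does not exist.
# Returns non-zero if at least one directory is missing.
check_spool_dirs() {
    status=0
    for dir in $(spool_dirs "$1"); do
        if [ ! -d "$dir" ]; then
            echo "missing spoolDir: $dir"
            status=1
        fi
    done
    return "$status"
}

# Example (run from the Flume home directory):
# check_spool_dirs job/dir-hdfs.conf && bin/flume-ng agent --conf conf/ --name a3 --conf-file job/dir-hdfs.conf
```

Run against the conf from this case, the check would have flagged /opt/module/flume/upload before the source ever tried to start.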

After correcting the path, I reran Flume:

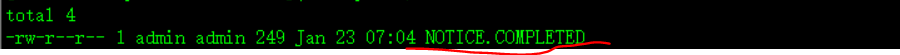

I then copied the NOTICE file into upload and looked at the contents of the upload directory.

However, the log printed by Flume showed:

[ERROR - org.apache.flume.sink.hdfs.HDFSEventSink.process(HDFSEventSink.java:447)] process failed

java.lang.NoClassDefFoundError: org/apache/htrace/SamplerBuilder

-- ::, (lifecycleSupervisor--) [INFO - org.apache.flume.instrumentation.MonitoredCounterGroup.start(MonitoredCounterGroup.java:)] Component type: SOURCE, name: r3 started

-- ::, (pool--thread-) [INFO - org.apache.flume.client.avro.ReliableSpoolingFileEventReader.readEvents(ReliableSpoolingFileEventReader.java:)] Last read took us just up to a file boundary. Rolling to the next file, if there is one.

-- ::, (pool--thread-) [INFO - org.apache.flume.client.avro.ReliableSpoolingFileEventReader.rollCurrentFile(ReliableSpoolingFileEventReader.java:)] Preparing to move file /opt/modules/flume/upload/NOTICE to /opt/modules/flume/upload/NOTICE.COMPLETED

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.HDFSDataStream.configure(HDFSDataStream.java:)] Serializer = TEXT, UseRawLocalFileSystem = false

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:)] Creating hdfs://hadoop-senior02.itguigu.com:9000/flume/upload/20190123/07/upload-.1548198334086.tmp

-- ::, (hdfs-k3-call-runner-) [WARN - org.apache.hadoop.util.NativeCodeLoader.<clinit>(NativeCodeLoader.java:)] Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [ERROR - org.apache.flume.sink.hdfs.HDFSEventSink.process(HDFSEventSink.java:)] process failed

java.lang.NoClassDefFoundError: org/apache/htrace/SamplerBuilder

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem.initialize(DistributedFileSystem.java:)

After some searching: flume/lib is missing htrace-core-3.1.0-incubating.jar. In a Maven project it can be installed with mvn install (see http://blog.51cto.com/enetq/1827028). I simply located the jar and copied it into lib/ by hand.
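The manual copy can be scripted. This is a rough sketch under assumptions: `copy_jar` is a hypothetical helper, and the example paths (a Hadoop installation under `$HADOOP_HOME` that ships the htrace jar somewhere below share/hadoop) are guesses about the environment, not something the log confirms. The jar version should match the Hadoop client jars Flume is using.

```shell
#!/bin/sh
# Copy the first jar matching a name pattern from a source tree into a lib directory.
# usage: copy_jar <name-pattern> <source-root> <dest-lib-dir>
# Returns non-zero if no matching jar is found.
copy_jar() {
    jar=$(find "$2" -type f -name "$1" 2>/dev/null | head -n 1)
    if [ -z "$jar" ]; then
        echo "no jar matching $1 under $2" >&2
        return 1
    fi
    cp "$jar" "$3"/ && echo "copied $jar to $3"
}

# Example (both paths are assumptions, adjust to your installation):
# copy_jar 'htrace-core-*.jar' "$HADOOP_HOME/share/hadoop" /opt/modules/flume/lib
```

Restart the agent after copying, since the classpath is only read when the JVM starts.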

With that solved, I continued with cp NOTICE upload/, but Flume reported another error. The log:

java.lang.NoClassDefFoundError: org/apache/commons/io/Charsets

Info: Sourcing environment configuration script /opt/modules/flume/conf/flume-env.sh

Info: Including Hive libraries found via () for Hive access

+ exec /opt/modules/jdk1.8.0_121/bin/java -Xmx20m -cp '/opt/modules/flume/conf:/opt/modules/flume/lib/*:/lib/*' -Djava.library.path= org.apache.flume.node.Application -n a3 -f job/dir-hdfs.conf

Exception in thread "SinkRunner-PollingRunner-DefaultSinkProcessor" java.lang.NoClassDefFoundError: org/apache/commons/io/Charsets

at org.apache.hadoop.ipc.Server.<clinit>(Server.java:)

at org.apache.hadoop.ipc.ProtobufRpcEngine.<clinit>(ProtobufRpcEngine.java:)

at java.lang.Class.forName0(Native Method)

at java.lang.Class.forName(Class.java:)

Fix: put commons-io-2.4.jar into the flume/lib/ directory.
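Both NoClassDefFoundError cases come down to the same question: does any jar on Flume's classpath contain the class named in the exception? A small sketch for answering it follows. The helper `jars_with_class` is hypothetical and relies on the `unzip` tool being installed; a jar that cannot be read is simply treated as not containing the class.

```shell
#!/bin/sh
# List the jars in a directory that contain a given class.
# usage: jars_with_class <class/path/with/slashes> <lib-dir>
# Returns non-zero when no jar contains the class.
jars_with_class() {
    found=1
    for jar in "$2"/*.jar; do
        [ -f "$jar" ] || continue
        # unzip -l prints the archive listing; look for the .class entry by fixed string
        if unzip -l "$jar" 2>/dev/null | grep -qF "$1.class"; then
            echo "$jar"
            found=0
        fi
    done
    return "$found"
}

# Example, using the class names from the two stack traces:
# jars_with_class org/apache/htrace/SamplerBuilder /opt/modules/flume/lib
# jars_with_class org/apache/commons/io/Charsets /opt/modules/flume/lib
```

If the second call prints nothing even though some commons-io jar is already in lib, that jar is probably an older release that predates the class, which would explain why adding commons-io-2.4.jar helps.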

Repeating the procedure then produced an HDFS IO error. The log:

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [WARN - org.apache.flume.sink.hdfs.HDFSEventSink.process(HDFSEventSink.java:)] HDFS IO error

java.net.ConnectException: Call From hadoop-senior01/192.168.10.20 to hadoop-senior02: failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:)

(Aside) file-hdfs.conf had also failed earlier; its configuration is now mostly fixed. Running it produces the problem shown in the log below:

xecutor.java:)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:)

at java.lang.Thread.run(Thread.java:)

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:)] Creating hdfs://hadoop-senior01/flume/20190123/15/logs-.1548230362663.tmp

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [WARN - org.apache.flume.sink.hdfs.HDFSEventSink.process(HDFSEventSink.java:)] HDFS IO error

org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.ipc.StandbyException): Operation category WRITE is not supported in state standby

at org.apache.hadoop.hdfs.server.namenode.ha.StandbyState.checkOperation(StandbyState.java:)

Writes cannot go through the standby NameNode of an HA pair. After pointing the sink at the active NameNode, Flume ran successfully!
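To find out which NameNode is active before editing the sink path, `hdfs haadmin -getServiceState` can be queried for each NameNode. The sketch below assumes the service IDs are nn1 and nn2, which is hypothetical; the real IDs are whatever dfs.ha.namenodes.<nameservice> lists in hdfs-site.xml. The helper `active_namenodes` is likewise only illustrative.

```shell
#!/bin/sh
# Read "<service-id> <state>" lines on stdin and print the ids whose state is "active".
active_namenodes() {
    awk '$2 == "active" { print $1 }'
}

# Example (nn1 and nn2 are assumed service IDs, see hdfs-site.xml for the real ones):
# for id in nn1 nn2; do
#     echo "$id $(hdfs haadmin -getServiceState "$id")"
# done | active_namenodes
```

Hard-coding the active host in hdfs.path works only until the next failover, so this check has to be repeated whenever the StandbyException comes back.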

Next, dir-hdfs.conf still failed. Comparing it with file-hdfs.conf (which worked) showed that dir-hdfs.conf specified port 9000. Removing the port made it work!

a2.sinks.k2.hdfs.path = hdfs://hadoop-senior02/flume/%Y%m%d/%H
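The notes do not say why dropping the port helped. One plausible reading, given the earlier Connection refused, is that the NameNode was not listening for RPC on 9000. A quick way to check is to compare the host and port in the sink path with what the cluster's client configuration reports. The helper `hdfs_authority` below is hypothetical; `hdfs getconf -confKey fs.defaultFS` is the standard way to print the configured default file system.

```shell
#!/bin/sh
# Print the authority part (host or host:port) of an hdfs:// URI.
# Prints nothing if the argument is not an hdfs:// URI.
hdfs_authority() {
    printf '%s\n' "$1" | sed -n 's|^hdfs://\([^/]*\).*|\1|p'
}

# Example: compare the sink path with the cluster's default file system.
# sink=$(hdfs_authority 'hdfs://hadoop-senior02:9000/flume/upload/%Y%m%d/%H')
# cluster=$(hdfs_authority "$(hdfs getconf -confKey fs.defaultFS)")
# [ "$sink" = "$cluster" ] || echo "sink uses $sink but fs.defaultFS is $cluster"
```

On an HA cluster fs.defaultFS is usually a logical nameservice rather than a host, so a mismatch there is a hint to investigate rather than proof of a wrong port.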

References: https://blog.csdn.net/dai451954706/article/details/50449436

https://blog.csdn.net/woloqun/article/details/81350323
