Sample code from the OpenACC book (Jacobi iteration), part 3

2024-10-14 21:16:34

▶ Solving the Poisson equation numerically with Jacobi iteration
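For reference, a sketch of where the update formula in the listing comes from. It assumes the equation is written as -∇²u = f with f = -4 (the constant `fij`) and uses the standard five-point stencil; h_x and w_y stand for the grid spacings `hx` and `wy`. The post does not spell out the sign convention, so treat that part as an assumption reconstructed from the constants `c1` and `c2`.

```latex
-\left(
  \frac{u_{i-1,j} - 2u_{i,j} + u_{i+1,j}}{h_x^2}
  + \frac{u_{i,j-1} - 2u_{i,j} + u_{i,j+1}}{w_y^2}
\right) = f_{i,j}
\quad\Longrightarrow\quad
u_{i,j}^{(k+1)} =
\frac{h_x^2 w_y^2 f_{i,j}
      + w_y^2\left(u_{i-1,j}^{(k)} + u_{i+1,j}^{(k)}\right)
      + h_x^2\left(u_{i,j-1}^{(k)} + u_{i,j+1}^{(k)}\right)}
     {2\left(h_x^2 + w_y^2\right)}
```

Here `c1` is h_x² w_y² and `c2` is 1 / (2 (h_x² + w_y²)). The boundary values come from u(x, y) = x² + y² (the `uval` function), and because the second difference of a quadratic is exact, that same function also solves the discrete system.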

● Use a data construct to force u0 to be copied to and from the GPU exactly once each, and u1 to be copied to the GPU exactly once

#include <stdio.h>

#include <stdlib.h>

#include <math.h>

#include <time.h>

#include <openacc.h>

#if defined(_WIN32) || defined(_WIN64)

#include <C:\Program Files\PGI\win64\19.4\include\wrap\sys\timeb.h>

#define timestruct clock_t

#define gettime(a) (*(a) = clock())

#define usec(t1,t2) (t2 - t1)

#else

#include <sys/time.h>

#define gettime(a) gettimeofday(a, NULL)

#define usec(t1,t2) (((t2).tv_sec - (t1).tv_sec) * 1000000 + (t2).tv_usec - (t1).tv_usec)

typedef struct timeval timestruct;

#endif

inline float uval(float x, float y)

{

return x * x + y * y;

}

int main()

{

const int row = , col = ;

const float height = 1.0, width = 2.0;

const float hx = height / row, wy = width / col;

const float fij = -4.0f;

const float hx2 = hx * hx, wy2 = wy * wy, c1 = hx2 * wy2, c2 = 1.0f / (2.0 * (hx2 + wy2));

const int maxIter = ;

const int colPlus = col + 1;

float *restrict u0 = (float *)malloc(sizeof(float)*(row + 1)*colPlus);

float *restrict u1 = (float *)malloc(sizeof(float)*(row + 1)*colPlus);

float *utemp = NULL;

// Initialization

for (int ix = 0; ix <= row; ix++)

{

u0[ix*colPlus + 0] = u1[ix*colPlus + 0] = uval(ix * hx, 0.0f);

u0[ix*colPlus + col] = u1[ix*colPlus + col] = uval(ix*hx, col * wy);

}

for (int jy = 0; jy <= col; jy++)

{

u0[jy] = u1[jy] = uval(0.0f, jy * wy);

u0[row*colPlus + jy] = u1[row*colPlus + jy] = uval(row*hx, jy * wy);

}

for (int ix = 1; ix < row; ix++)

{

for (int jy = 1; jy < col; jy++)

u0[ix*colPlus + jy] = 0.0f;

}

// Computation

timestruct t1, t2;

acc_init(acc_device_nvidia);

gettime(&t1);

#pragma acc data copy(u0[0:(row + 1) * colPlus]) copyin(u1[0:(row + 1) * colPlus]) // data construct outside the loop: one data region spanning all iterations (kernel launches)

{

for (int iter = ; iter < maxIter; iter++)

{

#pragma acc kernels present(u0[0:((row + 1) * colPlus)], u1[0:((row + 1) * colPlus)]) // at every kernel launch, declare that u0 and u1 are already present so they are not copied again

{

#pragma acc loop independent

for (int ix = 1; ix < row; ix++)

{

#pragma acc loop independent

for (int jy = 1; jy < col; jy++)

{

u1[ix*colPlus + jy] = (c1*fij + wy2 * (u0[(ix - 1)*colPlus + jy] + u0[(ix + 1)*colPlus + jy]) +

hx2 * (u0[ix*colPlus + jy - 1] + u0[ix*colPlus + jy + 1])) * c2;

}

}

}

utemp = u0, u0 = u1, u1 = utemp;

}

}

gettime(&t2);

long long timeElapse = usec(t1, t2);

#if defined(_WIN32) || defined(_WIN64)

printf("\nElapsed time: %13lld ms.\n", timeElapse);

#else

printf("\nElapsed time: %13lld us.\n", timeElapse);

#endif

free(u0);

free(u1);

acc_shutdown(acc_device_nvidia);

//getchar();

return 0;

}
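A small serial reference can help check the GPU result. The sketch below is not from the book: `uval_ref`, `jacobi_sweep_ref` and `fixed_point_residual` are hypothetical helper names, and the 1.0 by 2.0 domain is taken from `height` and `width` in the listing. It applies one sweep of the same update formula to a grid already filled with x² + y², which should leave the grid unchanged up to rounding.

```c
#include <stdlib.h>

/* Exact solution used for the boundary values, same formula as uval. */
float uval_ref(float x, float y)
{
    return x * x + y * y;
}

/* One serial Jacobi sweep over the interior points of a
   (row + 1) x (col + 1) grid; returns the largest |dst - src|. */
float jacobi_sweep_ref(const float *src, float *dst, int row, int col,
                       float hx, float wy, float fij)
{
    const int colPlus = col + 1;
    const float hx2 = hx * hx, wy2 = wy * wy;
    const float c1 = hx2 * wy2, c2 = 1.0f / (2.0f * (hx2 + wy2));
    float maxDiff = 0.0f;
    for (int ix = 1; ix < row; ix++)
        for (int jy = 1; jy < col; jy++)
        {
            const int k = ix * colPlus + jy;
            dst[k] = (c1 * fij + wy2 * (src[k - colPlus] + src[k + colPlus]) +
                      hx2 * (src[k - 1] + src[k + 1])) * c2;
            const float d = dst[k] > src[k] ? dst[k] - src[k] : src[k] - dst[k];
            if (d > maxDiff)
                maxDiff = d;
        }
    return maxDiff;
}

/* Fills both grids with the exact solution, applies one sweep, and returns
   the largest change, which should be at rounding-error level. */
float fixed_point_residual(int row, int col)
{
    const int colPlus = col + 1, n = (row + 1) * colPlus;
    const float hx = 1.0f / row, wy = 2.0f / col;
    float *a = malloc(sizeof(float) * n);
    float *b = malloc(sizeof(float) * n);
    for (int ix = 0; ix <= row; ix++)
        for (int jy = 0; jy <= col; jy++)
            a[ix * colPlus + jy] = b[ix * colPlus + jy] = uval_ref(ix * hx, jy * wy);
    const float r = jacobi_sweep_ref(a, b, row, col, hx, wy, -4.0f);
    free(a);
    free(b);
    return r;
}
```

Comparing a few interior points of the GPU result against `uval_ref` after enough iterations is a quick sanity check that the data clauses did not drop the final copy-out.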

● Output on Windows 10. With PGI_ACC_NOTIFY turned off, the run time drops to 67 ms

D:\Code\OpenACC>pgcc main.c -o main.exe -c99 -Minfo -acc

main:

, Memory zero idiom, loop replaced by call to __c_mzero4

, Generating copy(u0[:colPlus*(row+)])

Generating copyin(u1[:colPlus*(row+)])

, Generating present(u1[:colPlus*(row+)],u0[:colPlus*(row+)])

, Loop is parallelizable

, Loop is parallelizable

Generating Tesla code

, #pragma acc loop gang, vector(4) /* blockIdx.y threadIdx.y */

, #pragma acc loop gang, vector(32) /* blockIdx.x threadIdx.x */

, FMA (fused multiply-add) instruction(s) generated

uval:

, FMA (fused multiply-add) instruction(s) generated

D:\Code\OpenACC>main.exe

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

...

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

Elapsed time: ms.

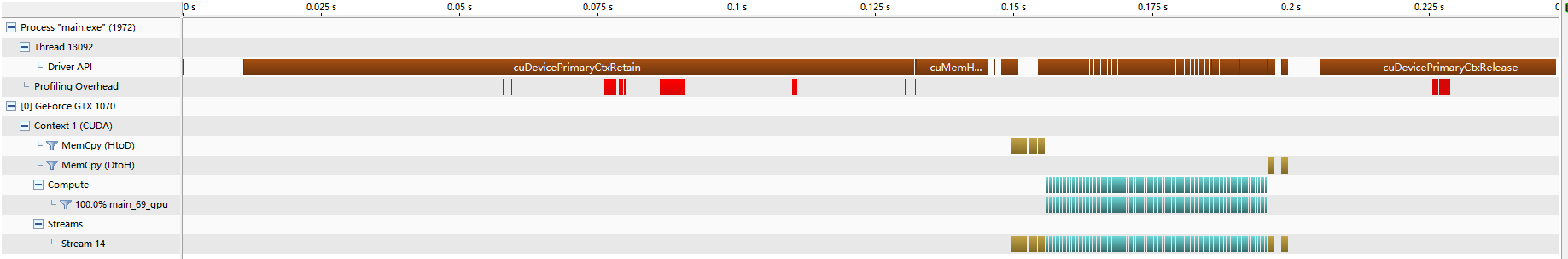

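● nvvp result: most of the time goes to device initialization. Compute time is already fairly small, and copy time is smaller still, showing up only briefly at the start and at the end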

● Output on Ubuntu, including the data reported with PGI_ACC_TIME enabled

cuan@CUAN:~$ pgcc data.c -o data.exe -c99 -Minfo -acc

main:

, Memory zero idiom, loop replaced by call to __c_mzero4

, Generating copy(u0[:colPlus*(row+)])

Generating copyin(u1[:colPlus*(row+)])

, Generating present(utemp[:],u1[:colPlus*(row+)],u0[:colPlus*(row+)])

FMA (fused multiply-add) instruction(s) generated

, Loop is parallelizable

, Loop is parallelizable

Generating Tesla code

, #pragma acc loop gang, vector(4) /* blockIdx.y threadIdx.y */

, #pragma acc loop gang, vector(32) /* blockIdx.x threadIdx.x */

uval:

, FMA (fused multiply-add) instruction(s) generated

cuan@CUAN:~$ ./data.exe

launch CUDA kernel file=/home/cuan/data.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

...

launch CUDA kernel file=/home/cuan/data.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

Elapsed time: us.

Accelerator Kernel Timing data

/home/cuan/data.c

main NVIDIA devicenum=

time(us): ,

: data region reached times

: data copyin transfers:

device time(us): total=, max=, min=, avg=,

: data copyout transfers:

device time(us): total=, max=, min= avg=

: data region reached times

: compute region reached times

: kernel launched times

grid: [32x1024] block: [32x4]

device time(us): total=, max= min= avg=

elapsed time(us): total=, max=, min= avg=

● Moving utemp one loop level further in raises runtime error 715 or 719. See https://stackoverflow.com/questions/41366915/openacc-create-data-while-running-inside-a-kernels, whose gist concerns a memory leak

D:\Code\OpenACC>main.exe

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line=69 device=0 threadid=1 num_gangs=32768 num_workers=4 vector_length=32 grid=32x1024 block=32x4

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line=74 device=0 threadid=1 num_gangs=1 num_workers=1 vector_length=1 grid=1 block=1

call to cuStreamSynchronize returned error 715: Illegal instruction

call to cuMemFreeHost returned error 715: Illegal instruction

D:\Code\OpenACC>main.exe

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid= block=

Failing in Thread:

call to cuStreamSynchronize returned error : Launch failed (often invalid pointer dereference)

Failing in Thread:

call to cuMemFreeHost returned error : Launch failed (often invalid pointer dereference)

● Adding create(utemp) to the data construct, or declaring float *utemp locally at the point where the pointers are swapped, both raise runtime error 700

D:\Code\OpenACC>main.exe

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4

launch CUDA kernel file=D:\Code\OpenACC\main.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid= block=

Failing in Thread:

call to cuStreamSynchronize returned error : Illegal address during kernel execution

Failing in Thread:

call to cuMemFreeHost returned error : Illegal address during kernel execution
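One way to sidestep the pointer swap altogether is to run the sweeps in pairs: u0 into u1, then u1 back into u0. This was not tried in the post, so the following is only a sketch under that assumption; `jacobi_sweep` and `jacobi_pairs` are hypothetical names. Without an OpenACC compiler the pragmas are ignored and the loops run serially on the host.

```c
/* One Jacobi sweep from src into dst. Both arrays must already be on the
   device when this is called inside a data region (present clause). */
void jacobi_sweep(const float *restrict src, float *restrict dst,
                  int row, int col, float hx2, float wy2, float fij)
{
    const int colPlus = col + 1, n = (row + 1) * colPlus;
    const float c1 = hx2 * wy2, c2 = 1.0f / (2.0f * (hx2 + wy2));
#pragma acc kernels present(src[0:n], dst[0:n])
    {
#pragma acc loop independent
        for (int ix = 1; ix < row; ix++)
        {
#pragma acc loop independent
            for (int jy = 1; jy < col; jy++)
            {
                const int k = ix * colPlus + jy;
                dst[k] = (c1 * fij + wy2 * (src[k - colPlus] + src[k + colPlus]) +
                          hx2 * (src[k - 1] + src[k + 1])) * c2;
            }
        }
    }
}

/* Runs 2 * pairs sweeps with no pointer swap: u0 -> u1, then u1 -> u0.
   The newest iterate always ends in u0, the array that is copied out. */
void jacobi_pairs(float *u0, float *u1, int row, int col,
                  float hx, float wy, float fij, int pairs)
{
    const int n = (row + 1) * (col + 1);
    const float hx2 = hx * hx, wy2 = wy * wy;
#pragma acc data copy(u0[0:n]) copyin(u1[0:n])
    {
        for (int p = 0; p < pairs; p++)
        {
            jacobi_sweep(u0, u1, row, col, hx2, wy2, fij);
            jacobi_sweep(u1, u0, row, col, hx2, wy2, fij);
        }
    }
}
```

The trade-off is that the iteration count is always even and any convergence test naturally sits once per pair rather than once per sweep.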

▶ Restore the error control and add a reduction clause to measure the improvement per iteration

#include <stdio.h>

#include <stdlib.h>

#include <math.h>

#include <time.h>

#include <openacc.h>

#if defined(_WIN32) || defined(_WIN64)

#include <C:\Program Files\PGI\win64\19.4\include\wrap\sys\timeb.h>

#define timestruct clock_t

#define gettime(a) (*(a) = clock())

#define usec(t1,t2) (t2 - t1)

#else

#include <sys/time.h>

#define gettime(a) gettimeofday(a, NULL)

#define usec(t1,t2) (((t2).tv_sec - (t1).tv_sec) * 1000000 + (t2).tv_usec - (t1).tv_usec)

typedef struct timeval timestruct;

#define max(x,y) ((x) > (y) ? (x) : (y))

#endif

inline float uval(float x, float y)

{

return x * x + y * y;

}

int main()

{

const int row = , col = ;

const float height = 1.0, width = 2.0;

const float hx = height / row, wy = width / col;

const float fij = -4.0f;

const float hx2 = hx * hx, wy2 = wy * wy, c1 = hx2 * wy2, c2 = 1.0f / (2.0 * (hx2 + wy2)), errControl = 0.0f;

const int maxIter = ;

const int colPlus = col + 1;

float *restrict u0 = (float *)malloc(sizeof(float)*(row + 1)*colPlus);

float *restrict u1 = (float *)malloc(sizeof(float)*(row + 1)*colPlus);

float *utemp = NULL;

// Initialization

for (int ix = 0; ix <= row; ix++)

{

u0[ix*colPlus + 0] = u1[ix*colPlus + 0] = uval(ix * hx, 0.0f);

u0[ix*colPlus + col] = u1[ix*colPlus + col] = uval(ix*hx, col * wy);

}

for (int jy = 0; jy <= col; jy++)

{

u0[jy] = u1[jy] = uval(0.0f, jy * wy);

u0[row*colPlus + jy] = u1[row*colPlus + jy] = uval(row*hx, jy * wy);

}

for (int ix = 1; ix < row; ix++)

{

for (int jy = 1; jy < col; jy++)

u0[ix*colPlus + jy] = 0.0f;

}

// Computation

timestruct t1, t2;

acc_init(acc_device_nvidia);

gettime(&t1);

#pragma acc data copy(u0[0:(row + 1) * colPlus]) copyin(u1[0:(row + 1) * colPlus])

{

for (int iter = ; iter < maxIter; iter++)

{

float uerr = 0.0f; // uerr must be declared here, before the kernels region; otherwise it is undefined once the block is left. The book gets this wrong

#pragma acc kernels present(u0[0:(row + 1) * colPlus]) present(u1[0:(row + 1) * colPlus])

{

#pragma acc loop independent reduction(max:uerr) // add a reduction clause to track the improvement

for (int ix = 1; ix < row; ix++)

{

for (int jy = 1; jy < col; jy++)

{

u1[ix*colPlus + jy] = (c1*fij + wy2 * (u0[(ix - 1)*colPlus + jy] + u0[(ix + 1)*colPlus + jy]) +

hx2 * (u0[ix*colPlus + jy - 1] + u0[ix*colPlus + jy + 1])) * c2;

uerr = max(uerr, fabs(u0[ix * colPlus + jy] - u1[ix * colPlus + jy]));

}

}

}

printf("\niter = %d, uerr = %e\n", iter, uerr);

if (uerr < errControl)

break;

utemp = u0, u0 = u1, u1 = utemp;

}

}

gettime(&t2);

long long timeElapse = usec(t1, t2);

#if defined(_WIN32) || defined(_WIN64)

printf("\nElapsed time: %13lld ms.\n", timeElapse);

#else

printf("\nElapsed time: %13lld us.\n", timeElapse);

#endif

free(u0);

free(u1);

acc_shutdown(acc_device_nvidia);

//getchar();

return 0;

}
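The reduction pattern in isolation, as a minimal sketch: `max_abs_diff` is a hypothetical helper, and the `copyin` clauses are an addition so that it also works outside a data region. The result variable is declared before the compute construct, for the reason given in the comment on `uerr` in the listing.

```c
/* Largest |a[i] - b[i]| over n elements, computed with a max reduction.
   Without an OpenACC compiler the pragma is ignored and the loop is serial. */
float max_abs_diff(const float *a, const float *b, int n)
{
    float err = 0.0f; /* lives outside the loop so it is valid afterwards */
#pragma acc parallel loop reduction(max:err) copyin(a[0:n], b[0:n])
    for (int i = 0; i < n; i++)
    {
        const float d = a[i] > b[i] ? a[i] - b[i] : b[i] - a[i];
        err = d > err ? d : err; /* same form as the max macro in the listing */
    }
    return err;
}
```

Each gang or vector lane keeps a private copy of `err`, and the runtime combines them with max at the end of the loop, which is the extra reduction kernel visible in the logs.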

● Output on Windows 10: the run takes fully twice as long as the version without error control. nvvp shows no notable change, so it is omitted

D:\Code\OpenACC>pgcc main.c -o main.exe -c99 -Minfo -acc

main:

, Memory zero idiom, loop replaced by call to __c_mzero4

, Generating copy(u0[:colPlus*(row+)])

Generating copyin(u1[:colPlus*(row+)])

, Generating present(u0[:colPlus*(row+)])

Generating implicit copy(uerr)

Generating present(u1[:colPlus*(row+)])

, Loop is parallelizable

, Loop is parallelizable

Generating Tesla code

, #pragma acc loop gang, vector(4) /* blockIdx.y threadIdx.y */

, #pragma acc loop gang, vector(32) /* blockIdx.x threadIdx.x */

Generating reduction(max:uerr) // the reduction information is new

, FMA (fused multiply-add) instruction(s) generated

uval:

, FMA (fused multiply-add) instruction(s) generated

D:\Code\OpenACC>main.exe

iter = , uerr = 2.496107e+00

...

iter = , uerr = 2.202189e-02

Elapsed time: ms.

● Output on Ubuntu

cuan@CUAN:~$ pgcc data+reduction.c -o data+reduction.exe -c99 -Minfo -acc

main:

, Memory zero idiom, loop replaced by call to __c_mzero4

, Generating copyin(u1[:colPlus*(row+)])

Generating copy(u0[:colPlus*(row+)])

, FMA (fused multiply-add) instruction(s) generated

, Generating present(u0[:colPlus*(row+)])

Generating implicit copy(uerr)

Generating present(u1[:colPlus*(row+)])

, Loop is parallelizable

, Loop is parallelizable

Generating Tesla code

, #pragma acc loop gang, vector(4) /* blockIdx.y threadIdx.y */

, #pragma acc loop gang, vector(32) /* blockIdx.x threadIdx.x */

Generating reduction(max:uerr)

uval:

, FMA (fused multiply-add) instruction(s) generated

cuan@CUAN:~$ ./data+reduction.exe

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4 shared memory=

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid= block= shared memory=

iter = , uerr = 2.496107e+00

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4 shared memory=

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid= block= shared memory=

...

iter = , uerr = 2.214956e-02

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid=32x1024 block=32x4 shared memory=

launch CUDA kernel file=/home/cuan/data+reduction.c function=main line= device= threadid= num_gangs= num_workers= vector_length= grid= block= shared memory=

iter = , uerr = 2.202189e-02

Elapsed time: us.

Accelerator Kernel Timing data

/home/cuan/data+reduction.c

main NVIDIA devicenum=

time(us): ,

: data region reached times

: data copyin transfers:

device time(us): total=, max=, min=, avg=,

: data copyout transfers:

device time(us): total=, max=, min= avg=

: compute region reached times

: kernel launched times

grid: [32x1024] block: [32x4]

device time(us): total=, max= min= avg=

elapsed time(us): total=, max=, min= avg=

: reduction kernel launched times

grid: [] block: []

device time(us): total=, max= min= avg=

elapsed time(us): total=, max=, min= avg=

: data region reached times

: data copyin transfers:

device time(us): total= max= min= avg=

: data copyout transfers:

device time(us): total= max= min= avg=

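▶ I also tried adding a collapse(2) clause to the loop directive of the computation, which merges the two smaller loops into one larger loop. The effect was not significant, so the results are omitted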
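For reference, a sketch of what a collapse(2) variant might look like. The exact code tried here is not shown in the post, so the function name `jacobi_sweep_collapsed` and the use of `parallel loop` with explicit data clauses are assumptions. collapse(2) requires the two loops to be tightly nested, with nothing between the two for statements.

```c
/* One Jacobi sweep from src into dst, with both loops collapsed into a
   single iteration space of (row - 1) * (col - 1) points.
   Without an OpenACC compiler the pragma is ignored and the loops are serial. */
void jacobi_sweep_collapsed(const float *restrict src, float *restrict dst,
                            int row, int col, float hx2, float wy2, float fij)
{
    const int colPlus = col + 1, n = (row + 1) * colPlus;
    const float c1 = hx2 * wy2, c2 = 1.0f / (2.0f * (hx2 + wy2));
#pragma acc parallel loop collapse(2) copyin(src[0:n]) copy(dst[0:n])
    for (int ix = 1; ix < row; ix++)
        for (int jy = 1; jy < col; jy++)
        {
            const int k = ix * colPlus + jy;
            dst[k] = (c1 * fij + wy2 * (src[k - colPlus] + src[k + colPlus]) +
                      hx2 * (src[k - 1] + src[k + 1])) * c2;
        }
}
```

Since the compiler already mapped the two loops onto a 2D grid of blocks (grid 32x1024, block 32x4 in the logs), it is plausible that collapsing them changes little, which matches the observation here.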
