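Deploying and Using open-local

Introduction to Open-Local

Open-Local is a local disk management system open-sourced by Alibaba. It is made up of several components and aims to fill the gap in Kubernetes' local storage capabilities.

Open-Local consists of four types of components:

• Scheduler-Extender: an extension to the Kubernetes scheduler, implemented through the Extender mechanism, that adds scheduling algorithms for local storage

• CSI: implements local disk management according to the CSI (Container Storage Interface) standard

• Agent: runs on every node in the cluster, initializes storage devices according to the configuration list, and reports local storage device information so that the Scheduler-Extender can make scheduling decisions

• Controller: fetches the cluster's storage initialization configuration and sends the detailed configuration list to the Agent running on each node

Architecture diagram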

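Deploying Open-Local

Method 1: Deploy Kubernetes with ACK Distro, which ships with open-local (Alibaba's customized build)

Prerequisites (the preparation steps themselves are omitted):

A Red Hat- or Debian-based Linux distribution (CentOS 7.9 is used in this walkthrough)

LVM2+

docker-ce version: 19.03.15

At least one free block device in the cluster for testing

Ack-distro

Ack-distro is a complete Kubernetes distribution. With sealer, Alibaba's open-source tool for packaging and delivering applications, it can be delivered to offline environments simply and quickly, which helps users manage their clusters more easily and with more agility. Ack-distro installs open-local by default during deployment.

Install sealer

(Prerequisite: docker-ce version 19.03.15)

Upload the archive, extract it, and copy the binary to /usr/bin (download the archive from GitHub or elsewhere online)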

tar -zxvf sealer-latest-linux-amd64.tar.gz

cp sealer /usr/bin/

Upload ackdistro.tar, the image package that sealer needs to deploy the Kubernetes cluster (download it from GitHub or elsewhere online)

sealer load -i ackdistro.tar

Deploy Kubernetes

sealer run ack-agility-registry.cn-shanghai.cr.aliyuncs.com/ecp_builder/ackdistro:v1-20-4-ack-5 -m 10.0.101.89 -p asdfasdf..

-m is followed by the IP of the Kubernetes master

-p is followed by the root password of the master

(If it reports a time synchronization error, you can use:

sealer run ack-agility-registry.cn-shanghai.cr.aliyuncs.com/ecp_builder/ackdistro:v1-20-4-ack-5 -m 10.0.101.89 -p asdfasdf.. --env IgnoreErrors="OS;TimeSyncService")
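The two sealer invocations above differ only in the optional --env setting. As a minimal sketch, the hypothetical helper below (build_sealer_cmd is not part of sealer) assembles the command line from its parts and prints it for review instead of running it. It uses only the flags that already appear in this article.

```shell
#!/bin/sh
# Hypothetical helper: prints (does not run) a sealer command line.
# Only the flags already used in this article appear here: -m, -p, --env.
build_sealer_cmd() {
    image=$1; master_ip=$2; password=$3; ignore=$4
    cmd="sealer run $image -m $master_ip -p $password"
    # Append the IgnoreErrors setting only when one was requested.
    if [ -n "$ignore" ]; then
        cmd="$cmd --env IgnoreErrors=\"$ignore\""
    fi
    printf '%s\n' "$cmd"
}

IMAGE=ack-agility-registry.cn-shanghai.cr.aliyuncs.com/ecp_builder/ackdistro:v1-20-4-ack-5
build_sealer_cmd "$IMAGE" 10.0.101.89 'asdfasdf..' ''
build_sealer_cmd "$IMAGE" 10.0.101.89 'asdfasdf..' 'OS;TimeSyncService'
```

Printing first makes it easy to check the master IP and password before anything touches the hosts.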

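Once it finishes, you can check the version

Check open-local

Supported storage drivers

Using open-local

Add a VG

First create a partition and a PV, then create the VG on top of it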

fdisk /dev/vdb

fdisk -l

pvcreate /dev/vdb1

pvscan

vgcreate -s 32M open-local-pool-1 /dev/vdb1

vgdisplay
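The -s 32M option in the vgcreate command sets the physical extent size to 32 MiB, and LVM allocates logical volumes in whole extents. As a small worked example (extents_for is a hypothetical helper, not an LVM tool), the arithmetic below shows how many extents a given request occupies in such a VG.

```shell
# Round a requested size (in MiB) up to whole physical extents of pe_mib MiB.
extents_for() {
    size_mib=$1; pe_mib=$2
    echo $(( (size_mib + pe_mib - 1) / pe_mib ))
}

extents_for 5120 32    # a 5 GiB request
extents_for 10240 32   # a 10 GiB request
```

With 32 MiB extents, a 5 GiB volume takes 160 extents and a 10 GiB volume takes 320, which can be compared against the free extent count that vgdisplay reports.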

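Storage initialization configuration

(If the VG you created above is already named open-local-pool-1, you can skip this step)

First run kubectl get nlsc to look up the name

Edit the nlsc:

kubectl edit nlsc yoda

In the file, change open-local-pool-[0-9]+ so that it matches the name of the VG you created. Keep the plus sign (+).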
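The entry open-local-pool-[0-9]+ is a regular expression, which is why a VG named open-local-pool-1 needs no change. The sketch below checks a few candidate names against the pattern with grep. How open-local itself anchors the pattern is not shown in this article, so the whole-name match (grep -x) is an assumption, and vg_matches is a hypothetical helper.

```shell
# Hypothetical helper: succeeds when a VG name matches the pattern as a whole.
vg_matches() {
    printf '%s\n' "$1" | grep -Eqx -- "$2"
}

PATTERN='open-local-pool-[0-9]+'
for vg in open-local-pool-1 open-local-pool-12 open-local-pool- my-data-vg; do
    if vg_matches "$vg" "$PATTERN"; then
        echo "$vg: matches"
    else
        echo "$vg: does not match"
    fi
done
```

The plus sign requires at least one digit after the final hyphen, so a name with no trailing number is rejected.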

Create a StatefulSet that uses one of the existing storage classes

[root@laijx-k8s-test1 tmp]# cat sts-nginx.yaml

apiVersion: v1
kind: Service
metadata:
  name: nginx-lvm
  labels:
    app: nginx-lvm
spec:
  ports:
  - port: 80
    name: web
  clusterIP: None
  selector:
    app: nginx-lvm
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nginx-lvm
spec:
  selector:
    matchLabels:
      app: nginx-lvm
  podManagementPolicy: Parallel
  serviceName: "nginx-lvm"
  replicas: 1
  volumeClaimTemplates:
  - metadata:
      name: html
    spec:
      accessModes:
      - ReadWriteOnce
      storageClassName: yoda-lvm-xfs
      resources:
        requests:
          storage: 5Gi
  template:
    metadata:
      labels:
        app: nginx-lvm
    spec:
      tolerations:
      - key: node-role.kubernetes.io/master
        operator: Exists
        effect: NoSchedule
      containers:
      - name: nginx
        image: 10.0.103.102/laijxtest/nginx:v1.23
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - mountPath: "/data"
          name: html
        command:
        - sh
        - "-c"
        - |
          while true; do
            echo "huizhi testing";
            echo "yes ">>/data/yes.txt;
            sleep 120s
          done;

Start it

kubectl apply -f sts-nginx.yaml

You can now see that the PV and PVC have been created

Expansion

kubectl patch pvc html-nginx-lvm-0 -p '{"spec":{"resources":{"requests":{"storage":"10Gi"}}}}'

This expands the volume from 5G to 10G

The PVC takes about two minutes to show the new size of 10G
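The patch body in the expansion command is plain JSON with the new size in one place. As a minimal sketch, the hypothetical helper resize_patch below builds that body for any size, and the surrounding lines print the kubectl command for review rather than executing it.

```shell
# Hypothetical helper: build the JSON patch body for a new PVC size.
resize_patch() {
    printf '{"spec":{"resources":{"requests":{"storage":"%s"}}}}\n' "$1"
}

PVC=html-nginx-lvm-0
NEW_SIZE=10Gi

# Print the command instead of running it, so it can be reviewed first.
echo "kubectl patch pvc $PVC -p '$(resize_patch "$NEW_SIZE")'"

# To follow the change afterwards, the reported capacity can be polled with:
#   kubectl get pvc html-nginx-lvm-0 -o jsonpath='{.status.capacity.storage}'
```

Polling the reported capacity is one way to tell when the delay mentioned above has passed.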

Enter the container to check the mount

Delete the pod to verify persistent storage

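Method 2: Install open-local with Helm

Prerequisites (the detailed preparation steps are omitted):

A Red Hat- or Debian-based Linux distribution (CentOS 7.9 is used in this walkthrough)

Kubernetes v1.20+ (an older cluster may need to be upgraded first)

LVM2+

Helm 3.0+

At least one free block device in the cluster for testing

Servers: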

Master 10.0.101.91

Node1 10.0.101.10

Node2 10.0.101.92

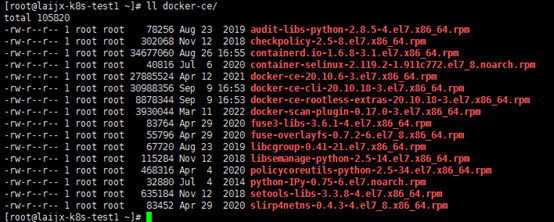

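First install docker-ce-20.10.6 on all three servers (my environment is an isolated intranet; with Internet access this is much simpler, just use yum directly)

Upload the installation packages and install the prepared RPMs with yum

yum localinstall -y ./*

Edit the configuration file

vim /etc/docker/daemon.json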

{
  "storage-driver": "overlay2",
  "storage-opts": [
    "overlay2.override_kernel_check=true"
  ],
  "insecure-registries": ["10.0.103.102"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {
    "max-size": "100m",
    "max-file": "3"
  }
}

systemctl start docker

systemctl enable docker

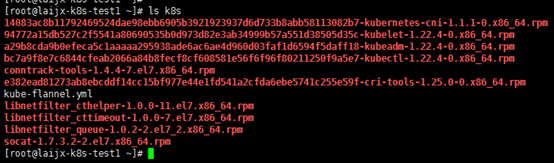

Install Kubernetes 1.22.4

Master:

Upload the RPM archive, extract it, and install

unzip k8s-1.22.4.rpm.zip

yum localinstall -y ./*

echo "net.bridge.bridge-nf-call-iptables = 1" > /etc/sysctl.d/k8s.conf

echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.d/k8s.conf

sysctl -p /etc/sysctl.d/k8s.conf
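The two echo commands above can be collapsed into a single here-document. The sketch below does that with a hypothetical helper, write_k8s_sysctl, which takes the target directory as a parameter so it can be tried against a scratch directory first. On many systems the net.bridge.* keys only exist once the br_netfilter kernel module is loaded, so the commented real-node steps include modprobe; treat that as an assumption to verify on your own kernel.

```shell
# Hypothetical helper: write both bridge settings in one step.
write_k8s_sysctl() {
    conf_dir=$1
    cat > "$conf_dir/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
}

# Dry run against a temporary directory:
scratch=$(mktemp -d)
write_k8s_sysctl "$scratch"
cat "$scratch/k8s.conf"

# On a real node (as root) the equivalent would be:
#   modprobe br_netfilter
#   write_k8s_sysctl /etc/sysctl.d
#   sysctl -p /etc/sysctl.d/k8s.conf
```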

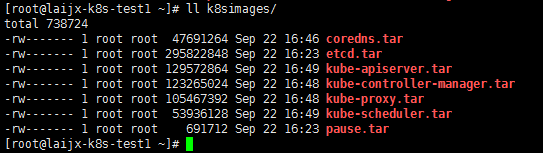

Import the image packages needed for Kubernetes initialization and tag them (with Internet access you can skip this step)

unzip k8simages.zip

docker load < coredns.tar

docker images

docker load < etcd.tar

docker load < pause.tar

docker load < kube-apiserver.tar

docker load < kube-controller-manager.tar

docker load < kube-proxy.tar

docker load < kube-scheduler.tar

docker tag 8a5cc299272d k8s.gcr.io/kube-apiserver:v1.22.4

docker tag 0ce02f92d3e4 k8s.gcr.io/kube-controller-manager:v1.22.4

docker tag 721ba97f54a6 k8s.gcr.io/kube-scheduler:v1.22.4

docker tag edeff87e4802 k8s.gcr.io/kube-proxy:v1.22.4

docker tag 8d147537fb7d k8s.gcr.io/coredns/coredns:v1.8.4

docker tag 004811815584 k8s.gcr.io/etcd:3.5.0-0

docker tag ed210e3e4a5b k8s.gcr.io/pause:3.5
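The image IDs in the docker tag commands above belong to the tarballs used in this walkthrough; images from a different build will carry different IDs, which docker images will show. As a minimal sketch, the hypothetical helper print_tag_cmds below keeps the ID-to-name mapping in one table and prints the tag commands for review.

```shell
# Hypothetical helper: reads "IMAGE_ID TARGET" pairs on stdin and prints the
# matching docker tag commands. Pipe the output to sh to actually run them.
print_tag_cmds() {
    while read -r id target; do
        # Skip blank lines and comment lines.
        case $id in ''|'#'*) continue ;; esac
        printf 'docker tag %s %s\n' "$id" "$target"
    done
}

print_tag_cmds <<'EOF'
# IDs below are the ones from this article; check yours with `docker images`.
8a5cc299272d k8s.gcr.io/kube-apiserver:v1.22.4
0ce02f92d3e4 k8s.gcr.io/kube-controller-manager:v1.22.4
721ba97f54a6 k8s.gcr.io/kube-scheduler:v1.22.4
edeff87e4802 k8s.gcr.io/kube-proxy:v1.22.4
8d147537fb7d k8s.gcr.io/coredns/coredns:v1.8.4
004811815584 k8s.gcr.io/etcd:3.5.0-0
ed210e3e4a5b k8s.gcr.io/pause:3.5
EOF
```

Keeping the pairs in a table means only the left-hand column has to change when the tarballs are rebuilt.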

systemctl start kubelet

systemctl enable kubelet

Start the initialization

kubeadm init --kubernetes-version=v1.22.4 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12

After initialization completes, run: export KUBECONFIG=/etc/kubernetes/admin.conf

Record the command for joining the cluster; it is needed later

kubeadm join 10.0.101.91:6443 --token ffvff0.bf7e0urhj0sys1ka --discovery-token-ca-cert-hash sha256:d7488bc4f02d96c359e322bef5097ff7d59d8ee31e6560011594f37bc0c0e29f
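The join command carries two values worth keeping: the bootstrap token and the CA certificate hash. As a minimal sketch, the hypothetical helpers below extract both from a saved join command with sed. Bootstrap tokens expire (24 hours by default), so the closing comment shows the kubeadm command that prints a fresh join line.

```shell
# Hypothetical helpers: pull the token and CA hash out of a saved join command.
join_token() { printf '%s\n' "$1" | sed -n 's/.*--token \([^ ]*\).*/\1/p'; }
join_hash()  { printf '%s\n' "$1" | sed -n 's/.*--discovery-token-ca-cert-hash \([^ ]*\).*/\1/p'; }

JOIN='kubeadm join 10.0.101.91:6443 --token ffvff0.bf7e0urhj0sys1ka --discovery-token-ca-cert-hash sha256:d7488bc4f02d96c359e322bef5097ff7d59d8ee31e6560011594f37bc0c0e29f'
join_token "$JOIN"
join_hash "$JOIN"

# If the token has expired, a fresh join command can be printed on the master with:
#   kubeadm token create --print-join-command
```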

On the two worker nodes:

Upload the RPM archive, extract it, and install

unzip k8s-1.22.4.rpm.zip

yum localinstall -y ./*

echo "net.bridge.bridge-nf-call-iptables = 1" > /etc/sysctl.d/k8s.conf

echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.d/k8s.conf

sysctl -p /etc/sysctl.d/k8s.conf

systemctl start kubelet

systemctl enable kubelet

Join the cluster

kubeadm join 10.0.101.91:6443 --token ffvff0.bf7e0urhj0sys1ka --discovery-token-ca-cert-hash sha256:d7488bc4f02d96c359e322bef5097ff7d59d8ee31e6560011594f37bc0c0e29f

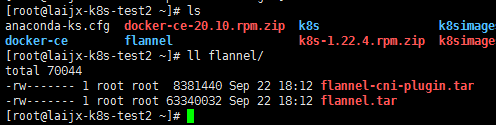

Install flannel

On all three servers:

Import the flannel image packages

unzip flannel.zip

cd flannel/

docker load < flannel.tar

docker load < flannel-cni-plugin.tar

On the master:

kubectl apply -f kube-flannel.yml

[root@laijx-k8s-test1 ~]# cat k8s/kube-flannel.yml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.19.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.19.2 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

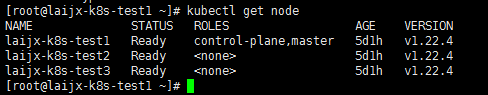

Once flannel is deployed successfully, the cluster nodes report a normal status

Deploy an nginx to test it

kubectl apply -f .

The pod deploys normally.

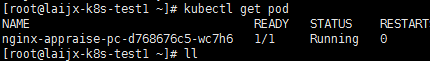

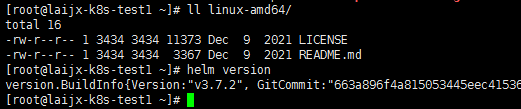

Deploy Helm 3.7

tar -zxvf helm-v3.7.2-linux-amd64.tar.gz

cd linux-amd64/

mv helm /usr/bin/

helm version

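Install open-local with Helm

Prepare the YAML files

Installing open-local with Helm requires a set of YAML files, which I have already downloaded from GitHub

open-local-main.zip

Because we are on an isolated intranet, the image pull policy in the YAML files must also be changed from Always to IfNotPresent, and the images must be prepared in advance.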

grep -C 2 -r image `find ./ -name "*.yaml"`

sed -i 's/imagePullPolicy:\ Always/imagePullPolicy:\ IfNotPresent/g' ./templates/*
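Before running the substitution on the real chart, it can be rehearsed on a throwaway file. The sketch below applies the same replacement, written without the backslash-escaped spaces, to a made-up template (demo.yaml is not part of the open-local chart) and then lists the resulting pull policies. It assumes GNU sed, where -i takes no suffix argument.

```shell
# Sketch: rehearse the pull-policy substitution on a scratch copy.
workdir=$(mktemp -d)
mkdir -p "$workdir/templates"
cat > "$workdir/templates/demo.yaml" <<'EOF'
containers:
- name: demo
  image: example/demo:latest
  imagePullPolicy: Always
EOF

# Same replacement as in the article, applied to every file in templates/.
sed -i 's/imagePullPolicy: Always/imagePullPolicy: IfNotPresent/g' "$workdir"/templates/*

# List the pull policies that remain after the edit.
grep -r 'imagePullPolicy' "$workdir/templates"
```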

Prepare the images

Before installing open-local with Helm, the images it uses must be imported into Docker manually

unzip open-local-images.zip

Import the images

for i in `ls` ;do docker load < $i;done
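The loop above iterates over the output of ls, which breaks on file names containing spaces and also feeds non-image files to docker load. A more defensive variant, sketched below with a hypothetical helper load_images, globs for *.tar only and quotes each path. DOCKER defaults to "echo docker" here so the sketch only prints what it would run.

```shell
# Hypothetical helper: load every *.tar file in a directory.
# DOCKER defaults to "echo docker" so the sketch only prints the commands;
# set DOCKER=docker to perform the loads.
DOCKER=${DOCKER:-echo docker}

load_images() {
    dir=$1
    for f in "$dir"/*.tar; do
        [ -e "$f" ] || continue   # the glob matched nothing
        $DOCKER load -i "$f"
    done
}

load_images .
```

docker load -i reads the archive from a file, which is equivalent to the input redirection used in the original loop.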

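Start installing open-local

cd open-local-main

helm install open-local ./helm

Watch the progress

kubectl get pod -A

After a few minutes, the open-local components are deployed. Running kubectl get sc then shows the storage driver types currently supported

Using open-local

Create a sample PV and PVC for reference

Following the official open-local documentation, create a StatefulSet, which creates a PV and a PVC at the same time: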

[root@laijx-k8s-test1 tmp]# cat sts-nginx.yaml

apiVersion: v1
kind: Service
metadata:
  name: nginx-lvm
  labels:
    app: nginx-lvm
spec:
  ports:
  - port: 80
    name: web
  clusterIP: None
  selector:
    app: nginx-lvm
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nginx-lvm
spec:
  selector:
    matchLabels:
      app: nginx-lvm
  podManagementPolicy: Parallel
  serviceName: "nginx-lvm"
  replicas: 1
  volumeClaimTemplates:
  - metadata:
      name: html
    spec:
      accessModes:
      - ReadWriteOnce
      storageClassName: yoda-lvm-xfs
      resources:
        requests:
          storage: 5Gi
  template:
    metadata:
      labels:
        app: nginx-lvm
    spec:
      tolerations:
      - key: node-role.kubernetes.io/master
        operator: Exists
        effect: NoSchedule
      containers:
      - name: nginx
        image: 10.0.103.102/laijxtest/nginx:v1.23
        imagePullPolicy: IfNotPresent
        volumeMounts:
        - mountPath: "/data"
          name: html
        command:
        - sh
        - "-c"
        - |
          while true; do
            echo "huizhi testing";
            echo "yes ">>/data/yes.txt;
            sleep 120s
          done;

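Modify the YAML of the existing PV and PVC to create the new PV and PVC we actually want

(Alternatively, you can simply delete this workload, and other workloads can then use the sample PV and PVC)

kubectl get pv -o yaml

Create a new PV file pv01.yaml, comment out uid and creationTimestamp, and change the name: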

[root@laijx-k8s-test1 k8s]# cat pv01.yaml

apiVersion: v1
items:
- apiVersion: v1
  kind: PersistentVolume
  metadata:
    annotations:
      pv.kubernetes.io/provisioned-by: local.csi.aliyun.com
    # creationTimestamp: "2022-10-08T01:37:50Z"
    finalizers:
    - kubernetes.io/pv-protection
    name: pv01
    resourceVersion: "1941146"
    # uid: f91ffa5b-e4dc-42ed-8855-f6420b0979b4
  spec:
    accessModes:
    - ReadWriteOnce
    capacity:
      storage: 5Gi
    claimRef:
    # apiVersion: v1
    # kind: PersistentVolumeClaim
    # name: pvc01
    # namespace: default
    # resourceVersion: "1941140"
    # uid: f8bbc82b-a781-4116-9bdd-59346b8d4240
    csi:
      driver: local.csi.aliyun.com
      fsType: ext4
      volumeAttributes:
        csi.storage.k8s.io/pv/name: pv01
        csi.storage.k8s.io/pvc/name: pvc01
        csi.storage.k8s.io/pvc/namespace: default
        storage.kubernetes.io/csiProvisionerIdentity: 1664262928261-8081-local.csi.aliyun.com
        vgName: open-local-pool-0
        volume.kubernetes.io/selected-node: laijx-k8s-test2
        volumeType: LVM
      volumeHandle: pv01
    nodeAffinity:
      required:
        nodeSelectorTerms:
        - matchExpressions:
          - key: kubernetes.io/hostname
            operator: In
            values:
            - laijx-k8s-test2
    persistentVolumeReclaimPolicy: Delete
    storageClassName: open-local-lvm
    volumeMode: Filesystem
  status:
    phase: Bound
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""

claimRef: this field must be commented out. Otherwise the PV is treated as already bound to a PVC, which causes the error Bound claim has lost its PersistentVolume
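Commenting out uid and creationTimestamp by hand, as done for pv01.yaml, can also be scripted. The sketch below uses a hypothetical helper, comment_server_fields, that reads an exported manifest on stdin and comments out those two keys while keeping their indentation. The claimRef block and the name still have to be edited manually.

```shell
# Hypothetical helper: comment out uid and creationTimestamp lines in an
# exported manifest read from stdin, keeping their indentation.
comment_server_fields() {
    sed -E 's/^([[:space:]]*)(uid|creationTimestamp):/\1# \2:/'
}

comment_server_fields <<'EOF'
metadata:
  creationTimestamp: "2022-10-08T01:37:50Z"
  name: pv01
  uid: f91ffa5b-e4dc-42ed-8855-f6420b0979b4
EOF
```

In practice the input would come from something like kubectl get pv -o yaml redirected to a file, with the result saved as the new manifest.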

Create a new PVC file pvc01.yaml, comment out uid and creationTimestamp, and change the name:

The modified file looks like this:

[root@laijx-k8s-test1 k8s]# cat pvc01.yaml

apiVersion: v1
items:
- apiVersion: v1
  kind: PersistentVolumeClaim
  metadata:
    annotations:
      pv.kubernetes.io/bind-completed: "yes"
      pv.kubernetes.io/bound-by-controller: "yes"
      volume.beta.kubernetes.io/storage-provisioner: local.csi.aliyun.com
      volume.kubernetes.io/selected-node: laijx-k8s-test2
    # creationTimestamp: "2022-10-08T01:37:49Z"
    finalizers:
    - kubernetes.io/pvc-protection
    labels:
      app: pv01
    name: pvc01
    namespace: default
    resourceVersion: "1941148"
    # uid: f8bbc82b-a781-4116-9bdd-59346b8d4240
  spec:
    accessModes:
    - ReadWriteOnce
    resources:
      requests:
        storage: 5Gi
    storageClassName: open-local-lvm
    volumeMode: Filesystem
    volumeName: pv01
  status:
    accessModes:
    - ReadWriteOnce
    capacity:
      storage: 5Gi
    phase: Bound
kind: List
metadata:
  resourceVersion: ""
  selfLink: ""

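Create the PV and PVC

kubectl apply -f pvc01.yaml -f pv01.yaml

If storageClassName is set to open-local-lvm, the filesystem created is ext4;

if it is set to open-local-lvm-xfs, the filesystem created is xfs

Using the PV and PVC

Use the newly created PVC in a workload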

[root@laijx-k8s-test1 k8s]# cat tomcat7-lvm.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat7-2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: tomcat7-2
  template:
    metadata:
      labels:
        app: tomcat7-2
    spec:
      containers:
      - name: tomcat7-2
        image: 10.0.103.102/laijxtest/tomcat7:v1
        ports:
        - containerPort: 80
        command:
        - sh
        - "-c"
        - |
          while true; do
            echo "laijx testing";
            echo "yes ">>/tmp/yes.txt;
            sleep 120s
          done;
        volumeMounts:
        - mountPath: /data/
          name: tomcat7-lvm
      volumes:
      - name: tomcat7-lvm
        persistentVolumeClaim:
          claimName: pvc01
---
apiVersion: v1
kind: Service
metadata:
  name: tomcat7-2
spec:
  ports:
  - name: tomcat7-2-svc
    port: 8080
    targetPort: 8080
    nodePort: 31124
  selector:
    app: tomcat7-2
  type: NodePort

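Start the workload:

kubectl apply -f tomcat7-lvm.yaml

Inside the container you can see that the /data path has been mounted successfully

Verify persistence:

Inside the container, run cp /tmp/yes.txt /data/, then restart the pod and check whether the file is still there:

Persistence works as expected.

Expand the PVC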

kubectl patch pvc html-nginx-lvm-0 -p '{"spec":{"resources":{"requests":{"storage":"10Gi"}}}}'

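References:

https://github.com/alibaba/open-local/tree/main/docs

https://developer.aliyun.com/article/790208 Open-Local: a cloud-native local disk management system

https://segmentfault.com/a/1190000042291703 How to get the most out of container local storage with open-local | OpenAnolis technology

https://www.luozhiyun.com/archives/335 Kubernetes in depth: persistent volumes (PV), PVCs, and a source code analysis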
