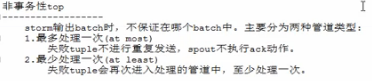

Trident学习笔记(二)

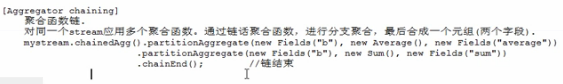

aggregator

------------------

聚合动作;聚合操作可以是基于batch、stream、partiton

[聚合方式-分区聚合]

partitionAggregate

分区聚合;基于分区进行聚合运算;作用于分区而不是batch。

mystream.partitionAggregate(new Fields("x"), new Count(), new Fields("count"));

[聚合方式-batch聚合]

aggregate

批次聚合;先将同一batch的所有分区的tuple进行global再分区,将其汇集到一个分区中,再进行聚合运算。

.aggregate(new Fields("a"), new Count(), new Fields("count")); // 批次聚合

聚合函数

[ReducerAggregator]

init();

reduce();

Aggregator

CombinerAggregator

import org.apache.storm.trident.operation.ReducerAggregator;

import org.apache.storm.trident.tuple.TridentTuple; /**

* 自定义sum聚合函数

*/

public class SumReducerAggregator implements ReducerAggregator<Integer> { private static final long serialVersionUID = 1L; @Override

public Integer init() {

return 0;

} @Override

public Integer reduce(Integer curr, TridentTuple tuple) {

return curr + tuple.getInteger(0) + tuple.getInteger(1);

} }

分区聚合

import net.baiqu.shop.report.trident.demo01.PrintFunction;

import org.apache.storm.Config;

import org.apache.storm.LocalCluster;

import org.apache.storm.trident.Stream;

import org.apache.storm.trident.TridentTopology;

import org.apache.storm.trident.testing.FixedBatchSpout;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Values; public class TridentTopologyApp4 { public static void main(String[] args) {

// 创建topology

TridentTopology topology = new TridentTopology(); // 创建spout

FixedBatchSpout testSpout = new FixedBatchSpout(new Fields("a", "b"), 4,

new Values(1, 2),

new Values(2, 3),

new Values(3, 4),

new Values(4, 5)); // 创建流

Stream stream = topology.newStream("testSpout", testSpout);

stream.partitionAggregate(new Fields("a", "b"), new SumReducerAggregator(), new Fields("sum"))

.shuffle().each(new Fields("sum"), new PrintFunction(), new Fields("result")); // 本地提交

LocalCluster cluster = new LocalCluster();

cluster.submitTopology("TridentDemo4", new Config(), topology.build());

} }

[ReducerAggregator]

init();

reduce();

public interface ReducerAggregator<T> extends Serializable {

T init();

T reduce(T curr, TridentTuple tuple);

}

[Aggregator]

描述同ReducerAggregator.

public interface Aggregator<T> extends Operation {

// 开始聚合之间调用,主要用于保存状态。共下面的两个方法使用

T init(Object batchId, TridentCollector collector);

// 迭代batch的每个tuple, 处理每个tuple后更新state的状态。

void aggreate(T val, TridentTuple tuple, TridentCollector collector);

// 所有tuple处理完成后调用,返回单个tuple给每个batch。

void complete(T val, TridentCollector collector);

}

CombinerAggregator

import org.apache.storm.trident.operation.BaseAggregator;

import org.apache.storm.trident.operation.TridentCollector;

import org.apache.storm.trident.tuple.TridentTuple;

import org.apache.storm.tuple.Values; import java.io.Serializable; /**

* 批次求和函数

*/

public class SumAggregator extends BaseAggregator<SumAggregator.State> { private static final long serialVersionUID = 1L; static class State implements Serializable {

private static final long serialVersionUID = 1L;

int sum = 0;

} @Override

public SumAggregator.State init(Object batchId, TridentCollector collector) {

return new State();

} @Override

public void aggregate(SumAggregator.State state, TridentTuple tuple, TridentCollector collector) {

state.sum = state.sum + tuple.getInteger(0) + tuple.getInteger(1);

} @Override

public void complete(SumAggregator.State state, TridentCollector collector) {

collector.emit(new Values(state.sum));

} }

批次聚合

package net.baiqu.shop.report.trident.demo04; import net.baiqu.shop.report.trident.demo01.PrintFunction;

import org.apache.storm.Config;

import org.apache.storm.LocalCluster;

import org.apache.storm.trident.Stream;

import org.apache.storm.trident.TridentTopology;

import org.apache.storm.trident.testing.FixedBatchSpout;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Values; public class TridentTopologyApp4 { public static void main(String[] args) {

// 创建topology

TridentTopology topology = new TridentTopology(); // 创建spout

FixedBatchSpout testSpout = new FixedBatchSpout(new Fields("a", "b"), 4,

new Values(1, 2),

new Values(2, 3),

new Values(3, 4),

new Values(4, 5)); // 创建流

Stream stream = topology.newStream("testSpout", testSpout);

stream.aggregate(new Fields("a", "b"), new SumAggregator(), new Fields("sum"))

.shuffle().each(new Fields("sum"), new PrintFunction(), new Fields("result")); // 本地提交

LocalCluster cluster = new LocalCluster();

cluster.submitTopology("TridentDemo4", new Config(), topology.build());

} }

运行结果

PrintFunction:

PrintFunction:

PrintFunction:

......

平均值批次聚合函数

import org.apache.storm.trident.operation.BaseAggregator;

import org.apache.storm.trident.operation.TridentCollector;

import org.apache.storm.trident.tuple.TridentTuple;

import org.apache.storm.tuple.Values; import java.io.Serializable; /**

* 批次求平均值函数

*/

public class AvgAggregator extends BaseAggregator<AvgAggregator.State> { private static final long serialVersionUID = 1L; static class State implements Serializable {

private static final long serialVersionUID = 1L;

// 元组值的总和

float sum = 0;

// 元组个数

int count = 0;

} /**

* 初始化状态

*/

@Override

public AvgAggregator.State init(Object batchId, TridentCollector collector) {

return new State();

} /**

* 在state变量中维护状态

*/

@Override

public void aggregate(AvgAggregator.State state, TridentTuple tuple, TridentCollector collector) {

state.count = state.count + 2;

state.sum = state.sum + tuple.getInteger(0) + tuple.getInteger(1);

} /**

* 处理完成所有元组之后,返回一个具有单个值的tuple

*/

@Override

public void complete(AvgAggregator.State state, TridentCollector collector) {

collector.emit(new Values(state.sum / state.count));

} }

批次聚合求平均值

import net.baiqu.shop.report.trident.demo01.PrintFunction;

import org.apache.storm.Config;

import org.apache.storm.LocalCluster;

import org.apache.storm.trident.Stream;

import org.apache.storm.trident.TridentTopology;

import org.apache.storm.trident.testing.FixedBatchSpout;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Values; public class TridentTopologyApp4 { public static void main(String[] args) {

// 创建topology

TridentTopology topology = new TridentTopology(); // 创建spout

FixedBatchSpout testSpout = new FixedBatchSpout(new Fields("a", "b"), 4,

new Values(1, 2),

new Values(2, 3),

new Values(3, 4),

new Values(4, 5)); // 创建流

Stream stream = topology.newStream("testSpout", testSpout);

stream.aggregate(new Fields("a", "b"), new AvgAggregator(), new Fields("avg"))

.shuffle().each(new Fields("avg"), new PrintFunction(), new Fields("result")); // 本地提交

LocalCluster cluster = new LocalCluster();

cluster.submitTopology("TridentDemo4", new Config(), topology.build());

} }

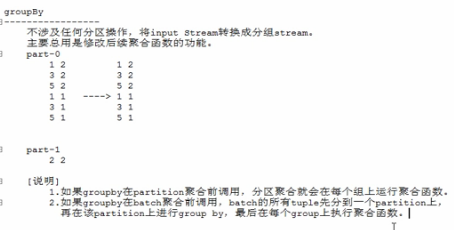

[CombinerAggregator]

在每个partition运行分区聚合,然后再进行global再分区将同一对batch的所有tuple分到一个partition中,最后再这一个partition中进行聚合运算,并产生结果进行输出。

该种方式的网络流量占用少于前两种方式。

public interface CombinerAggregator<T> extents Serializable {

// 在每个tuple上运行,并接受字段值

T init(TridentTuple tuple);

// 合成tuple的值,并输出一个值的tuple

T combine(T val1, T vak2);

// 如果分区不含有tuple,调用该方法.

T zero();

}

合成聚合函数

import clojure.lang.Numbers;

import org.apache.storm.trident.operation.CombinerAggregator;

import org.apache.storm.trident.tuple.TridentTuple; /**

* 合成聚合函数

*/

public class SumCombinerAggregator implements CombinerAggregator<Number> { private static final long serialVersionUID = 1L; @Override

public Number init(TridentTuple tuple) {

return (Number) tuple.getValue(0);

} @Override

public Number combine(Number val1, Number val2) {

return Numbers.add(val1, val2);

} @Override

public Number zero() {

return 0;

} }

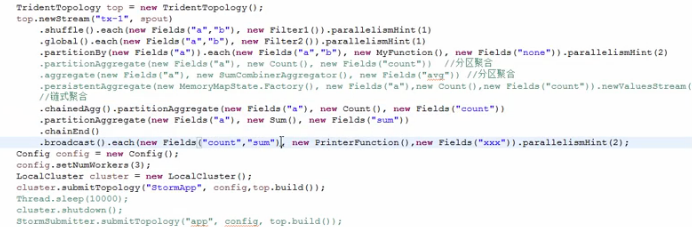

topology

import net.baiqu.shop.report.trident.demo01.PrintFunction;

import org.apache.storm.Config;

import org.apache.storm.LocalCluster;

import org.apache.storm.trident.Stream;

import org.apache.storm.trident.TridentTopology;

import org.apache.storm.trident.testing.FixedBatchSpout;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Values; public class TridentTopologyApp4 { public static void main(String[] args) {

// 创建topology

TridentTopology topology = new TridentTopology(); // 创建spout

FixedBatchSpout testSpout = new FixedBatchSpout(new Fields("a", "b"), 4,

new Values(1, 2),

new Values(2, 3),

new Values(3, 4),

new Values(4, 5)); // 创建流

Stream stream = topology.newStream("testSpout", testSpout);

stream.aggregate(new Fields("a", "b"), new SumCombinerAggregator(), new Fields("sum"))

.shuffle().each(new Fields("sum"), new PrintFunction(), new Fields("result")); // 本地提交

LocalCluster cluster = new LocalCluster();

cluster.submitTopology("TridentDemo4", new Config(), topology.build());

} }

输出结果

PrintFunction:

PrintFunction:

PrintFunction:

......

最新文章

- Another app is currently holding the yum lock

- 基于Python的TestAgent实现

- Mongodb 的基本使用

- 分享50款 Android 移动应用程序图标【下篇】

- sd_cms置顶新闻,背景颜色突击显示

- 如何解决paramiko执行与否的问题

- 【转载】Kafka High Availability

- [BZOJ 1042] [HAOI2008] 硬币购物 【DP + 容斥】

- Discuz X2.5 用户名包含被系统屏蔽的字符[解决方法]

- (转) C# Activator.CreateInstance()方法使用

- 如何中途停止RMAN备份任务

- 如何使用SQLite数据库 匹配一个字符串的子串?

- Netty(二) 从线程模型的角度看 Netty 为什么是高性能的?

- 一个简单的TensorFlow可视化MNIST数据集识别程序

- ubuntu 下搭建redis和php的redis的拓展

- mac安装navicat mysql破解版

- wps去除首字母自动大写

- background 和渐变 总结

- ubuntu安装redis 和可视化工具

- 【六】注入框架RoboGuice使用:(Singletons And ContextSingletons)