Spark-class Launch Script Explained

2024-10-19 10:18:16

#!/usr/bin/env bash

#
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

#

# NOTE: Any changes to this file must be reflected in SparkSubmitDriverBootstrapper.scala!

# Check whether we are running under Cygwin
cygwin=false
case "`uname`" in
  CYGWIN*) cygwin=true;;
esac

SCALA_VERSION=2.10

# Figure out where Spark is installed
# (cd into the parent of bin/, i.e. the Spark installation directory)
FWDIR="$(cd `dirname $0`/..; pwd)"

# Export this as SPARK_HOME
# (sets the SPARK_HOME environment variable)
export SPARK_HOME="$FWDIR"

# Source load-spark-env.sh. Its main job is to run spark-env.sh,
# which mostly sets environment variables for runtime configuration and paths
. $FWDIR/bin/load-spark-env.sh

# If no arguments were given, print the usage message and exit

if [ -z "$1" ]; then
  echo "Usage: spark-class <class> [<args>]" >&2
  exit 1
fi

# If SPARK_MEM is set (non-empty), warn that it is deprecated
if [ -n "$SPARK_MEM" ]; then
  echo -e "Warning: SPARK_MEM is deprecated, please use a more specific config option" >&2
  echo -e "(e.g., spark.executor.memory or spark.driver.memory)." >&2
fi

# Use SPARK_MEM or 512m as the default memory, to be overridden by specific options
# (so the default is 512m when SPARK_MEM is not set)
DEFAULT_MEM=${SPARK_MEM:-512m}

SPARK_DAEMON_JAVA_OPTS="$SPARK_DAEMON_JAVA_OPTS -Dspark.akka.logLifecycleEvents=true"

# Note: SPARK_DRIVER_MEMORY is picked up from the SPARK_DRIVER_MEMORY setting in spark-env.sh
# Add java opts and memory settings for master, worker, history server, executors, and repl.

case "$1" in
  # Master, Worker, and HistoryServer use SPARK_DAEMON_JAVA_OPTS (and specific opts) + SPARK_DAEMON_MEMORY.
  'org.apache.spark.deploy.master.Master')
    OUR_JAVA_OPTS="$SPARK_DAEMON_JAVA_OPTS $SPARK_MASTER_OPTS"
    OUR_JAVA_MEM=${SPARK_DAEMON_MEMORY:-$DEFAULT_MEM}
    ;;
  'org.apache.spark.deploy.worker.Worker')
    OUR_JAVA_OPTS="$SPARK_DAEMON_JAVA_OPTS $SPARK_WORKER_OPTS"
    OUR_JAVA_MEM=${SPARK_DAEMON_MEMORY:-$DEFAULT_MEM}
    ;;
  'org.apache.spark.deploy.history.HistoryServer')
    OUR_JAVA_OPTS="$SPARK_DAEMON_JAVA_OPTS $SPARK_HISTORY_OPTS"
    OUR_JAVA_MEM=${SPARK_DAEMON_MEMORY:-$DEFAULT_MEM}
    ;;

  # Executors use SPARK_JAVA_OPTS + SPARK_EXECUTOR_MEMORY.
  'org.apache.spark.executor.CoarseGrainedExecutorBackend')
    OUR_JAVA_OPTS="$SPARK_JAVA_OPTS $SPARK_EXECUTOR_OPTS"
    OUR_JAVA_MEM=${SPARK_EXECUTOR_MEMORY:-$DEFAULT_MEM}
    ;;
  'org.apache.spark.executor.MesosExecutorBackend')
    OUR_JAVA_OPTS="$SPARK_JAVA_OPTS $SPARK_EXECUTOR_OPTS"
    OUR_JAVA_MEM=${SPARK_EXECUTOR_MEMORY:-$DEFAULT_MEM}
    ;;

  # Spark submit uses SPARK_JAVA_OPTS + SPARK_SUBMIT_OPTS +
  # SPARK_DRIVER_MEMORY + SPARK_SUBMIT_DRIVER_MEMORY.
  'org.apache.spark.deploy.SparkSubmit')
    OUR_JAVA_OPTS="$SPARK_JAVA_OPTS $SPARK_SUBMIT_OPTS"
    OUR_JAVA_MEM=${SPARK_DRIVER_MEMORY:-$DEFAULT_MEM}
    if [ -n "$SPARK_SUBMIT_LIBRARY_PATH" ]; then
      OUR_JAVA_OPTS="$OUR_JAVA_OPTS -Djava.library.path=$SPARK_SUBMIT_LIBRARY_PATH"
    fi
    if [ -n "$SPARK_SUBMIT_DRIVER_MEMORY" ]; then
      OUR_JAVA_MEM="$SPARK_SUBMIT_DRIVER_MEMORY"
    fi
    ;;
  *)
    OUR_JAVA_OPTS="$SPARK_JAVA_OPTS"
    OUR_JAVA_MEM=${SPARK_DRIVER_MEMORY:-$DEFAULT_MEM}
    ;;

esac

# Find the java binary (locate the Java installation)
if [ -n "${JAVA_HOME}" ]; then
  RUNNER="${JAVA_HOME}/bin/java"
else
  if [ `command -v java` ]; then
    RUNNER="java"
  else
    echo "JAVA_HOME is not set" >&2
    exit 1
  fi
fi

# Set JAVA_OPTS to be able to load native libraries and to set heap size

JAVA_OPTS="-XX:MaxPermSize=128m $OUR_JAVA_OPTS"
JAVA_OPTS="$JAVA_OPTS -Xms$OUR_JAVA_MEM -Xmx$OUR_JAVA_MEM"

# Load extra JAVA_OPTS from conf/java-opts, if it exists
if [ -e "$FWDIR/conf/java-opts" ] ; then
  JAVA_OPTS="$JAVA_OPTS `cat $FWDIR/conf/java-opts`"
fi

# Attention: when changing the way the JAVA_OPTS are assembled, the change must be reflected in CommandUtils.scala!

TOOLS_DIR="$FWDIR"/tools
SPARK_TOOLS_JAR=""
if [ -e "$TOOLS_DIR"/target/scala-$SCALA_VERSION/spark-tools*[0-9Tg].jar ]; then
  # Use the JAR from the SBT build
  export SPARK_TOOLS_JAR=`ls "$TOOLS_DIR"/target/scala-$SCALA_VERSION/spark-tools*[0-9Tg].jar`
fi
if [ -e "$TOOLS_DIR"/target/spark-tools*[0-9Tg].jar ]; then
  # Use the JAR from the Maven build
  # TODO: this also needs to become an assembly!
  export SPARK_TOOLS_JAR=`ls "$TOOLS_DIR"/target/spark-tools*[0-9Tg].jar`
fi

# Compute classpath using external script

classpath_output=$($FWDIR/bin/compute-classpath.sh)
if [[ "$?" != "0" ]]; then
  echo "$classpath_output"
  exit 1
else
  CLASSPATH=$classpath_output
fi

if [[ "$1" =~ org.apache.spark.tools.* ]]; then
  if test -z "$SPARK_TOOLS_JAR"; then
    echo "Failed to find Spark Tools Jar in $FWDIR/tools/target/scala-$SCALA_VERSION/" >&2
    echo "You need to build spark before running $1." >&2
    exit 1
  fi
  CLASSPATH="$CLASSPATH:$SPARK_TOOLS_JAR"
fi

if $cygwin; then
  CLASSPATH=`cygpath -wp $CLASSPATH`
  if [ "$1" == "org.apache.spark.tools.JavaAPICompletenessChecker" ]; then
    export SPARK_TOOLS_JAR=`cygpath -w $SPARK_TOOLS_JAR`
  fi
fi

export CLASSPATH

# In Spark submit client mode, the driver is launched in the same JVM as Spark submit itself.
# Here we must parse the properties file for relevant "spark.driver.*" configs before launching
# the driver JVM itself. Instead of handling this complexity in Bash, we launch a separate JVM
# to prepare the launch environment of this driver JVM.

# Finally, launch the org.apache.spark.deploy.SparkSubmit class
if [ -n "$SPARK_SUBMIT_BOOTSTRAP_DRIVER" ]; then
  # This is used only if the properties file actually contains these special configs
  # Export the environment variables needed by SparkSubmitDriverBootstrapper
  export RUNNER
  export CLASSPATH
  export JAVA_OPTS
  export OUR_JAVA_MEM
  export SPARK_CLASS=1
  shift # Ignore main class (org.apache.spark.deploy.SparkSubmit) and use our own
  exec "$RUNNER" org.apache.spark.deploy.SparkSubmitDriverBootstrapper "$@"
else
  # Note: The format of this command is closely echoed in SparkSubmitDriverBootstrapper.scala
  if [ -n "$SPARK_PRINT_LAUNCH_COMMAND" ]; then
    echo -n "Spark Command: " >&2
    # The next four echo lines print each piece separately; the trailing comments show
    # sample values from a Windows/Cygwin setup
    echo "$RUNNER" #E:\Program Files\Java\jdk1..0_79/bin/java
    echo "$CLASSPATH" #E:\cygwin64\home\hadoop2\hive\lib\mysql-connector-java-5.1.-bin.jar;E:\cygwin64\home\hadoop2\hive\conf\hive-site.xml;E:\cygwin64\home\hadoop2\spark-1.1.-bin-hadoop2.\lib\datanucleus-core-3.2..jar;E:\cygwin64\home\hadoop2\spark-1.1.-bin-hadoop2.\lib\datanucleus-api-jdo-3.2..jar;E:\cygwin64\home\hadoop2\spark-1.1.-bin-hadoop2.\lib\datanucleus-rdbms-3.2..jar;.;E:\cygwin64\usr\local\spark-1.1.-bin-hadoop2.\conf;E:\cygwin64\usr\local\spark-1.1.-bin-hadoop2.\lib\spark-assembly-1.1.-hadoop2.4.0.jar;E:\cygwin64\home\hadoop2\hadoop-2.5.\etc\hadoop\
    echo $JAVA_OPTS #-XX:MaxPermSize=512m -Djline.terminal=unix -Xms2048M -Xmx2048M
    echo "$@" #org.apache.spark.deploy.SparkSubmit --class org.apache.spark.repl.Main spark-shell
    echo "$RUNNER" -cp "$CLASSPATH" $JAVA_OPTS "$@" >&2
    echo -e "========================================\n" >&2
  fi
  exec "$RUNNER" -cp "$CLASSPATH" $JAVA_OPTS "$@"
fi
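Here is a minimal sketch that pulls the heap-size half of the `case` statement into a standalone function, so it can be tried without Spark installed. `pick_java_mem` is a hypothetical name, not part of Spark, and the sketch ignores the `OUR_JAVA_OPTS` handling. Note that `${VAR:-default}` falls back to the default when the variable is unset *or* set to an empty string.

```shell
# Hypothetical helper (not part of Spark) that mirrors the memory branch of
# the case statement in spark-class and prints the resulting heap flags.
pick_java_mem() {
  local main_class="$1"
  # SPARK_MEM (deprecated) or 512m is the fallback for every role
  local default_mem="${SPARK_MEM:-512m}"
  local mem
  case "$main_class" in
    org.apache.spark.deploy.master.Master|org.apache.spark.deploy.worker.Worker|org.apache.spark.deploy.history.HistoryServer)
      # Daemons: SPARK_DAEMON_MEMORY
      mem="${SPARK_DAEMON_MEMORY:-$default_mem}"
      ;;
    org.apache.spark.executor.CoarseGrainedExecutorBackend|org.apache.spark.executor.MesosExecutorBackend)
      # Executors: SPARK_EXECUTOR_MEMORY
      mem="${SPARK_EXECUTOR_MEMORY:-$default_mem}"
      ;;
    org.apache.spark.deploy.SparkSubmit)
      # spark-submit: SPARK_DRIVER_MEMORY, overridden by a non-empty SPARK_SUBMIT_DRIVER_MEMORY
      mem="${SPARK_DRIVER_MEMORY:-$default_mem}"
      if [ -n "$SPARK_SUBMIT_DRIVER_MEMORY" ]; then
        mem="$SPARK_SUBMIT_DRIVER_MEMORY"
      fi
      ;;
    *)
      # Any other main class: SPARK_DRIVER_MEMORY
      mem="${SPARK_DRIVER_MEMORY:-$default_mem}"
      ;;
  esac
  # The same value is used for both the initial and the maximum heap size
  echo "-Xms$mem -Xmx$mem"
}
```

For example, suppose `SPARK_MEM` is unset, `SPARK_DRIVER_MEMORY=1g` and `SPARK_SUBMIT_DRIVER_MEMORY=2g`. Then `pick_java_mem org.apache.spark.deploy.SparkSubmit` prints `-Xms2g -Xmx2g`, because the submit-specific variable is checked last.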

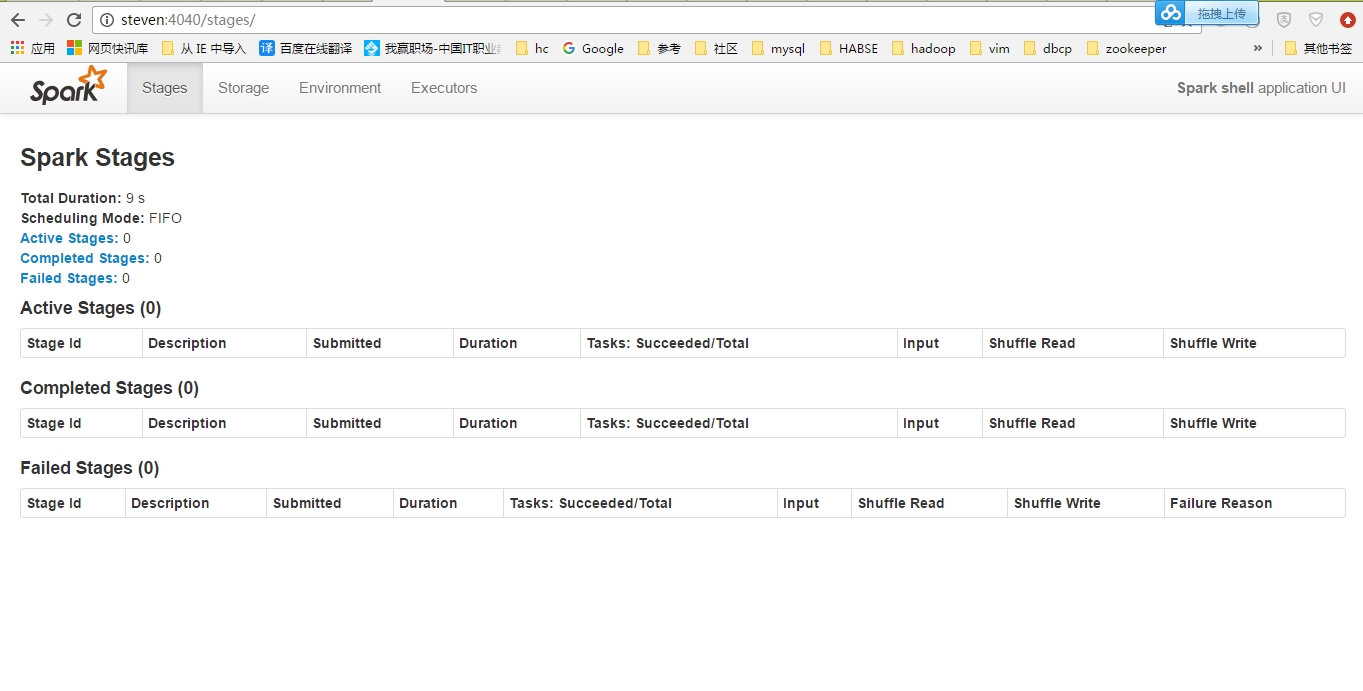

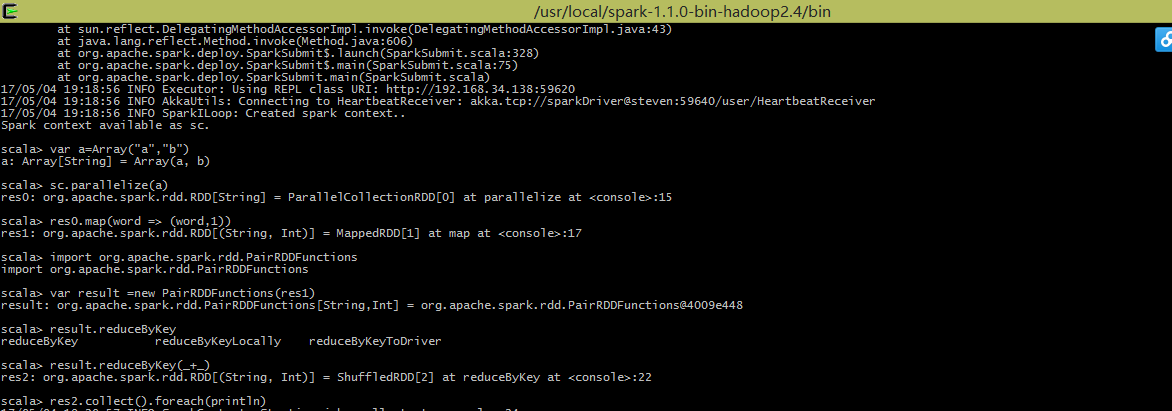
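The tail of the script can also be exercised on its own. That covers finding `java`, assembling `JAVA_OPTS`, and the `SPARK_PRINT_LAUNCH_COMMAND` echo. The functions below are a hypothetical sketch that mirrors those steps. The names `find_java_runner`, `build_java_opts` and `print_launch_command` are made up for illustration, and `SPARK_HOME` stands in for `$FWDIR`.

```shell
# Hypothetical helpers (not part of Spark) mirroring the last steps of spark-class.

# Prefer $JAVA_HOME/bin/java, otherwise fall back to a java found on PATH
find_java_runner() {
  if [ -n "$JAVA_HOME" ]; then
    echo "$JAVA_HOME/bin/java"
  elif command -v java >/dev/null 2>&1; then
    echo "java"
  else
    echo "JAVA_HOME is not set" >&2
    return 1
  fi
}

# $1: role-specific options (OUR_JAVA_OPTS), $2: heap size (OUR_JAVA_MEM)
build_java_opts() {
  local opts="-XX:MaxPermSize=128m $1 -Xms$2 -Xmx$2"
  # Append extra options from $SPARK_HOME/conf/java-opts when that file exists
  if [ -e "$SPARK_HOME/conf/java-opts" ]; then
    opts="$opts $(cat "$SPARK_HOME/conf/java-opts")"
  fi
  echo "$opts"
}

# Print the command to stderr, like the SPARK_PRINT_LAUNCH_COMMAND branch does.
# $1: runner, $2: classpath, $3: JAVA_OPTS, remaining args: main class and its arguments
print_launch_command() {
  local runner="$1" classpath="$2" java_opts="$3"
  shift 3
  printf 'Spark Command: %s -cp %s %s %s\n' "$runner" "$classpath" "$java_opts" "$*" >&2
  echo "========================================" >&2
}
```

The script itself uses `[ `command -v java` ]`. The sketch instead checks the exit status of `command -v`, so the check does not break when the path to `java` contains spaces, as `Program Files` paths on Windows do.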

Running it in client mode:

Running a WordCount:
