MapReduce in-class test results

2024-09-03 15:13:40

    package mapreduce;

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            Job job = Job.getInstance();
            job.setJobName("WordCount");
            job.setJarByClass(WordCount.class);
            job.setMapperClass(doMapper.class);
            job.setReducerClass(doReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // Input file and output directory in HDFS. The input path must point to the
            // uploaded data file; the output directory must not exist before the job runs.
            Path in = new Path("hdfs://localhost:9000/a/buyer_favorite1");
            Path out = new Path("hdfs://localhost:9000/a/out");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);

            // Exit with 0 on success, 1 on failure.
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }

        // Emits (first space-separated field of each line, 1).
        public static class doMapper extends Mapper<Object, Text, Text, IntWritable> {
            private static final IntWritable one = new IntWritable(1);
            private final Text word = new Text();

            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                StringTokenizer tokenizer = new StringTokenizer(value.toString(), " ");
                // nextToken() throws NoSuchElementException if the line is blank.
                word.set(tokenizer.nextToken());
                context.write(word, one);
            }
        }

        // Sums the 1s emitted for each key.
        public static class doReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
            private final IntWritable result = new IntWritable();

            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }
    }
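
The logic of the job can be checked without a cluster. The following plain-Java sketch mimics its three phases in memory: map emits (first space-separated field, 1) for each line, shuffle groups the values by key in sorted order, and reduce sums them. It is not Hadoop code; the class `LocalWordCountSketch` and its methods are hypothetical names, it assumes space-separated input lines just as `doMapper` does, and the sample lines are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// In-memory sketch of the WordCount job: map -> group by key -> reduce.
// Hypothetical helper class, not part of Hadoop or the original code.
class LocalWordCountSketch {

    // Map phase: like doMapper, emit (first space-separated token, 1) per line.
    // As in doMapper, a blank line makes nextToken() throw NoSuchElementException.
    static List<Map.Entry<String, Integer>> map(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            StringTokenizer tokenizer = new StringTokenizer(line, " ");
            pairs.add(Map.entry(tokenizer.nextToken(), 1));
        }
        return pairs;
    }

    // Shuffle phase: group values by key; TreeMap keeps keys sorted,
    // mirroring the fact that a reducer receives its keys in sorted order.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            groups.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return groups;
    }

    // Reduce phase: like doReducer, sum the values collected for each key.
    static Map<String, Integer> reduce(Map<String, List<Integer>> groups) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : groups.entrySet()) {
            int sum = 0;
            for (int value : group.getValue()) {
                sum += value;
            }
            result.put(group.getKey(), sum);
        }
        return result;
    }

    static Map<String, Integer> run(List<String> lines) {
        return reduce(shuffle(map(lines)));
    }

    public static void main(String[] args) {
        // Hypothetical sample lines in "firstField secondField thirdField" form.
        List<String> lines = List.of("u1 p1 d1", "u2 p2 d1", "u1 p3 d2");
        System.out.println(run(lines)); // prints {u1=2, u2=1}
    }
}
```

Because the mapper keeps only the first field of each line, the result is the number of lines per distinct first-field value, not a count of every word in the file as the class name might suggest.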

Task:

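Create the file buyer_mapreduce1.txt under /home/hadoop/data/mapreduce1/a and add some data to it. Then run

cd /usr/local/hadoop

to switch to the /usr/local/hadoop directory, and upload the text file you just created with

./bin/hdfs dfs -put /home/hadoop/data/mapreduce1/a/buyer_mapreduce1.txt a

This puts it into the a directory under the hadoop user's HDFS home directory (the relative path a resolves to /user/hadoop/a when running as the hadoop user). Carry out the remaining steps on that file.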

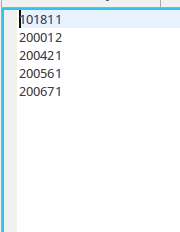

Run screenshot:

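Lessons learned: if the Linux text file contains blank lines between data lines, the job fails with an error; removing the blank lines makes the error go away. The cause is in doMapper: it calls tokenizer.nextToken() without first checking tokenizer.hasMoreTokens(), and a blank line yields no tokens, so nextToken() throws java.util.NoSuchElementException.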
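
The failure described above can be reproduced in plain Java. This sketch shows that calling `nextToken()` on a blank line throws `NoSuchElementException`, and shows a guarded variant that checks `hasMoreTokens()` first. The class `BlankLineDemo` and its two methods are hypothetical names for illustration. Inside `doMapper`, the equivalent guard would be an early `if (!tokenizer.hasMoreTokens()) return;` placed before `nextToken()`, which skips blank lines instead of requiring them to be deleted from the input file; this is an alternative to what the original run did, not part of it.

```java
import java.util.NoSuchElementException;
import java.util.StringTokenizer;

// Hypothetical demo: why a blank line breaks the mapper, and one way to guard against it.
class BlankLineDemo {

    // Same call pattern as doMapper: nextToken() without checking first.
    static String firstFieldUnguarded(String line) {
        return new StringTokenizer(line, " ").nextToken();
    }

    // Guarded variant: returns null for a line with no tokens (empty or spaces only).
    static String firstFieldOrNull(String line) {
        StringTokenizer tokenizer = new StringTokenizer(line, " ");
        return tokenizer.hasMoreTokens() ? tokenizer.nextToken() : null;
    }

    public static void main(String[] args) {
        try {
            firstFieldUnguarded("");
        } catch (NoSuchElementException e) {
            System.out.println("blank line: NoSuchElementException");
        }
        System.out.println(firstFieldOrNull("u1 p1 d1")); // prints u1
        System.out.println(firstFieldOrNull("   "));      // prints null
    }
}
```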
