Troubleshooting: Problems Running a Scala-Based Spark Program in IDEA on Ubuntu 18.04.2

I. Preface

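I have recently been running a small experiment that uses Scala and Spark, so I set it up on Linux. The simplest possible starter program ended up taking me a day or two. Besides being embarrassed, I came away with a deep appreciation of how hard these tools are to use. No wonder several of the Hadoop companies are gradually declining.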

II. Troubleshooting

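If you have read my earlier posts, you know that I have used Hadoop and Spark before, and that I ran into plenty of trouble back then. These projects iterate quickly, and in my experience each release keeps very little compatibility with earlier ones, so choosing versions matters a great deal. Apart from the version-matching notes on the official sites, very little material online covers which versions work together, even though this information is essential.

Installation is also tedious. The downloads are nominally ready to use out of the box, but some configuration files still have to be edited. That alone would be tolerable. The real frustration starts when you run things from the command line and get baffling errors, most of which trace back to the underlying Java, Hadoop, or Scala versions. The tools do a poor job of reporting what is actually wrong, so you go looking online, where the little you find is often off target or contradictory. It usually takes a day or two to reach a working solution. Unless these products improve, I think they will lose out to tools like MySQL and MongoDB. In this small test I lost two days to exactly this kind of version dependency problem: the strange errors that appear when running a Scala-based Spark program in IDEA on Ubuntu 18.04.2.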

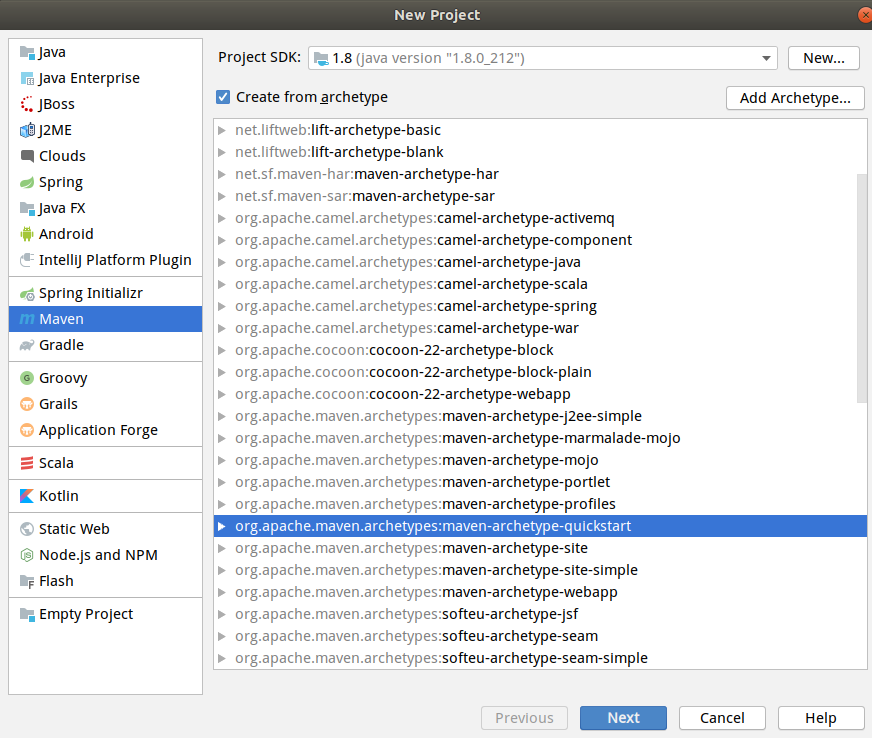

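Let me walk through how I built the program step by step. There are plenty of examples online, but they are superficial and vague, and they skip the most important details, whether from a lack of expressive ability or from not bothering. In my view, posts like that show little sense of responsibility toward the reader.

The first question is what kind of project to create. IDEA offers two ways to support Scala programs. The first is to create a Scala project directly: install the Scala plugin, then configure the runtime environment yourself after creating the project. That environment is tangled, and getting it right may take many attempts and a lot of wasted effort. The second way also needs the Scala plugin, but you create a Maven project, declare what you need in pom.xml so that dependencies are downloaded automatically through Maven's dependency and inheritance rules, and then add Scala support to the project. The second way is clearly simpler, so that is what we use: a Maven project.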

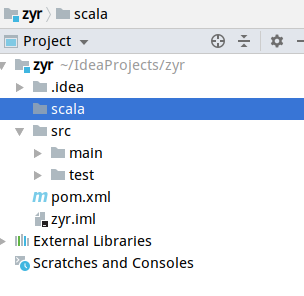

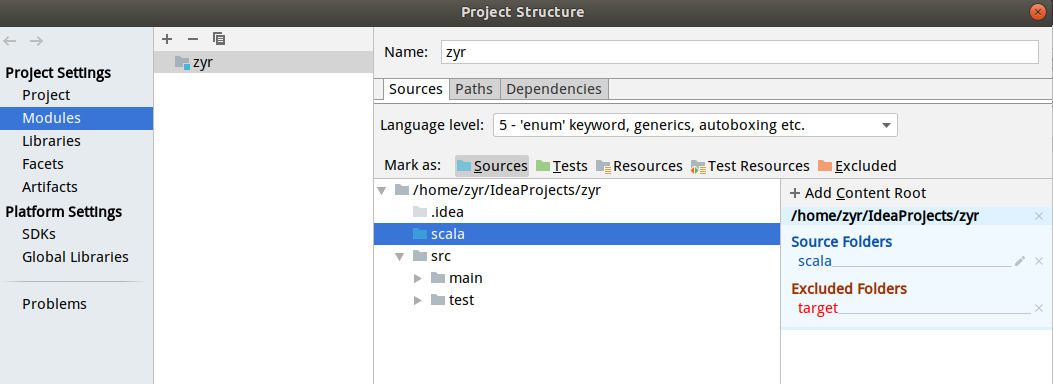

Create a new folder and, in the Project Structure dialog, mark it as a source folder.

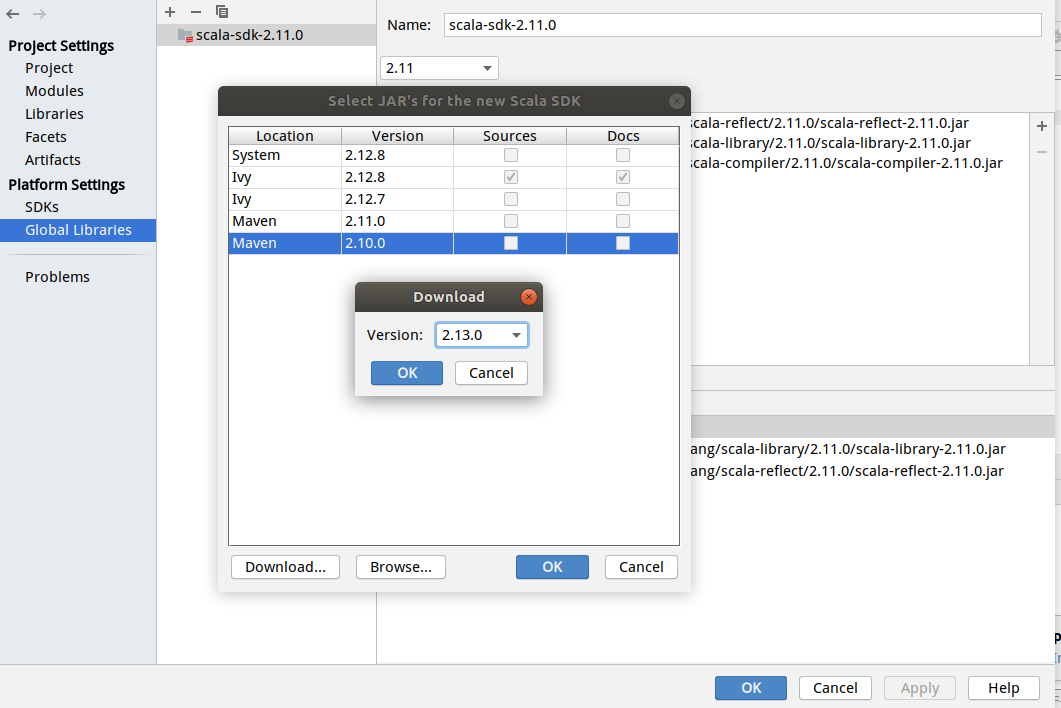

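Finally, we need to add the Scala version provided through the plugin, and this is where versions start to matter. Scala 2.12 requires Java 8, while Scala 2.11 runs on Java 8 as well as on older JVMs. We are on Java 8, so 2.11 is a valid choice, but there are many 2.11.x releases and we have to pick one. In this dialog we add the matching Scala version. If it is not listed, click the Download button and choose the version to download. I have to complain about IDEA here: the download took half an hour, there was no progress bar, which is very frustrating, and cancelling it is awkward too. Once the download finishes, select that version.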

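At this point we configure pom.xml. We need Spark's machine learning library (MLlib), so we simply declare it as a dependency. Maven resolves the transitive dependencies between the packages for us, which deserves praise.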

    <properties>
      <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
      <maven.compiler.source>1.7</maven.compiler.source>
      <maven.compiler.target>1.7</maven.compiler.target>
      <!-- <scala.version>2.11.0</scala.version>-->
      <spark.artifactID.suffix>2.11</spark.artifactID.suffix>
      <spark.version>2.4.3</spark.version>
    </properties>

    <dependencies>
      <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.11</version>
        <scope>test</scope>
      </dependency>
      <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-mllib_${spark.artifactID.suffix}</artifactId>
        <version>${spark.version}</version>
      </dependency>
    </dependencies>

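Here the Scala version is set to 2.11, matching the version downloaded earlier. With 2.12, for reasons I could not determine, the program would not even build although the dependencies had been imported: it reported that packages could not be found, even though I could see those very files among the downloaded dependencies. That was a real surprise. When the packages were finally found, running the program threw bizarre exceptions instead. It is remarkable that a Scala hello-world program can be this hard to run, and all because of version problems.

For Spark I went by what is available in the Maven repository and chose the latest release, 2.4.3. This is the configuration that currently runs for me, so it is fine for now. Stranger still, sometimes the first run succeeded, a second run of a different program failed, and then a third run of the first program failed in the same way. I cleared IDEA's caches and restarted many times and the problem persisted, and the same thing happened on another computer. It was close to hopeless. The combination I eventually found to work is Java 8 + Scala 2.11.0 + spark-mllib_2.11 + Spark 2.4.3, and with that the problem was resolved.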
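One way to catch this kind of mismatch early is to compare the Scala version the program actually runs on with the `_2.11` suffix of the Spark artifact. The following is only a minimal sketch in plain Scala with no Spark dependency. The object and method names (`ScalaVersionCheck`, `binaryVersion`, `artifactSuffix`, `isCompatible`) are hypothetical helpers introduced for illustration; only `scala.util.Properties.versionNumberString` comes from the standard library. It may also be worth noting that the classpath printed in the run output lists both scala-library-2.11.0 and scala-library-2.11.12, so a check like this shows which one is actually loaded.

```scala
import scala.util.Properties

object ScalaVersionCheck {
  // Reduce a full version such as "2.11.12" to its binary version "2.11".
  def binaryVersion(fullVersion: String): String =
    fullVersion.split('.').take(2).mkString(".")

  // Extract the Scala suffix from an artifact ID such as "spark-mllib_2.11".
  // Returns None when the artifact ID carries no suffix (e.g. "junit").
  def artifactSuffix(artifactId: String): Option[String] = {
    val idx = artifactId.lastIndexOf('_')
    if (idx >= 0 && idx < artifactId.length - 1) Some(artifactId.substring(idx + 1))
    else None
  }

  // True when the artifact's suffix equals the binary version of scalaVersion.
  def isCompatible(artifactId: String, scalaVersion: String): Boolean =
    artifactSuffix(artifactId).contains(binaryVersion(scalaVersion))

  def main(args: Array[String]): Unit = {
    // Version of the scala-library that is actually on the classpath at runtime.
    val running = Properties.versionNumberString
    val artifact = "spark-mllib_2.11" // artifact ID used in the pom.xml above
    println(s"Running Scala $running; $artifact compatible: ${isCompatible(artifact, running)}")
  }
}
```

Running `main` from the same IDEA module prints the Scala version in use, which makes it easier to see whether the SDK selected in the project settings and the artifact suffix in pom.xml agree.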

First program:

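    package com.kmeans

    import org.apache.spark.{SparkConf, SparkContext}

    object MyTest {
      def main(args: Array[String]): Unit = {
        // Local input file; the file:// scheme reads from the local file system.
        val logFile = "file:///home/zyr/file.txt"
        // Run Spark locally with two worker threads.
        val conf = new SparkConf().setAppName("Simple Application").setMaster("local[2]")
        val sc = new SparkContext(conf)
        // Read the file with at least 2 partitions and cache it.
        val logData = sc.textFile(logFile, 2).cache()
        // Split each line on spaces and count the tokens that contain "a".
        val num = logData.flatMap(x => x.split(" ")).filter(_.contains("a")).count()
        println("Words with a : %s".format(num))
        sc.stop()
      }
    }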

Input file (/home/zyr/file.txt):

    xyr a b c d f g a d f g
    a a a a a a a a a
    w e r t y yuu
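As a sanity check that does not depend on Spark or on any version setup, the same split, filter, and count logic can be reproduced with plain Scala collections on the three lines of this file. This is only a sketch. `WordCountCheck` and `countTokensContaining` are hypothetical names introduced here, and the input is hard-coded rather than read from disk.

```scala
object WordCountCheck {
  // Same logic as the Spark job: split each line on single spaces,
  // keep the tokens that contain the needle, and count them.
  def countTokensContaining(lines: Seq[String], needle: String): Long =
    lines.flatMap(_.split(" ")).count(_.contains(needle)).toLong

  def main(args: Array[String]): Unit = {
    // The three lines of the input file, hard-coded for the check.
    val lines = Seq(
      "xyr a b c d f g a d f g",
      "a a a a a a a a a",
      "w e r t y yuu"
    )
    // prints "Words with a : 11" (2 tokens in line 1, 9 in line 2, 0 in line 3)
    println("Words with a : %s".format(countTokensContaining(lines, "a")))
  }
}
```

If the Spark job reports a different number for the same file, the difference is more likely to come from the input (for example extra whitespace) than from the version setup.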

Output:

/usr/lib/jvm/java-8-openjdk-amd64/bin/java -javaagent:/usr/local/idea/lib/idea_rt.jar=44451:/usr/local/idea/bin -Dfile.encoding=UTF-8 -classpath /usr/lib/jvm/java-8-openjdk-amd64/jre/lib/charsets.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/cldrdata.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/dnsns.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/icedtea-sound.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/jaccess.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/java-atk-wrapper.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/localedata.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/nashorn.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunec.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunjce_provider.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunpkcs11.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/zipfs.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/jce.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/jsse.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/management-agent.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/resources.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/rt.jar:/home/zyr/IdeaProjects/myspark/target/classes:/home/zyr/.m2/repository/org/scala-lang/scala-reflect/2.11.0/scala-reflect-2.11.0.jar:/home/zyr/.m2/repository/org/scala-lang/scala-library/2.11.0/scala-library-2.11.0.jar:/home/zyr/.m2/repository/org/apache/spark/spark-mllib_2.11/2.4.3/spark-mllib_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/scala-lang/modules/scala-parser-combinators_2.11/1.1.0/scala-parser-combinators_2.11-1.1.0.jar:/home/zyr/.m2/repository/org/scala-lang/scala-library/2.11.12/scala-library-2.11.12.jar:/home/zyr/.m2/repository/org/apache/spark/spark-core_2.11/2.4.3/spark-core_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/thoughtworks/paranamer/paranamer/2.8/paranamer-2.8.jar:/home/zyr/.m2/repository/org/apache/avro/avro/1.8.2/avro-1.8.2.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-core-asl/1.9.13/jackson-core-asl-1.9.13.jar:/home/zyr
/.m2/repository/org/codehaus/jackson/jackson-mapper-asl/1.9.13/jackson-mapper-asl-1.9.13.jar:/home/zyr/.m2/repository/org/apache/commons/commons-compress/1.8.1/commons-compress-1.8.1.jar:/home/zyr/.m2/repository/org/tukaani/xz/1.5/xz-1.5.jar:/home/zyr/.m2/repository/org/apache/avro/avro-mapred/1.8.2/avro-mapred-1.8.2-hadoop2.jar:/home/zyr/.m2/repository/org/apache/avro/avro-ipc/1.8.2/avro-ipc-1.8.2.jar:/home/zyr/.m2/repository/commons-codec/commons-codec/1.9/commons-codec-1.9.jar:/home/zyr/.m2/repository/com/twitter/chill_2.11/0.9.3/chill_2.11-0.9.3.jar:/home/zyr/.m2/repository/com/esotericsoftware/kryo-shaded/4.0.2/kryo-shaded-4.0.2.jar:/home/zyr/.m2/repository/com/esotericsoftware/minlog/1.3.0/minlog-1.3.0.jar:/home/zyr/.m2/repository/org/objenesis/objenesis/2.5.1/objenesis-2.5.1.jar:/home/zyr/.m2/repository/com/twitter/chill-java/0.9.3/chill-java-0.9.3.jar:/home/zyr/.m2/repository/org/apache/xbean/xbean-asm6-shaded/4.8/xbean-asm6-shaded-4.8.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-client/2.6.5/hadoop-client-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-common/2.6.5/hadoop-common-2.6.5.jar:/home/zyr/.m2/repository/commons-cli/commons-cli/1.2/commons-cli-1.2.jar:/home/zyr/.m2/repository/xmlenc/xmlenc/0.52/xmlenc-0.52.jar:/home/zyr/.m2/repository/commons-httpclient/commons-httpclient/3.1/commons-httpclient-3.1.jar:/home/zyr/.m2/repository/commons-io/commons-io/2.4/commons-io-2.4.jar:/home/zyr/.m2/repository/commons-collections/commons-collections/3.2.2/commons-collections-3.2.2.jar:/home/zyr/.m2/repository/commons-configuration/commons-configuration/1.6/commons-configuration-1.6.jar:/home/zyr/.m2/repository/commons-digester/commons-digester/1.8/commons-digester-1.8.jar:/home/zyr/.m2/repository/commons-beanutils/commons-beanutils/1.7.0/commons-beanutils-1.7.0.jar:/home/zyr/.m2/repository/com/google/code/gson/gson/2.2.4/gson-2.2.4.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-auth/2.6.5/hadoop-auth-2.6.5.jar:/home/zyr/.m2/repository/
org/apache/httpcomponents/httpclient/4.2.5/httpclient-4.2.5.jar:/home/zyr/.m2/repository/org/apache/httpcomponents/httpcore/4.2.4/httpcore-4.2.4.jar:/home/zyr/.m2/repository/org/apache/directory/server/apacheds-kerberos-codec/2.0.0-M15/apacheds-kerberos-codec-2.0.0-M15.jar:/home/zyr/.m2/repository/org/apache/directory/server/apacheds-i18n/2.0.0-M15/apacheds-i18n-2.0.0-M15.jar:/home/zyr/.m2/repository/org/apache/directory/api/api-asn1-api/1.0.0-M20/api-asn1-api-1.0.0-M20.jar:/home/zyr/.m2/repository/org/apache/directory/api/api-util/1.0.0-M20/api-util-1.0.0-M20.jar:/home/zyr/.m2/repository/org/apache/curator/curator-client/2.6.0/curator-client-2.6.0.jar:/home/zyr/.m2/repository/org/htrace/htrace-core/3.0.4/htrace-core-3.0.4.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-hdfs/2.6.5/hadoop-hdfs-2.6.5.jar:/home/zyr/.m2/repository/org/mortbay/jetty/jetty-util/6.1.26/jetty-util-6.1.26.jar:/home/zyr/.m2/repository/xerces/xercesImpl/2.9.1/xercesImpl-2.9.1.jar:/home/zyr/.m2/repository/xml-apis/xml-apis/1.3.04/xml-apis-1.3.04.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-app/2.6.5/hadoop-mapreduce-client-app-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-common/2.6.5/hadoop-mapreduce-client-common-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-client/2.6.5/hadoop-yarn-client-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-server-common/2.6.5/hadoop-yarn-server-common-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-shuffle/2.6.5/hadoop-mapreduce-client-shuffle-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-api/2.6.5/hadoop-yarn-api-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-core/2.6.5/hadoop-mapreduce-client-core-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-common/2.6.5/hadoop-yarn-common-2.6.5.jar:/home/zyr/.m2/repository/javax/xml/bind/jaxb-api/2.2.2/jaxb-api-2.2.2.jar:/home/zyr/.
m2/repository/javax/xml/stream/stax-api/1.0-2/stax-api-1.0-2.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-jaxrs/1.9.13/jackson-jaxrs-1.9.13.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-xc/1.9.13/jackson-xc-1.9.13.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-jobclient/2.6.5/hadoop-mapreduce-client-jobclient-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-annotations/2.6.5/hadoop-annotations-2.6.5.jar:/home/zyr/.m2/repository/org/apache/spark/spark-launcher_2.11/2.4.3/spark-launcher_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-kvstore_2.11/2.4.3/spark-kvstore_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/fusesource/leveldbjni/leveldbjni-all/1.8/leveldbjni-all-1.8.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.6.7/jackson-core-2.6.7.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-annotations/2.6.7/jackson-annotations-2.6.7.jar:/home/zyr/.m2/repository/org/apache/spark/spark-network-common_2.11/2.4.3/spark-network-common_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-network-shuffle_2.11/2.4.3/spark-network-shuffle_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-unsafe_2.11/2.4.3/spark-unsafe_2.11-2.4.3.jar:/home/zyr/.m2/repository/javax/activation/activation/1.1.1/activation-1.1.1.jar:/home/zyr/.m2/repository/org/apache/curator/curator-recipes/2.6.0/curator-recipes-2.6.0.jar:/home/zyr/.m2/repository/org/apache/curator/curator-framework/2.6.0/curator-framework-2.6.0.jar:/home/zyr/.m2/repository/com/google/guava/guava/16.0.1/guava-16.0.1.jar:/home/zyr/.m2/repository/org/apache/zookeeper/zookeeper/3.4.6/zookeeper-3.4.6.jar:/home/zyr/.m2/repository/javax/servlet/javax.servlet-api/3.1.0/javax.servlet-api-3.1.0.jar:/home/zyr/.m2/repository/org/apache/commons/commons-lang3/3.5/commons-lang3-3.5.jar:/home/zyr/.m2/repository/com/google/code/findbugs/jsr305/1.3.9/jsr305-1.3.9.jar:/home/zyr/.m2/repository/org/slf4j/slf4j-api/1.7.16
/slf4j-api-1.7.16.jar:/home/zyr/.m2/repository/org/slf4j/jul-to-slf4j/1.7.16/jul-to-slf4j-1.7.16.jar:/home/zyr/.m2/repository/org/slf4j/jcl-over-slf4j/1.7.16/jcl-over-slf4j-1.7.16.jar:/home/zyr/.m2/repository/log4j/log4j/1.2.17/log4j-1.2.17.jar:/home/zyr/.m2/repository/org/slf4j/slf4j-log4j12/1.7.16/slf4j-log4j12-1.7.16.jar:/home/zyr/.m2/repository/com/ning/compress-lzf/1.0.3/compress-lzf-1.0.3.jar:/home/zyr/.m2/repository/org/xerial/snappy/snappy-java/1.1.7.3/snappy-java-1.1.7.3.jar:/home/zyr/.m2/repository/org/lz4/lz4-java/1.4.0/lz4-java-1.4.0.jar:/home/zyr/.m2/repository/com/github/luben/zstd-jni/1.3.2-2/zstd-jni-1.3.2-2.jar:/home/zyr/.m2/repository/org/roaringbitmap/RoaringBitmap/0.7.45/RoaringBitmap-0.7.45.jar:/home/zyr/.m2/repository/org/roaringbitmap/shims/0.7.45/shims-0.7.45.jar:/home/zyr/.m2/repository/commons-net/commons-net/3.1/commons-net-3.1.jar:/home/zyr/.m2/repository/org/json4s/json4s-jackson_2.11/3.5.3/json4s-jackson_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-core_2.11/3.5.3/json4s-core_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-ast_2.11/3.5.3/json4s-ast_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-scalap_2.11/3.5.3/json4s-scalap_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/scala-lang/modules/scala-xml_2.11/1.0.6/scala-xml_2.11-1.0.6.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-client/2.22.2/jersey-client-2.22.2.jar:/home/zyr/.m2/repository/javax/ws/rs/javax.ws.rs-api/2.0.1/javax.ws.rs-api-2.0.1.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-api/2.4.0-b34/hk2-api-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-utils/2.4.0-b34/hk2-utils-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/external/aopalliance-repackaged/2.4.0-b34/aopalliance-repackaged-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/external/javax.inject/2.4.0-b34/javax.inject-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-locator/2.4.0-b34/hk2-locator-2.4.0-b34.jar:/home/zyr/.m2/re
pository/org/javassist/javassist/3.18.1-GA/javassist-3.18.1-GA.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-common/2.22.2/jersey-common-2.22.2.jar:/home/zyr/.m2/repository/javax/annotation/javax.annotation-api/1.2/javax.annotation-api-1.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/bundles/repackaged/jersey-guava/2.22.2/jersey-guava-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/hk2/osgi-resource-locator/1.0.1/osgi-resource-locator-1.0.1.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-server/2.22.2/jersey-server-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/media/jersey-media-jaxb/2.22.2/jersey-media-jaxb-2.22.2.jar:/home/zyr/.m2/repository/javax/validation/validation-api/1.1.0.Final/validation-api-1.1.0.Final.jar:/home/zyr/.m2/repository/org/glassfish/jersey/containers/jersey-container-servlet/2.22.2/jersey-container-servlet-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/containers/jersey-container-servlet-core/2.22.2/jersey-container-servlet-core-2.22.2.jar:/home/zyr/.m2/repository/io/netty/netty-all/4.1.17.Final/netty-all-4.1.17.Final.jar:/home/zyr/.m2/repository/io/netty/netty/3.9.9.Final/netty-3.9.9.Final.jar:/home/zyr/.m2/repository/com/clearspring/analytics/stream/2.7.0/stream-2.7.0.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-core/3.1.5/metrics-core-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-jvm/3.1.5/metrics-jvm-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-json/3.1.5/metrics-json-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-graphite/3.1.5/metrics-graphite-3.1.5.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.6.7.1/jackson-databind-2.6.7.1.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/module/jackson-module-scala_2.11/2.6.7.1/jackson-module-scala_2.11-2.6.7.1.jar:/home/zyr/.m2/repository/org/scala-lang/scala-reflect/2.11.8/scala-reflect-2.11.8.jar:/home/zyr/.m2/repository/com/fasterxml/jackson
/module/jackson-module-paranamer/2.7.9/jackson-module-paranamer-2.7.9.jar:/home/zyr/.m2/repository/org/apache/ivy/ivy/2.4.0/ivy-2.4.0.jar:/home/zyr/.m2/repository/oro/oro/2.0.8/oro-2.0.8.jar:/home/zyr/.m2/repository/net/razorvine/pyrolite/4.13/pyrolite-4.13.jar:/home/zyr/.m2/repository/net/sf/py4j/py4j/0.10.7/py4j-0.10.7.jar:/home/zyr/.m2/repository/org/apache/commons/commons-crypto/1.0.0/commons-crypto-1.0.0.jar:/home/zyr/.m2/repository/org/apache/spark/spark-streaming_2.11/2.4.3/spark-streaming_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-sql_2.11/2.4.3/spark-sql_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/univocity/univocity-parsers/2.7.3/univocity-parsers-2.7.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-sketch_2.11/2.4.3/spark-sketch_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-catalyst_2.11/2.4.3/spark-catalyst_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/codehaus/janino/janino/3.0.9/janino-3.0.9.jar:/home/zyr/.m2/repository/org/codehaus/janino/commons-compiler/3.0.9/commons-compiler-3.0.9.jar:/home/zyr/.m2/repository/org/antlr/antlr4-runtime/4.7/antlr4-runtime-4.7.jar:/home/zyr/.m2/repository/org/apache/orc/orc-core/1.5.5/orc-core-1.5.5-nohive.jar:/home/zyr/.m2/repository/org/apache/orc/orc-shims/1.5.5/orc-shims-1.5.5.jar:/home/zyr/.m2/repository/com/google/protobuf/protobuf-java/2.5.0/protobuf-java-2.5.0.jar:/home/zyr/.m2/repository/commons-lang/commons-lang/2.6/commons-lang-2.6.jar:/home/zyr/.m2/repository/io/airlift/aircompressor/0.10/aircompressor-0.10.jar:/home/zyr/.m2/repository/org/apache/orc/orc-mapreduce/1.5.5/orc-mapreduce-1.5.5-nohive.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-column/1.10.1/parquet-column-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-common/1.10.1/parquet-common-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-encoding/1.10.1/parquet-encoding-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-hadoop/1.10.1/parquet-hadoop-1.10.1.jar:/h
ome/zyr/.m2/repository/org/apache/parquet/parquet-format/2.4.0/parquet-format-2.4.0.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-jackson/1.10.1/parquet-jackson-1.10.1.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-vector/0.10.0/arrow-vector-0.10.0.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-format/0.10.0/arrow-format-0.10.0.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-memory/0.10.0/arrow-memory-0.10.0.jar:/home/zyr/.m2/repository/joda-time/joda-time/2.9.9/joda-time-2.9.9.jar:/home/zyr/.m2/repository/com/carrotsearch/hppc/0.7.2/hppc-0.7.2.jar:/home/zyr/.m2/repository/com/vlkan/flatbuffers/1.2.0-3f79e055/flatbuffers-1.2.0-3f79e055.jar:/home/zyr/.m2/repository/org/apache/spark/spark-graphx_2.11/2.4.3/spark-graphx_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/github/fommil/netlib/core/1.1.2/core-1.1.2.jar:/home/zyr/.m2/repository/net/sourceforge/f2j/arpack_combined_all/0.1/arpack_combined_all-0.1.jar:/home/zyr/.m2/repository/org/apache/spark/spark-mllib-local_2.11/2.4.3/spark-mllib-local_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/scalanlp/breeze_2.11/0.13.2/breeze_2.11-0.13.2.jar:/home/zyr/.m2/repository/org/scalanlp/breeze-macros_2.11/0.13.2/breeze-macros_2.11-0.13.2.jar:/home/zyr/.m2/repository/net/sf/opencsv/opencsv/2.3/opencsv-2.3.jar:/home/zyr/.m2/repository/com/github/rwl/jtransforms/2.4.0/jtransforms-2.4.0.jar:/home/zyr/.m2/repository/org/spire-math/spire_2.11/0.13.0/spire_2.11-0.13.0.jar:/home/zyr/.m2/repository/org/spire-math/spire-macros_2.11/0.13.0/spire-macros_2.11-0.13.0.jar:/home/zyr/.m2/repository/org/typelevel/machinist_2.11/0.6.1/machinist_2.11-0.6.1.jar:/home/zyr/.m2/repository/com/chuusai/shapeless_2.11/2.3.2/shapeless_2.11-2.3.2.jar:/home/zyr/.m2/repository/org/typelevel/macro-compat_2.11/1.1.1/macro-compat_2.11-1.1.1.jar:/home/zyr/.m2/repository/org/apache/commons/commons-math3/3.4.1/commons-math3-3.4.1.jar:/home/zyr/.m2/repository/org/apache/spark/spark-tags_2.11/2.4.3/spark-tags_2.11-2.4.3.jar:/home/zyr/.m2/repository
/org/spark-project/spark/unused/1.0.0/unused-1.0.0.jar com.kmeans.MyTest

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

19/07/10 11:36:47 WARN Utils: Your hostname, zyrpc resolves to a loopback address: 127.0.1.1; using 192.168.31.160 instead (on interface ens33)

19/07/10 11:36:47 WARN Utils: Set SPARK_LOCAL_IP if you need to bind to another address

19/07/10 11:36:47 INFO SparkContext: Running Spark version 2.4.3

19/07/10 11:36:49 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

19/07/10 11:36:50 INFO SparkContext: Submitted application: Simple Application

19/07/10 11:36:50 INFO SecurityManager: Changing view acls to: zyr

19/07/10 11:36:50 INFO SecurityManager: Changing modify acls to: zyr

19/07/10 11:36:50 INFO SecurityManager: Changing view acls groups to:

19/07/10 11:36:50 INFO SecurityManager: Changing modify acls groups to:

19/07/10 11:36:50 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(zyr); groups with view permissions: Set(); users with modify permissions: Set(zyr); groups with modify permissions: Set()

19/07/10 11:36:52 INFO Utils: Successfully started service 'sparkDriver' on port 41147.

19/07/10 11:36:52 INFO SparkEnv: Registering MapOutputTracker

19/07/10 11:36:52 INFO SparkEnv: Registering BlockManagerMaster

19/07/10 11:36:52 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

19/07/10 11:36:52 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

19/07/10 11:36:52 INFO DiskBlockManager: Created local directory at /tmp/blockmgr-63b48034-1ffc-40fa-bb45-6c117cb0451b

19/07/10 11:36:52 INFO MemoryStore: MemoryStore started with capacity 345.0 MB

19/07/10 11:36:52 INFO SparkEnv: Registering OutputCommitCoordinator

19/07/10 11:36:53 INFO Utils: Successfully started service 'SparkUI' on port 4040.

19/07/10 11:36:53 INFO SparkUI: Bound SparkUI to 0.0.0.0, and started at http://192.168.31.160:4040

19/07/10 11:36:54 INFO Executor: Starting executor ID driver on host localhost

19/07/10 11:36:54 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 34263.

19/07/10 11:36:54 INFO NettyBlockTransferService: Server created on 192.168.31.160:34263

19/07/10 11:36:54 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

19/07/10 11:36:54 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, 192.168.31.160, 34263, None)

19/07/10 11:36:54 INFO BlockManagerMasterEndpoint: Registering block manager 192.168.31.160:34263 with 345.0 MB RAM, BlockManagerId(driver, 192.168.31.160, 34263, None)

19/07/10 11:36:54 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, 192.168.31.160, 34263, None)

19/07/10 11:36:54 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, 192.168.31.160, 34263, None)

19/07/10 11:36:57 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 214.6 KB, free 344.8 MB)

19/07/10 11:36:57 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 20.4 KB, free 344.8 MB)

19/07/10 11:36:57 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on 192.168.31.160:34263 (size: 20.4 KB, free: 345.0 MB)

19/07/10 11:36:57 INFO SparkContext: Created broadcast 0 from textFile at MyTest.scala:11

19/07/10 11:36:58 INFO FileInputFormat: Total input paths to process : 1

19/07/10 11:36:58 INFO SparkContext: Starting job: count at MyTest.scala:12

19/07/10 11:36:58 INFO DAGScheduler: Got job 0 (count at MyTest.scala:12) with 2 output partitions

19/07/10 11:36:58 INFO DAGScheduler: Final stage: ResultStage 0 (count at MyTest.scala:12)

19/07/10 11:36:58 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:36:58 INFO DAGScheduler: Missing parents: List()

19/07/10 11:36:58 INFO DAGScheduler: Submitting ResultStage 0 (MapPartitionsRDD[3] at filter at MyTest.scala:12), which has no missing parents

19/07/10 11:36:58 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 3.7 KB, free 344.8 MB)

19/07/10 11:36:58 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 2.1 KB, free 344.8 MB)

19/07/10 11:36:58 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 192.168.31.160:34263 (size: 2.1 KB, free: 345.0 MB)

19/07/10 11:36:58 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1161

19/07/10 11:36:58 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 0 (MapPartitionsRDD[3] at filter at MyTest.scala:12) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:36:58 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks

19/07/10 11:36:58 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0, localhost, executor driver, partition 0, PROCESS_LOCAL, 7883 bytes)

19/07/10 11:36:58 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1, localhost, executor driver, partition 1, PROCESS_LOCAL, 7883 bytes)

19/07/10 11:36:58 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

19/07/10 11:36:58 INFO Executor: Running task 1.0 in stage 0.0 (TID 1)

19/07/10 11:36:59 INFO HadoopRDD: Input split: file:/home/zyr/file.txt:0+29

19/07/10 11:36:59 INFO HadoopRDD: Input split: file:/home/zyr/file.txt:29+29

19/07/10 11:36:59 INFO MemoryStore: Block rdd_1_0 stored as values in memory (estimated size 192.0 B, free 344.8 MB)

19/07/10 11:36:59 INFO MemoryStore: Block rdd_1_1 stored as values in memory (estimated size 96.0 B, free 344.8 MB)

19/07/10 11:36:59 INFO BlockManagerInfo: Added rdd_1_1 in memory on 192.168.31.160:34263 (size: 96.0 B, free: 345.0 MB)

19/07/10 11:36:59 INFO BlockManagerInfo: Added rdd_1_0 in memory on 192.168.31.160:34263 (size: 192.0 B, free: 345.0 MB)

19/07/10 11:36:59 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 875 bytes result sent to driver

19/07/10 11:36:59 INFO Executor: Finished task 1.0 in stage 0.0 (TID 1). 875 bytes result sent to driver

19/07/10 11:36:59 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 635 ms on localhost (executor driver) (1/2)

19/07/10 11:36:59 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 593 ms on localhost (executor driver) (2/2)

19/07/10 11:36:59 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

19/07/10 11:36:59 INFO DAGScheduler: ResultStage 0 (count at MyTest.scala:12) finished in 1.203 s

19/07/10 11:36:59 INFO DAGScheduler: Job 0 finished: count at MyTest.scala:12, took 1.438576 s

Words with a : 11

19/07/10 11:36:59 INFO SparkUI: Stopped Spark web UI at http://192.168.31.160:4040

19/07/10 11:36:59 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

19/07/10 11:36:59 INFO MemoryStore: MemoryStore cleared

19/07/10 11:36:59 INFO BlockManager: BlockManager stopped

19/07/10 11:36:59 INFO BlockManagerMaster: BlockManagerMaster stopped

19/07/10 11:36:59 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

19/07/10 11:36:59 INFO SparkContext: Successfully stopped SparkContext

19/07/10 11:36:59 INFO ShutdownHookManager: Shutdown hook called

19/07/10 11:36:59 INFO ShutdownHookManager: Deleting directory /tmp/spark-e07b1de0-0ac0-4abe-952a-504c2c7282fd

Process finished with exit code 0

Second program:

    package com.kmeans

    import org.apache.spark.mllib.clustering.KMeans
    import org.apache.spark.mllib.linalg.Vectors
    import org.apache.spark.{SparkConf, SparkContext}

    /**
     * K-means clustering in Scala: assign points in 3-D space to clusters.
     *
     * Test data (x y z):
     *   0.0 0.0 0.0
     *   0.1 0.1 0.1
     *   0.2 0.2 0.2
     *   9.0 9.0 9.0
     *   9.1 9.1 9.1
     *   9.2 9.2 9.2
     */
    object Kmeans {
      def main(args: Array[String]): Unit = {
        val conf = new SparkConf().setAppName("Simple Application").setMaster("local[2]")
        val context = new SparkContext(conf)

        val dataSourceRDD = context.textFile("file:///home/zyr/kmeanstest.txt").cache()
        // Parse each line "x y z" into a dense vector.
        val trainRDD = dataSourceRDD.map(lines => Vectors.dense(lines.split(" ").map(_.toDouble)))
        // trainRDD.foreach(println)

        // Train the model.
        // Argument 1: training data (an RDD of Vectors)
        // Argument 2: number of clusters (k)
        // Argument 3: maximum number of iterations
        val model = KMeans.train(trainRDD, 3, 30)

        // Get and print the cluster centers of the model.
        val clustercenters = model.clusterCenters
        clustercenters.foreach(println)

        // Compute the error: the sum of squared distances from each point
        // to its nearest center. The label "误差为:" means "the error is:".
        val cross = model.computeCost(trainRDD)
        println("误差为:" + cross)

        // Use the model to predict the cluster of some test points.
        val res1 = model.predict(Vectors.dense("0.2 0.2 0.2".split(' ').map(_.toDouble)))
        val res2 = model.predict(Vectors.dense("0.25 0.25 0.25".split(' ').map(_.toDouble)))
        val res3 = model.predict(Vectors.dense("0.1 0.1 0.1".split(' ').map(_.toDouble)))
        val res4 = model.predict(Vectors.dense("9 9 9".split(' ').map(_.toDouble)))
        val res5 = model.predict(Vectors.dense("9.1 9.1 9.1".split(' ').map(_.toDouble)))
        val res6 = model.predict(Vectors.dense("9.06 9.06 9.06".split(' ').map(_.toDouble)))
        // println("Predictions:\r\n" + res1 + "\r\n" + res2 + "\r\n" + res3 + "\r\n" + res4 + "\r\n" + res5 + "\r\n" + res6)

        /**
         * The three cluster centers:
         * [9.1,9.1,9.1]
         * [0.05,0.05,0.05]
         * [0.2,0.2,0.2]
         * The predicted cluster indices for res1 to res6:
         * 2
         * 2
         * 1
         * 0
         * 0
         * 0
         * Each input is assigned to the cluster whose center it is closest to.
         */

        // Predict on the original training data as a check.
        val crossPredictRes = dataSourceRDD.map { lines =>
          val lineVectors = Vectors.dense(lines.split(" ").map(_.toDouble))
          val predictRes = model.predict(lineVectors)
          lineVectors + "==>" + predictRes
        }
        crossPredictRes.foreach(println)

        /**
         * [9.0,9.0,9.0]==>0
         * [9.1,9.1,9.1]==>0
         * [9.2,9.2,9.2]==>0
         * [0.0,0.0,0.0]==>1
         * [0.1,0.1,0.1]==>1
         * [0.2,0.2,0.2]==>2
         */

        context.stop()
      }
    }

Input file (/home/zyr/kmeanstest.txt):

    0.0 0.0 0.0
    0.1 0.1 0.1
    0.2 0.2 0.2
    9.0 9.0 9.0
    9.1 9.1 9.1
    9.2 9.2 9.2
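The rule noted in the program's comments, that a point belongs to the cluster whose center is nearest, can be illustrated without Spark. The sketch below is plain Scala and is not MLlib's implementation. `NearestCenter` and its members are hypothetical names, and the three centers are copied from the results recorded in the program's comments (cluster numbering in a real run depends on initialization, so the indices may differ between runs). The `cost` helper sums squared distances to the nearest center, which is the quantity MLlib documents for `computeCost`.

```scala
object NearestCenter {
  type Point = Array[Double]

  // Centers as recorded in the program's comments (assumed, not recomputed here).
  val reportedCenters: Seq[Point] =
    Seq(Array(9.1, 9.1, 9.1), Array(0.05, 0.05, 0.05), Array(0.2, 0.2, 0.2))

  // The six rows of the input file.
  val trainingPoints: Seq[Point] =
    Seq(0.0, 0.1, 0.2, 9.0, 9.1, 9.2).map(v => Array(v, v, v))

  // Squared Euclidean distance between two points of equal dimension.
  def squaredDistance(a: Point, b: Point): Double =
    a.zip(b).map { case (x, y) => (x - y) * (x - y) }.sum

  // Index of the center closest to p.
  def predict(centers: Seq[Point], p: Point): Int =
    centers.indices.minBy(i => squaredDistance(centers(i), p))

  // Sum of squared distances from each point to its nearest center.
  def cost(centers: Seq[Point], points: Seq[Point]): Double =
    points.map(p => squaredDistance(centers(predict(centers, p)), p)).sum

  def main(args: Array[String]): Unit = {
    // The same six query points used for res1 to res6 in the program.
    val queries = Seq(0.2, 0.25, 0.1, 9.0, 9.1, 9.06).map(v => Array(v, v, v))
    queries.foreach { q =>
      println(q.mkString("[", ",", "]") + "==>" + predict(reportedCenters, q))
    }
    println("cost on training points: " + cost(reportedCenters, trainingPoints))
  }
}
```

With these centers, the six query points map to clusters 2, 2, 1, 0, 0, 0, which agrees with the values recorded in the program's comments.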

Output:

/usr/lib/jvm/java-8-openjdk-amd64/bin/java -javaagent:/usr/local/idea/lib/idea_rt.jar=34781:/usr/local/idea/bin -Dfile.encoding=UTF-8 -classpath /usr/lib/jvm/java-8-openjdk-amd64/jre/lib/charsets.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/cldrdata.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/dnsns.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/icedtea-sound.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/jaccess.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/java-atk-wrapper.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/localedata.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/nashorn.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunec.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunjce_provider.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/sunpkcs11.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/ext/zipfs.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/jce.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/jsse.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/management-agent.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/resources.jar:/usr/lib/jvm/java-8-openjdk-amd64/jre/lib/rt.jar:/home/zyr/IdeaProjects/myspark/target/classes:/home/zyr/.m2/repository/org/scala-lang/scala-reflect/2.11.0/scala-reflect-2.11.0.jar:/home/zyr/.m2/repository/org/scala-lang/scala-library/2.11.0/scala-library-2.11.0.jar:/home/zyr/.m2/repository/org/apache/spark/spark-mllib_2.11/2.4.3/spark-mllib_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/scala-lang/modules/scala-parser-combinators_2.11/1.1.0/scala-parser-combinators_2.11-1.1.0.jar:/home/zyr/.m2/repository/org/scala-lang/scala-library/2.11.12/scala-library-2.11.12.jar:/home/zyr/.m2/repository/org/apache/spark/spark-core_2.11/2.4.3/spark-core_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/thoughtworks/paranamer/paranamer/2.8/paranamer-2.8.jar:/home/zyr/.m2/repository/org/apache/avro/avro/1.8.2/avro-1.8.2.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-core-asl/1.9.13/jackson-core-asl-1.9.13.jar:/home/zyr
/.m2/repository/org/codehaus/jackson/jackson-mapper-asl/1.9.13/jackson-mapper-asl-1.9.13.jar:/home/zyr/.m2/repository/org/apache/commons/commons-compress/1.8.1/commons-compress-1.8.1.jar:/home/zyr/.m2/repository/org/tukaani/xz/1.5/xz-1.5.jar:/home/zyr/.m2/repository/org/apache/avro/avro-mapred/1.8.2/avro-mapred-1.8.2-hadoop2.jar:/home/zyr/.m2/repository/org/apache/avro/avro-ipc/1.8.2/avro-ipc-1.8.2.jar:/home/zyr/.m2/repository/commons-codec/commons-codec/1.9/commons-codec-1.9.jar:/home/zyr/.m2/repository/com/twitter/chill_2.11/0.9.3/chill_2.11-0.9.3.jar:/home/zyr/.m2/repository/com/esotericsoftware/kryo-shaded/4.0.2/kryo-shaded-4.0.2.jar:/home/zyr/.m2/repository/com/esotericsoftware/minlog/1.3.0/minlog-1.3.0.jar:/home/zyr/.m2/repository/org/objenesis/objenesis/2.5.1/objenesis-2.5.1.jar:/home/zyr/.m2/repository/com/twitter/chill-java/0.9.3/chill-java-0.9.3.jar:/home/zyr/.m2/repository/org/apache/xbean/xbean-asm6-shaded/4.8/xbean-asm6-shaded-4.8.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-client/2.6.5/hadoop-client-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-common/2.6.5/hadoop-common-2.6.5.jar:/home/zyr/.m2/repository/commons-cli/commons-cli/1.2/commons-cli-1.2.jar:/home/zyr/.m2/repository/xmlenc/xmlenc/0.52/xmlenc-0.52.jar:/home/zyr/.m2/repository/commons-httpclient/commons-httpclient/3.1/commons-httpclient-3.1.jar:/home/zyr/.m2/repository/commons-io/commons-io/2.4/commons-io-2.4.jar:/home/zyr/.m2/repository/commons-collections/commons-collections/3.2.2/commons-collections-3.2.2.jar:/home/zyr/.m2/repository/commons-configuration/commons-configuration/1.6/commons-configuration-1.6.jar:/home/zyr/.m2/repository/commons-digester/commons-digester/1.8/commons-digester-1.8.jar:/home/zyr/.m2/repository/commons-beanutils/commons-beanutils/1.7.0/commons-beanutils-1.7.0.jar:/home/zyr/.m2/repository/com/google/code/gson/gson/2.2.4/gson-2.2.4.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-auth/2.6.5/hadoop-auth-2.6.5.jar:/home/zyr/.m2/repository/
org/apache/httpcomponents/httpclient/4.2.5/httpclient-4.2.5.jar:/home/zyr/.m2/repository/org/apache/httpcomponents/httpcore/4.2.4/httpcore-4.2.4.jar:/home/zyr/.m2/repository/org/apache/directory/server/apacheds-kerberos-codec/2.0.0-M15/apacheds-kerberos-codec-2.0.0-M15.jar:/home/zyr/.m2/repository/org/apache/directory/server/apacheds-i18n/2.0.0-M15/apacheds-i18n-2.0.0-M15.jar:/home/zyr/.m2/repository/org/apache/directory/api/api-asn1-api/1.0.0-M20/api-asn1-api-1.0.0-M20.jar:/home/zyr/.m2/repository/org/apache/directory/api/api-util/1.0.0-M20/api-util-1.0.0-M20.jar:/home/zyr/.m2/repository/org/apache/curator/curator-client/2.6.0/curator-client-2.6.0.jar:/home/zyr/.m2/repository/org/htrace/htrace-core/3.0.4/htrace-core-3.0.4.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-hdfs/2.6.5/hadoop-hdfs-2.6.5.jar:/home/zyr/.m2/repository/org/mortbay/jetty/jetty-util/6.1.26/jetty-util-6.1.26.jar:/home/zyr/.m2/repository/xerces/xercesImpl/2.9.1/xercesImpl-2.9.1.jar:/home/zyr/.m2/repository/xml-apis/xml-apis/1.3.04/xml-apis-1.3.04.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-app/2.6.5/hadoop-mapreduce-client-app-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-common/2.6.5/hadoop-mapreduce-client-common-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-client/2.6.5/hadoop-yarn-client-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-server-common/2.6.5/hadoop-yarn-server-common-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-shuffle/2.6.5/hadoop-mapreduce-client-shuffle-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-api/2.6.5/hadoop-yarn-api-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-core/2.6.5/hadoop-mapreduce-client-core-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-yarn-common/2.6.5/hadoop-yarn-common-2.6.5.jar:/home/zyr/.m2/repository/javax/xml/bind/jaxb-api/2.2.2/jaxb-api-2.2.2.jar:/home/zyr/.
m2/repository/javax/xml/stream/stax-api/1.0-2/stax-api-1.0-2.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-jaxrs/1.9.13/jackson-jaxrs-1.9.13.jar:/home/zyr/.m2/repository/org/codehaus/jackson/jackson-xc/1.9.13/jackson-xc-1.9.13.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-mapreduce-client-jobclient/2.6.5/hadoop-mapreduce-client-jobclient-2.6.5.jar:/home/zyr/.m2/repository/org/apache/hadoop/hadoop-annotations/2.6.5/hadoop-annotations-2.6.5.jar:/home/zyr/.m2/repository/org/apache/spark/spark-launcher_2.11/2.4.3/spark-launcher_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-kvstore_2.11/2.4.3/spark-kvstore_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/fusesource/leveldbjni/leveldbjni-all/1.8/leveldbjni-all-1.8.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-core/2.6.7/jackson-core-2.6.7.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-annotations/2.6.7/jackson-annotations-2.6.7.jar:/home/zyr/.m2/repository/org/apache/spark/spark-network-common_2.11/2.4.3/spark-network-common_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-network-shuffle_2.11/2.4.3/spark-network-shuffle_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-unsafe_2.11/2.4.3/spark-unsafe_2.11-2.4.3.jar:/home/zyr/.m2/repository/javax/activation/activation/1.1.1/activation-1.1.1.jar:/home/zyr/.m2/repository/org/apache/curator/curator-recipes/2.6.0/curator-recipes-2.6.0.jar:/home/zyr/.m2/repository/org/apache/curator/curator-framework/2.6.0/curator-framework-2.6.0.jar:/home/zyr/.m2/repository/com/google/guava/guava/16.0.1/guava-16.0.1.jar:/home/zyr/.m2/repository/org/apache/zookeeper/zookeeper/3.4.6/zookeeper-3.4.6.jar:/home/zyr/.m2/repository/javax/servlet/javax.servlet-api/3.1.0/javax.servlet-api-3.1.0.jar:/home/zyr/.m2/repository/org/apache/commons/commons-lang3/3.5/commons-lang3-3.5.jar:/home/zyr/.m2/repository/com/google/code/findbugs/jsr305/1.3.9/jsr305-1.3.9.jar:/home/zyr/.m2/repository/org/slf4j/slf4j-api/1.7.16
/slf4j-api-1.7.16.jar:/home/zyr/.m2/repository/org/slf4j/jul-to-slf4j/1.7.16/jul-to-slf4j-1.7.16.jar:/home/zyr/.m2/repository/org/slf4j/jcl-over-slf4j/1.7.16/jcl-over-slf4j-1.7.16.jar:/home/zyr/.m2/repository/log4j/log4j/1.2.17/log4j-1.2.17.jar:/home/zyr/.m2/repository/org/slf4j/slf4j-log4j12/1.7.16/slf4j-log4j12-1.7.16.jar:/home/zyr/.m2/repository/com/ning/compress-lzf/1.0.3/compress-lzf-1.0.3.jar:/home/zyr/.m2/repository/org/xerial/snappy/snappy-java/1.1.7.3/snappy-java-1.1.7.3.jar:/home/zyr/.m2/repository/org/lz4/lz4-java/1.4.0/lz4-java-1.4.0.jar:/home/zyr/.m2/repository/com/github/luben/zstd-jni/1.3.2-2/zstd-jni-1.3.2-2.jar:/home/zyr/.m2/repository/org/roaringbitmap/RoaringBitmap/0.7.45/RoaringBitmap-0.7.45.jar:/home/zyr/.m2/repository/org/roaringbitmap/shims/0.7.45/shims-0.7.45.jar:/home/zyr/.m2/repository/commons-net/commons-net/3.1/commons-net-3.1.jar:/home/zyr/.m2/repository/org/json4s/json4s-jackson_2.11/3.5.3/json4s-jackson_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-core_2.11/3.5.3/json4s-core_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-ast_2.11/3.5.3/json4s-ast_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/json4s/json4s-scalap_2.11/3.5.3/json4s-scalap_2.11-3.5.3.jar:/home/zyr/.m2/repository/org/scala-lang/modules/scala-xml_2.11/1.0.6/scala-xml_2.11-1.0.6.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-client/2.22.2/jersey-client-2.22.2.jar:/home/zyr/.m2/repository/javax/ws/rs/javax.ws.rs-api/2.0.1/javax.ws.rs-api-2.0.1.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-api/2.4.0-b34/hk2-api-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-utils/2.4.0-b34/hk2-utils-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/external/aopalliance-repackaged/2.4.0-b34/aopalliance-repackaged-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/external/javax.inject/2.4.0-b34/javax.inject-2.4.0-b34.jar:/home/zyr/.m2/repository/org/glassfish/hk2/hk2-locator/2.4.0-b34/hk2-locator-2.4.0-b34.jar:/home/zyr/.m2/re
pository/org/javassist/javassist/3.18.1-GA/javassist-3.18.1-GA.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-common/2.22.2/jersey-common-2.22.2.jar:/home/zyr/.m2/repository/javax/annotation/javax.annotation-api/1.2/javax.annotation-api-1.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/bundles/repackaged/jersey-guava/2.22.2/jersey-guava-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/hk2/osgi-resource-locator/1.0.1/osgi-resource-locator-1.0.1.jar:/home/zyr/.m2/repository/org/glassfish/jersey/core/jersey-server/2.22.2/jersey-server-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/media/jersey-media-jaxb/2.22.2/jersey-media-jaxb-2.22.2.jar:/home/zyr/.m2/repository/javax/validation/validation-api/1.1.0.Final/validation-api-1.1.0.Final.jar:/home/zyr/.m2/repository/org/glassfish/jersey/containers/jersey-container-servlet/2.22.2/jersey-container-servlet-2.22.2.jar:/home/zyr/.m2/repository/org/glassfish/jersey/containers/jersey-container-servlet-core/2.22.2/jersey-container-servlet-core-2.22.2.jar:/home/zyr/.m2/repository/io/netty/netty-all/4.1.17.Final/netty-all-4.1.17.Final.jar:/home/zyr/.m2/repository/io/netty/netty/3.9.9.Final/netty-3.9.9.Final.jar:/home/zyr/.m2/repository/com/clearspring/analytics/stream/2.7.0/stream-2.7.0.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-core/3.1.5/metrics-core-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-jvm/3.1.5/metrics-jvm-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-json/3.1.5/metrics-json-3.1.5.jar:/home/zyr/.m2/repository/io/dropwizard/metrics/metrics-graphite/3.1.5/metrics-graphite-3.1.5.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.6.7.1/jackson-databind-2.6.7.1.jar:/home/zyr/.m2/repository/com/fasterxml/jackson/module/jackson-module-scala_2.11/2.6.7.1/jackson-module-scala_2.11-2.6.7.1.jar:/home/zyr/.m2/repository/org/scala-lang/scala-reflect/2.11.8/scala-reflect-2.11.8.jar:/home/zyr/.m2/repository/com/fasterxml/jackson
/module/jackson-module-paranamer/2.7.9/jackson-module-paranamer-2.7.9.jar:/home/zyr/.m2/repository/org/apache/ivy/ivy/2.4.0/ivy-2.4.0.jar:/home/zyr/.m2/repository/oro/oro/2.0.8/oro-2.0.8.jar:/home/zyr/.m2/repository/net/razorvine/pyrolite/4.13/pyrolite-4.13.jar:/home/zyr/.m2/repository/net/sf/py4j/py4j/0.10.7/py4j-0.10.7.jar:/home/zyr/.m2/repository/org/apache/commons/commons-crypto/1.0.0/commons-crypto-1.0.0.jar:/home/zyr/.m2/repository/org/apache/spark/spark-streaming_2.11/2.4.3/spark-streaming_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-sql_2.11/2.4.3/spark-sql_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/univocity/univocity-parsers/2.7.3/univocity-parsers-2.7.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-sketch_2.11/2.4.3/spark-sketch_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/apache/spark/spark-catalyst_2.11/2.4.3/spark-catalyst_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/codehaus/janino/janino/3.0.9/janino-3.0.9.jar:/home/zyr/.m2/repository/org/codehaus/janino/commons-compiler/3.0.9/commons-compiler-3.0.9.jar:/home/zyr/.m2/repository/org/antlr/antlr4-runtime/4.7/antlr4-runtime-4.7.jar:/home/zyr/.m2/repository/org/apache/orc/orc-core/1.5.5/orc-core-1.5.5-nohive.jar:/home/zyr/.m2/repository/org/apache/orc/orc-shims/1.5.5/orc-shims-1.5.5.jar:/home/zyr/.m2/repository/com/google/protobuf/protobuf-java/2.5.0/protobuf-java-2.5.0.jar:/home/zyr/.m2/repository/commons-lang/commons-lang/2.6/commons-lang-2.6.jar:/home/zyr/.m2/repository/io/airlift/aircompressor/0.10/aircompressor-0.10.jar:/home/zyr/.m2/repository/org/apache/orc/orc-mapreduce/1.5.5/orc-mapreduce-1.5.5-nohive.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-column/1.10.1/parquet-column-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-common/1.10.1/parquet-common-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-encoding/1.10.1/parquet-encoding-1.10.1.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-hadoop/1.10.1/parquet-hadoop-1.10.1.jar:/h
ome/zyr/.m2/repository/org/apache/parquet/parquet-format/2.4.0/parquet-format-2.4.0.jar:/home/zyr/.m2/repository/org/apache/parquet/parquet-jackson/1.10.1/parquet-jackson-1.10.1.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-vector/0.10.0/arrow-vector-0.10.0.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-format/0.10.0/arrow-format-0.10.0.jar:/home/zyr/.m2/repository/org/apache/arrow/arrow-memory/0.10.0/arrow-memory-0.10.0.jar:/home/zyr/.m2/repository/joda-time/joda-time/2.9.9/joda-time-2.9.9.jar:/home/zyr/.m2/repository/com/carrotsearch/hppc/0.7.2/hppc-0.7.2.jar:/home/zyr/.m2/repository/com/vlkan/flatbuffers/1.2.0-3f79e055/flatbuffers-1.2.0-3f79e055.jar:/home/zyr/.m2/repository/org/apache/spark/spark-graphx_2.11/2.4.3/spark-graphx_2.11-2.4.3.jar:/home/zyr/.m2/repository/com/github/fommil/netlib/core/1.1.2/core-1.1.2.jar:/home/zyr/.m2/repository/net/sourceforge/f2j/arpack_combined_all/0.1/arpack_combined_all-0.1.jar:/home/zyr/.m2/repository/org/apache/spark/spark-mllib-local_2.11/2.4.3/spark-mllib-local_2.11-2.4.3.jar:/home/zyr/.m2/repository/org/scalanlp/breeze_2.11/0.13.2/breeze_2.11-0.13.2.jar:/home/zyr/.m2/repository/org/scalanlp/breeze-macros_2.11/0.13.2/breeze-macros_2.11-0.13.2.jar:/home/zyr/.m2/repository/net/sf/opencsv/opencsv/2.3/opencsv-2.3.jar:/home/zyr/.m2/repository/com/github/rwl/jtransforms/2.4.0/jtransforms-2.4.0.jar:/home/zyr/.m2/repository/org/spire-math/spire_2.11/0.13.0/spire_2.11-0.13.0.jar:/home/zyr/.m2/repository/org/spire-math/spire-macros_2.11/0.13.0/spire-macros_2.11-0.13.0.jar:/home/zyr/.m2/repository/org/typelevel/machinist_2.11/0.6.1/machinist_2.11-0.6.1.jar:/home/zyr/.m2/repository/com/chuusai/shapeless_2.11/2.3.2/shapeless_2.11-2.3.2.jar:/home/zyr/.m2/repository/org/typelevel/macro-compat_2.11/1.1.1/macro-compat_2.11-1.1.1.jar:/home/zyr/.m2/repository/org/apache/commons/commons-math3/3.4.1/commons-math3-3.4.1.jar:/home/zyr/.m2/repository/org/apache/spark/spark-tags_2.11/2.4.3/spark-tags_2.11-2.4.3.jar:/home/zyr/.m2/repository
/org/spark-project/spark/unused/1.0.0/unused-1.0.0.jar com.kmeans.Kmeans

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

19/07/10 11:40:11 WARN Utils: Your hostname, zyrpc resolves to a loopback address: 127.0.1.1; using 192.168.31.160 instead (on interface ens33)

19/07/10 11:40:11 WARN Utils: Set SPARK_LOCAL_IP if you need to bind to another address

19/07/10 11:40:11 INFO SparkContext: Running Spark version 2.4.3

19/07/10 11:40:13 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

19/07/10 11:40:14 INFO SparkContext: Submitted application: Simple Application

19/07/10 11:40:15 INFO SecurityManager: Changing view acls to: zyr

19/07/10 11:40:15 INFO SecurityManager: Changing modify acls to: zyr

19/07/10 11:40:15 INFO SecurityManager: Changing view acls groups to:

19/07/10 11:40:15 INFO SecurityManager: Changing modify acls groups to:

19/07/10 11:40:15 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(zyr); groups with view permissions: Set(); users with modify permissions: Set(zyr); groups with modify permissions: Set()

19/07/10 11:40:17 INFO Utils: Successfully started service 'sparkDriver' on port 45437.

19/07/10 11:40:17 INFO SparkEnv: Registering MapOutputTracker

19/07/10 11:40:18 INFO SparkEnv: Registering BlockManagerMaster

19/07/10 11:40:18 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

19/07/10 11:40:18 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

19/07/10 11:40:18 INFO DiskBlockManager: Created local directory at /tmp/blockmgr-2d502b3d-b275-49f2-9660-e1310680f61d

19/07/10 11:40:18 INFO MemoryStore: MemoryStore started with capacity 345.0 MB

19/07/10 11:40:18 INFO SparkEnv: Registering OutputCommitCoordinator

19/07/10 11:40:20 INFO Utils: Successfully started service 'SparkUI' on port 4040.

19/07/10 11:40:20 INFO SparkUI: Bound SparkUI to 0.0.0.0, and started at http://192.168.31.160:4040

19/07/10 11:40:21 INFO Executor: Starting executor ID driver on host localhost

19/07/10 11:40:22 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 44595.

19/07/10 11:40:22 INFO NettyBlockTransferService: Server created on 192.168.31.160:44595

19/07/10 11:40:22 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

19/07/10 11:40:22 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, 192.168.31.160, 44595, None)

19/07/10 11:40:23 INFO BlockManagerMasterEndpoint: Registering block manager 192.168.31.160:44595 with 345.0 MB RAM, BlockManagerId(driver, 192.168.31.160, 44595, None)

19/07/10 11:40:23 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, 192.168.31.160, 44595, None)

19/07/10 11:40:23 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, 192.168.31.160, 44595, None)

19/07/10 11:40:25 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 214.6 KB, free 344.8 MB)

19/07/10 11:40:26 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 20.4 KB, free 344.8 MB)

19/07/10 11:40:26 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on 192.168.31.160:44595 (size: 20.4 KB, free: 345.0 MB)

19/07/10 11:40:26 INFO SparkContext: Created broadcast 0 from textFile at Kmeans.scala:25

19/07/10 11:40:26 WARN KMeans: The input data is not directly cached, which may hurt performance if its parent RDDs are also uncached.

19/07/10 11:40:26 INFO FileInputFormat: Total input paths to process : 1

19/07/10 11:40:26 INFO SparkContext: Starting job: takeSample at KMeans.scala:386

19/07/10 11:40:26 INFO DAGScheduler: Got job 0 (takeSample at KMeans.scala:386) with 2 output partitions

19/07/10 11:40:26 INFO DAGScheduler: Final stage: ResultStage 0 (takeSample at KMeans.scala:386)

19/07/10 11:40:26 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:26 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:26 INFO DAGScheduler: Submitting ResultStage 0 (MapPartitionsRDD[5] at map at KMeans.scala:248), which has no missing parents

19/07/10 11:40:27 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 4.3 KB, free 344.8 MB)

19/07/10 11:40:27 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 2.5 KB, free 344.8 MB)

19/07/10 11:40:27 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 192.168.31.160:44595 (size: 2.5 KB, free: 345.0 MB)

19/07/10 11:40:27 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:27 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 0 (MapPartitionsRDD[5] at map at KMeans.scala:248) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:27 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks

19/07/10 11:40:27 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0, localhost, executor driver, partition 0, PROCESS_LOCAL, 8200 bytes)

19/07/10 11:40:27 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1, localhost, executor driver, partition 1, PROCESS_LOCAL, 8200 bytes)

19/07/10 11:40:27 INFO Executor: Running task 1.0 in stage 0.0 (TID 1)

19/07/10 11:40:27 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

19/07/10 11:40:28 INFO HadoopRDD: Input split: file:/home/zyr/kmeanstest.txt:36+36

19/07/10 11:40:28 INFO HadoopRDD: Input split: file:/home/zyr/kmeanstest.txt:0+36

19/07/10 11:40:28 INFO MemoryStore: Block rdd_1_0 stored as values in memory (estimated size 288.0 B, free 344.8 MB)

19/07/10 11:40:28 INFO MemoryStore: Block rdd_1_1 stored as values in memory (estimated size 152.0 B, free 344.8 MB)

19/07/10 11:40:28 INFO BlockManagerInfo: Added rdd_1_0 in memory on 192.168.31.160:44595 (size: 288.0 B, free: 345.0 MB)

19/07/10 11:40:28 INFO BlockManagerInfo: Added rdd_1_1 in memory on 192.168.31.160:44595 (size: 152.0 B, free: 345.0 MB)

19/07/10 11:40:28 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:28 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:28 INFO MemoryStore: Block rdd_3_0 stored as values in memory (estimated size 48.0 B, free 344.8 MB)

19/07/10 11:40:28 INFO BlockManagerInfo: Added rdd_3_0 in memory on 192.168.31.160:44595 (size: 48.0 B, free: 345.0 MB)

19/07/10 11:40:28 INFO MemoryStore: Block rdd_3_1 stored as values in memory (estimated size 32.0 B, free 344.8 MB)

19/07/10 11:40:28 INFO BlockManagerInfo: Added rdd_3_1 in memory on 192.168.31.160:44595 (size: 32.0 B, free: 345.0 MB)

19/07/10 11:40:28 INFO Executor: Finished task 1.0 in stage 0.0 (TID 1). 875 bytes result sent to driver

19/07/10 11:40:28 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 875 bytes result sent to driver

19/07/10 11:40:28 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 837 ms on localhost (executor driver) (1/2)

19/07/10 11:40:28 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 942 ms on localhost (executor driver) (2/2)

19/07/10 11:40:28 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

19/07/10 11:40:28 INFO DAGScheduler: ResultStage 0 (takeSample at KMeans.scala:386) finished in 1.635 s

19/07/10 11:40:28 INFO DAGScheduler: Job 0 finished: takeSample at KMeans.scala:386, took 1.961204 s

19/07/10 11:40:28 INFO SparkContext: Starting job: takeSample at KMeans.scala:386

19/07/10 11:40:28 INFO DAGScheduler: Got job 1 (takeSample at KMeans.scala:386) with 2 output partitions

19/07/10 11:40:28 INFO DAGScheduler: Final stage: ResultStage 1 (takeSample at KMeans.scala:386)

19/07/10 11:40:28 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:28 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:28 INFO DAGScheduler: Submitting ResultStage 1 (PartitionwiseSampledRDD[7] at takeSample at KMeans.scala:386), which has no missing parents

19/07/10 11:40:28 INFO MemoryStore: Block broadcast_2 stored as values in memory (estimated size 5.1 KB, free 344.8 MB)

19/07/10 11:40:28 INFO MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 2.9 KB, free 344.8 MB)

19/07/10 11:40:28 INFO BlockManagerInfo: Added broadcast_2_piece0 in memory on 192.168.31.160:44595 (size: 2.9 KB, free: 345.0 MB)

19/07/10 11:40:28 INFO SparkContext: Created broadcast 2 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:28 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 1 (PartitionwiseSampledRDD[7] at takeSample at KMeans.scala:386) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:28 INFO TaskSchedulerImpl: Adding task set 1.0 with 2 tasks

19/07/10 11:40:28 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2, localhost, executor driver, partition 0, PROCESS_LOCAL, 8309 bytes)

19/07/10 11:40:28 INFO TaskSetManager: Starting task 1.0 in stage 1.0 (TID 3, localhost, executor driver, partition 1, PROCESS_LOCAL, 8309 bytes)

19/07/10 11:40:28 INFO Executor: Running task 0.0 in stage 1.0 (TID 2)

19/07/10 11:40:28 INFO Executor: Running task 1.0 in stage 1.0 (TID 3)

19/07/10 11:40:28 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:28 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:28 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:28 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:28 INFO Executor: Finished task 0.0 in stage 1.0 (TID 2). 1283 bytes result sent to driver

19/07/10 11:40:28 INFO Executor: Finished task 1.0 in stage 1.0 (TID 3). 1175 bytes result sent to driver

19/07/10 11:40:28 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 92 ms on localhost (executor driver) (1/2)

19/07/10 11:40:28 INFO TaskSetManager: Finished task 1.0 in stage 1.0 (TID 3) in 96 ms on localhost (executor driver) (2/2)

19/07/10 11:40:28 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

19/07/10 11:40:28 INFO DAGScheduler: ResultStage 1 (takeSample at KMeans.scala:386) finished in 0.132 s

19/07/10 11:40:28 INFO DAGScheduler: Job 1 finished: takeSample at KMeans.scala:386, took 0.153980 s

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_3 stored as values in memory (estimated size 144.0 B, free 344.8 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_3_piece0 stored as bytes in memory (estimated size 344.0 B, free 344.8 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_3_piece0 in memory on 192.168.31.160:44595 (size: 344.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO SparkContext: Created broadcast 3 from broadcast at KMeans.scala:400

19/07/10 11:40:29 INFO SparkContext: Starting job: sum at KMeans.scala:406

19/07/10 11:40:29 INFO DAGScheduler: Got job 2 (sum at KMeans.scala:406) with 2 output partitions

19/07/10 11:40:29 INFO DAGScheduler: Final stage: ResultStage 2 (sum at KMeans.scala:406)

19/07/10 11:40:29 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:29 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:29 INFO DAGScheduler: Submitting ResultStage 2 (MapPartitionsRDD[9] at map at KMeans.scala:403), which has no missing parents

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_4 stored as values in memory (estimated size 5.4 KB, free 344.7 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_4_piece0 stored as bytes in memory (estimated size 3.0 KB, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_4_piece0 in memory on 192.168.31.160:44595 (size: 3.0 KB, free: 345.0 MB)

19/07/10 11:40:29 INFO SparkContext: Created broadcast 4 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:29 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 2 (MapPartitionsRDD[9] at map at KMeans.scala:403) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:29 INFO TaskSchedulerImpl: Adding task set 2.0 with 2 tasks

19/07/10 11:40:29 INFO TaskSetManager: Starting task 0.0 in stage 2.0 (TID 4, localhost, executor driver, partition 0, PROCESS_LOCAL, 8232 bytes)

19/07/10 11:40:29 INFO TaskSetManager: Starting task 1.0 in stage 2.0 (TID 5, localhost, executor driver, partition 1, PROCESS_LOCAL, 8232 bytes)

19/07/10 11:40:29 INFO Executor: Running task 0.0 in stage 2.0 (TID 4)

19/07/10 11:40:29 INFO Executor: Running task 1.0 in stage 2.0 (TID 5)

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:29 WARN BLAS: Failed to load implementation from: com.github.fommil.netlib.NativeSystemBLAS

19/07/10 11:40:29 WARN BLAS: Failed to load implementation from: com.github.fommil.netlib.NativeRefBLAS

19/07/10 11:40:29 INFO MemoryStore: Block rdd_9_1 stored as values in memory (estimated size 32.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added rdd_9_1 in memory on 192.168.31.160:44595 (size: 32.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO Executor: Finished task 1.0 in stage 2.0 (TID 5). 834 bytes result sent to driver

19/07/10 11:40:29 INFO TaskSetManager: Finished task 1.0 in stage 2.0 (TID 5) in 149 ms on localhost (executor driver) (1/2)

19/07/10 11:40:29 INFO MemoryStore: Block rdd_9_0 stored as values in memory (estimated size 48.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added rdd_9_0 in memory on 192.168.31.160:44595 (size: 48.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO Executor: Finished task 0.0 in stage 2.0 (TID 4). 834 bytes result sent to driver

19/07/10 11:40:29 INFO TaskSetManager: Finished task 0.0 in stage 2.0 (TID 4) in 168 ms on localhost (executor driver) (2/2)

19/07/10 11:40:29 INFO TaskSchedulerImpl: Removed TaskSet 2.0, whose tasks have all completed, from pool

19/07/10 11:40:29 INFO DAGScheduler: ResultStage 2 (sum at KMeans.scala:406) finished in 0.199 s

19/07/10 11:40:29 INFO DAGScheduler: Job 2 finished: sum at KMeans.scala:406, took 0.221468 s

19/07/10 11:40:29 INFO MapPartitionsRDD: Removing RDD 6 from persistence list

19/07/10 11:40:29 INFO BlockManager: Removing RDD 6

19/07/10 11:40:29 INFO SparkContext: Starting job: collect at KMeans.scala:414

19/07/10 11:40:29 INFO DAGScheduler: Got job 3 (collect at KMeans.scala:414) with 2 output partitions

19/07/10 11:40:29 INFO DAGScheduler: Final stage: ResultStage 3 (collect at KMeans.scala:414)

19/07/10 11:40:29 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:29 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:29 INFO DAGScheduler: Submitting ResultStage 3 (MapPartitionsRDD[11] at mapPartitionsWithIndex at KMeans.scala:411), which has no missing parents

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_5 stored as values in memory (estimated size 6.1 KB, free 344.7 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_5_piece0 stored as bytes in memory (estimated size 3.3 KB, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_5_piece0 in memory on 192.168.31.160:44595 (size: 3.3 KB, free: 345.0 MB)

19/07/10 11:40:29 INFO SparkContext: Created broadcast 5 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:29 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 3 (MapPartitionsRDD[11] at mapPartitionsWithIndex at KMeans.scala:411) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:29 INFO TaskSchedulerImpl: Adding task set 3.0 with 2 tasks

19/07/10 11:40:29 INFO TaskSetManager: Starting task 0.0 in stage 3.0 (TID 6, localhost, executor driver, partition 0, PROCESS_LOCAL, 8264 bytes)

19/07/10 11:40:29 INFO TaskSetManager: Starting task 1.0 in stage 3.0 (TID 7, localhost, executor driver, partition 1, PROCESS_LOCAL, 8264 bytes)

19/07/10 11:40:29 INFO Executor: Running task 0.0 in stage 3.0 (TID 6)

19/07/10 11:40:29 INFO Executor: Running task 1.0 in stage 3.0 (TID 7)

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_9_0 locally

19/07/10 11:40:29 INFO Executor: Finished task 0.0 in stage 3.0 (TID 6). 1078 bytes result sent to driver

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:29 INFO TaskSetManager: Finished task 0.0 in stage 3.0 (TID 6) in 21 ms on localhost (executor driver) (1/2)

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_9_1 locally

19/07/10 11:40:29 INFO Executor: Finished task 1.0 in stage 3.0 (TID 7). 1132 bytes result sent to driver

19/07/10 11:40:29 INFO TaskSetManager: Finished task 1.0 in stage 3.0 (TID 7) in 31 ms on localhost (executor driver) (2/2)

19/07/10 11:40:29 INFO TaskSchedulerImpl: Removed TaskSet 3.0, whose tasks have all completed, from pool

19/07/10 11:40:29 INFO DAGScheduler: ResultStage 3 (collect at KMeans.scala:414) finished in 0.061 s

19/07/10 11:40:29 INFO DAGScheduler: Job 3 finished: collect at KMeans.scala:414, took 0.084564 s

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_6 stored as values in memory (estimated size 320.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_6_piece0 stored as bytes in memory (estimated size 426.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_6_piece0 in memory on 192.168.31.160:44595 (size: 426.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO SparkContext: Created broadcast 6 from broadcast at KMeans.scala:400

19/07/10 11:40:29 INFO SparkContext: Starting job: sum at KMeans.scala:406

19/07/10 11:40:29 INFO DAGScheduler: Got job 4 (sum at KMeans.scala:406) with 2 output partitions

19/07/10 11:40:29 INFO DAGScheduler: Final stage: ResultStage 4 (sum at KMeans.scala:406)

19/07/10 11:40:29 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:29 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:29 INFO DAGScheduler: Submitting ResultStage 4 (MapPartitionsRDD[13] at map at KMeans.scala:403), which has no missing parents

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_7 stored as values in memory (estimated size 5.7 KB, free 344.7 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_7_piece0 stored as bytes in memory (estimated size 3.1 KB, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_7_piece0 in memory on 192.168.31.160:44595 (size: 3.1 KB, free: 345.0 MB)

19/07/10 11:40:29 INFO SparkContext: Created broadcast 7 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:29 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 4 (MapPartitionsRDD[13] at map at KMeans.scala:403) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:29 INFO TaskSchedulerImpl: Adding task set 4.0 with 2 tasks

19/07/10 11:40:29 INFO TaskSetManager: Starting task 0.0 in stage 4.0 (TID 8, localhost, executor driver, partition 0, PROCESS_LOCAL, 8264 bytes)

19/07/10 11:40:29 INFO TaskSetManager: Starting task 1.0 in stage 4.0 (TID 9, localhost, executor driver, partition 1, PROCESS_LOCAL, 8264 bytes)

19/07/10 11:40:29 INFO Executor: Running task 0.0 in stage 4.0 (TID 8)

19/07/10 11:40:29 INFO Executor: Running task 1.0 in stage 4.0 (TID 9)

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_9_1 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:29 INFO BlockManager: Found block rdd_9_0 locally

19/07/10 11:40:29 INFO MemoryStore: Block rdd_13_1 stored as values in memory (estimated size 32.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added rdd_13_1 in memory on 192.168.31.160:44595 (size: 32.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO MemoryStore: Block rdd_13_0 stored as values in memory (estimated size 48.0 B, free 344.7 MB)

19/07/10 11:40:29 INFO Executor: Finished task 1.0 in stage 4.0 (TID 9). 834 bytes result sent to driver

19/07/10 11:40:29 INFO TaskSetManager: Finished task 1.0 in stage 4.0 (TID 9) in 64 ms on localhost (executor driver) (1/2)

19/07/10 11:40:29 INFO BlockManagerInfo: Added rdd_13_0 in memory on 192.168.31.160:44595 (size: 48.0 B, free: 345.0 MB)

19/07/10 11:40:29 INFO Executor: Finished task 0.0 in stage 4.0 (TID 8). 834 bytes result sent to driver

19/07/10 11:40:29 INFO TaskSetManager: Finished task 0.0 in stage 4.0 (TID 8) in 75 ms on localhost (executor driver) (2/2)

19/07/10 11:40:29 INFO TaskSchedulerImpl: Removed TaskSet 4.0, whose tasks have all completed, from pool

19/07/10 11:40:29 INFO DAGScheduler: ResultStage 4 (sum at KMeans.scala:406) finished in 0.108 s

19/07/10 11:40:29 INFO DAGScheduler: Job 4 finished: sum at KMeans.scala:406, took 0.129273 s

19/07/10 11:40:29 INFO MapPartitionsRDD: Removing RDD 9 from persistence list

19/07/10 11:40:29 INFO BlockManager: Removing RDD 9

19/07/10 11:40:29 INFO SparkContext: Starting job: collect at KMeans.scala:414

19/07/10 11:40:29 INFO DAGScheduler: Got job 5 (collect at KMeans.scala:414) with 2 output partitions

19/07/10 11:40:29 INFO DAGScheduler: Final stage: ResultStage 5 (collect at KMeans.scala:414)

19/07/10 11:40:29 INFO DAGScheduler: Parents of final stage: List()

19/07/10 11:40:29 INFO DAGScheduler: Missing parents: List()

19/07/10 11:40:29 INFO DAGScheduler: Submitting ResultStage 5 (MapPartitionsRDD[15] at mapPartitionsWithIndex at KMeans.scala:411), which has no missing parents

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_8 stored as values in memory (estimated size 6.4 KB, free 344.7 MB)

19/07/10 11:40:29 INFO MemoryStore: Block broadcast_8_piece0 stored as bytes in memory (estimated size 3.4 KB, free 344.7 MB)

19/07/10 11:40:29 INFO BlockManagerInfo: Added broadcast_8_piece0 in memory on 192.168.31.160:44595 (size: 3.4 KB, free: 345.0 MB)

19/07/10 11:40:30 INFO SparkContext: Created broadcast 8 from broadcast at DAGScheduler.scala:1161

19/07/10 11:40:30 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 5 (MapPartitionsRDD[15] at mapPartitionsWithIndex at KMeans.scala:411) (first 15 tasks are for partitions Vector(0, 1))

19/07/10 11:40:30 INFO TaskSchedulerImpl: Adding task set 5.0 with 2 tasks

19/07/10 11:40:30 INFO TaskSetManager: Starting task 0.0 in stage 5.0 (TID 10, localhost, executor driver, partition 0, PROCESS_LOCAL, 8296 bytes)

19/07/10 11:40:30 INFO TaskSetManager: Starting task 1.0 in stage 5.0 (TID 11, localhost, executor driver, partition 1, PROCESS_LOCAL, 8296 bytes)

19/07/10 11:40:30 INFO Executor: Running task 0.0 in stage 5.0 (TID 10)

19/07/10 11:40:30 INFO Executor: Running task 1.0 in stage 5.0 (TID 11)

19/07/10 11:40:30 INFO BlockManager: Found block rdd_1_1 locally

19/07/10 11:40:30 INFO BlockManager: Found block rdd_3_1 locally

19/07/10 11:40:30 INFO BlockManager: Found block rdd_1_0 locally

19/07/10 11:40:30 INFO BlockManager: Found block rdd_3_0 locally

19/07/10 11:40:30 INFO BlockManager: Found block rdd_13_0 locally

19/07/10 11:40:30 INFO Executor: Finished task 0.0 in stage 5.0 (TID 10). 1132 bytes result sent to driver

19/07/10 11:40:30 INFO BlockManager: Found block rdd_13_1 locally

19/07/10 11:40:30 INFO Executor: Finished task 1.0 in stage 5.0 (TID 11). 826 bytes result sent to driver

19/07/10 11:40:30 INFO TaskSetManager: Finished task 0.0 in stage 5.0 (TID 10) in 56 ms on localhost (executor driver) (1/2)

19/07/10 11:40:30 INFO TaskSetManager: Finished task 1.0 in stage 5.0 (TID 11) in 58 ms on localhost (executor driver) (2/2)

19/07/10 11:40:30 INFO TaskSchedulerImpl: Removed TaskSet 5.0, whose tasks have all completed, from pool

19/07/10 11:40:30 INFO DAGScheduler: ResultStage 5 (collect at KMeans.scala:414) finished in 0.147 s

19/07/10 11:40:30 INFO DAGScheduler: Job 5 finished: collect at KMeans.scala:414, took 0.178237 s

19/07/10 11:40:30 INFO MapPartitionsRDD: Removing RDD 13 from persistence list

19/07/10 11:40:30 INFO BlockManager: Removing RDD 13

19/07/10 11:40:30 INFO TorrentBroadcast: Destroying Broadcast(3) (from destroy at KMeans.scala:421)

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 27

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 42

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 51

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 124

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 32

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 72

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 47

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 84

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 36

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 73

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 46

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 25

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 130

19/07/10 11:40:30 INFO TorrentBroadcast: Destroying Broadcast(6) (from destroy at KMeans.scala:421)

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_3_piece0 on 192.168.31.160:44595 in memory (size: 344.0 B, free: 345.0 MB)

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_2_piece0 on 192.168.31.160:44595 in memory (size: 2.9 KB, free: 345.0 MB)

19/07/10 11:40:30 INFO MemoryStore: Block broadcast_9 stored as values in memory (estimated size 568.0 B, free 344.7 MB)

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_6_piece0 on 192.168.31.160:44595 in memory (size: 426.0 B, free: 345.0 MB)

19/07/10 11:40:30 INFO MemoryStore: Block broadcast_9_piece0 stored as bytes in memory (estimated size 529.0 B, free 344.7 MB)

19/07/10 11:40:30 INFO BlockManagerInfo: Added broadcast_9_piece0 in memory on 192.168.31.160:44595 (size: 529.0 B, free: 345.0 MB)

19/07/10 11:40:30 INFO SparkContext: Created broadcast 9 from broadcast at KMeans.scala:431

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 116

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 94

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 90

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 96

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 89

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 81

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 121

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 149

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 145

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 50

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_7_piece0 on 192.168.31.160:44595 in memory (size: 3.1 KB, free: 345.0 MB)

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 135

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 108

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_8_piece0 on 192.168.31.160:44595 in memory (size: 3.4 KB, free: 345.0 MB)

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 34

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 106

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 70

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 44

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 57

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 105

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 97

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 31

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 33

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 113

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 140

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 100

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 43

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 68

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 133

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 138

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 39

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 129

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 49

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 37

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 85

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 132

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 53

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 82

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 69

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 104

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 52

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 98

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 141

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 76

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 77

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 102

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 134

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 79

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 61

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 59

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 118

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 74

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 54

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 86

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 136

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 110

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 45

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 30

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 60

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 64

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 137

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 95

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 87

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 38

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 29

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 56

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 125

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 131

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 41

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 55

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 128

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 127

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 88

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 91

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 123

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 103

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 115

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 139

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 142

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 147

19/07/10 11:40:30 INFO BlockManagerInfo: Removed broadcast_5_piece0 on 192.168.31.160:44595 in memory (size: 3.3 KB, free: 345.0 MB)

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 26

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 48

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 78

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 93

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 40

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 35

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 63

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 75

19/07/10 11:40:30 INFO ContextCleaner: Cleaned accumulator 112