Diffusers中基于Stable Diffusion的哪些图像操作

2024-10-20 01:39:17

基于Stable Diffusion的哪些图像操作们:

- Text-To-Image generation:

StableDiffusionPipeline - Image-to-Image text guided generation:

StableDiffusionImg2ImgPipeline - In-painting:

StableDiffusionInpaintPipeline - text-guided image super-resolution:

StableDiffusionUpscalePipeline - generate variations from an input image:

StableDiffusionImageVariationPipeline - image editing by following text instructions:

StableDiffusionInstructPix2PixPipeline - ......

辅助函数

import requests

from PIL import Image

from io import BytesIO

def show_images(imgs, rows=1, cols=3):

assert len(imgs) == rows*cols

w_ori, h_ori = imgs[0].size

for img in imgs:

w_new, h_new = img.size

if w_new != w_ori or h_new != h_ori:

w_ori = max(w_ori, w_new)

h_ori = max(h_ori, h_new)

grid = Image.new('RGB', size=(cols*w_ori, rows*h_ori))

grid_w, grid_h = grid.size

for i, img in enumerate(imgs):

grid.paste(img, box=(i%cols*w_ori, i//cols*h_ori))

return grid

def download_image(url):

response = requests.get(url)

return Image.open(BytesIO(response.content)).convert("RGB")

Text-To-Image

根据文本生成图像,在diffusers使用StableDiffusionPipeline实现,必要输入为prompt,示例代码:

from diffusers import StableDiffusionPipeline

image_pipe = StableDiffusionPipeline.from_pretrained("CompVis/stable-diffusion-v1-4")

device = "cuda"

image_pipe.to(device)

prompt = ["a photograph of an astronaut riding a horse"] * 3

out_images = image_pipe(prompt).images

for i, out_image in enumerate(out_images):

out_image.save("astronaut_rides_horse" + str(i) + ".png")

示例输出:

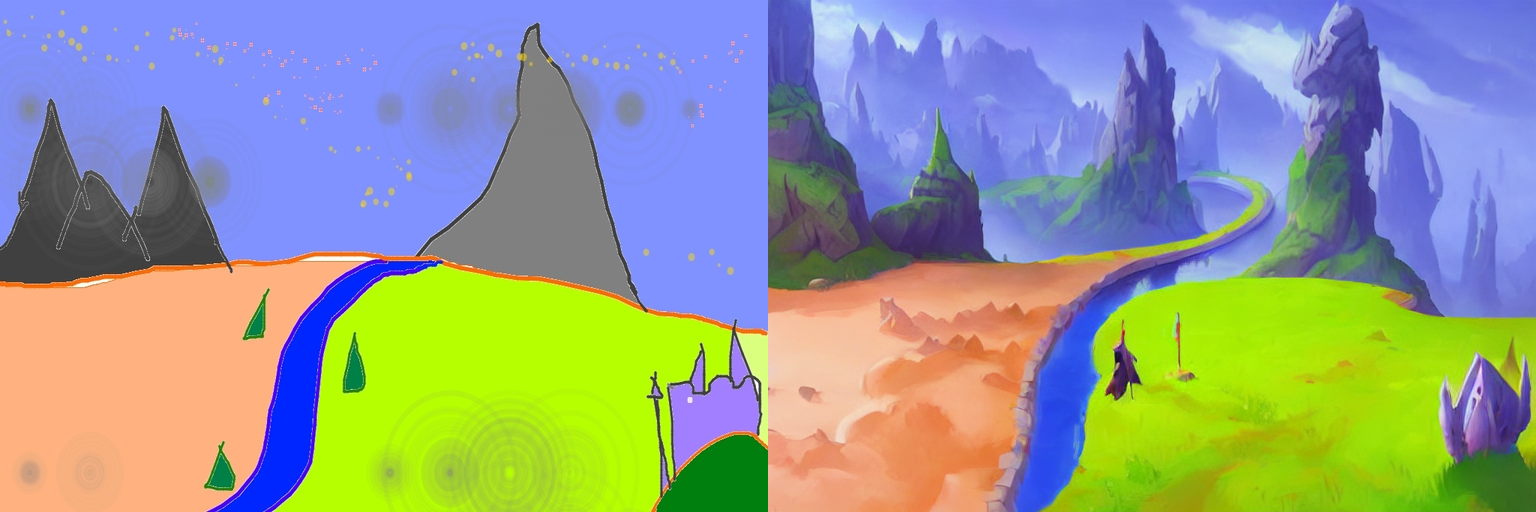

Image-To-Image

根据文本prompt和原始图像,生成新的图像。在diffusers中使用StableDiffusionImg2ImgPipeline类实现,可以看到,pipeline的必要输入有两个:prompt和init_image。示例代码:

import torch

from diffusers import StableDiffusionImg2ImgPipeline

device = "cuda"

model_id_or_path = "runwayml/stable-diffusion-v1-5"

pipe = StableDiffusionImg2ImgPipeline.from_pretrained(model_id_or_path, torch_dtype=torch.float16)

pipe = pipe.to(device)

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

init_image = download_image(url)

init_image = init_image.resize((768, 512))

prompt = "A fantasy landscape, trending on artstation"

images = pipe(prompt=prompt, image=init_image, strength=0.75, guidance_scale=7.5).images

grid_img = show_images([init_image, images[0]], 1, 2)

grid_img.save("fantasy_landscape.png")

示例输出:

In-painting

给定一个mask图像和一句提示,可编辑给定图像的特定部分。使用StableDiffusionInpaintPipeline来实现,输入包含三部分:原始图像,mask图像和一个prompt,

示例代码:

from diffusers import StableDiffusionInpaintPipeline

img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

init_image = download_image(img_url).resize((512, 512))

mask_image = download_image(mask_url).resize((512, 512))

pipe = StableDiffusionInpaintPipeline.from_pretrained("runwayml/stable-diffusion-inpainting", torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

images = pipe(prompt=prompt, image=init_image, mask_image=mask_image).images

grid_img = show_images([init_image, mask_image, images[0]], 1, 3)

grid_img.save("overture-creations.png")

示例输出:

Upscale

对低分辨率图像进行超分辨率,使用StableDiffusionUpscalePipeline来实现,必要输入为prompt和低分辨率图像(low-resolution image),示例代码:

from diffusers import StableDiffusionUpscalePipeline

# load model and scheduler

model_id = "stabilityai/stable-diffusion-x4-upscaler"

pipeline = StableDiffusionUpscalePipeline.from_pretrained(model_id, torch_dtype=torch.float16, cache_dir="./models/")

pipeline = pipeline.to("cuda")

# let's download an image

url = "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/sd2-upscale/low_res_cat.png"

low_res_img = download_image(url)

low_res_img = low_res_img.resize((128, 128))

prompt = "a white cat"

upscaled_image = pipeline(prompt=prompt, image=low_res_img).images[0]

grid_img = show_images([low_res_img, upscaled_image], 1, 2)

grid_img.save("a_white_cat.png")

print("low_res_img size: ", low_res_img.size)

print("upscaled_image size: ", upscaled_image.size)

示例输出,默认将一个128 x 128的小猫图像超分为一个512 x 512的:

默认是将原始尺寸的长和宽均放大四倍,即:

input: 128 x 128 ==> output: 512 x 512

input: 64 x 256 ==> output: 256 x 1024

...

个人感觉,prompt没有起什么作用,随便写吧。

关于此模型的详情,参考。

Instruct-Pix2Pix

根据输入的指令prompt对图像进行编辑,使用StableDiffusionInstructPix2PixPipeline来实现,必要输入包括prompt和image,示例代码如下:

import torch

from diffusers import StableDiffusionInstructPix2PixPipeline

model_id = "timbrooks/instruct-pix2pix"

pipe = StableDiffusionInstructPix2PixPipeline.from_pretrained(model_id, torch_dtype=torch.float16, cache_dir="./models/")

pipe = pipe.to("cuda")

url = "https://huggingface.co/datasets/diffusers/diffusers-images-docs/resolve/main/mountain.png"

image = download_image(url)

prompt = "make the mountains snowy"

images = pipe(prompt, image=image, num_inference_steps=20, image_guidance_scale=1.5, guidance_scale=7).images

grid_img = show_images([image, images[0]], 1, 2)

grid_img.save("snowy_mountains.png")

示例输出:

最新文章

- activity通过onActivityResult间数据交互

- JAVA中的枚举小结

- Java开发环境的配置

- android中的事件传递和处理机制

- 《mysql数据库备份小脚本》(转)

- [iOS基础控件 - 6.12.4] NavigationController vs TabBarController

- caffe简易上手指南(一)—— 运行cifar例子

- 24种设计模式--策略模式【Strategy Pattern】

- 西装定制平台Indochino获$1350万B轮融资 - 国际B2C - 亿邦动力网

- UINavigationController  和 UITabBarController

- day20<IO流>

- bzoj:3397 [Usaco2009 Feb]Surround the Islands 环岛篱笆

- ARC089E GraphXY 构造

- 关于.idea未上传导致不能显示项目文件结构的问题

- import os, glob, fnmatch--Python os/glob/fnmatch主要函数总结

- jenkins忘记管理员密码之解决方案

- SpringBoot实战一:发送邮件

- Linux故障:linux中使用ifconfig命令查看网卡信息时显示为eth1,但是在network-scripts中只有ifcfg-eth0的配置文件,并且里面的NAME="eth0"。

- FZU2150 :Fire Game (双起点BFS)

- 7-性能测试i报告