[OpenCV实战]2 人脸识别算法对比

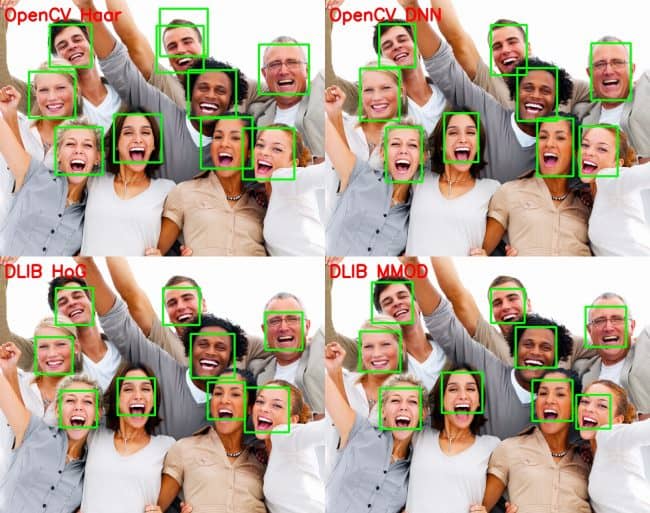

在本教程中,我们将讨论各种人脸检测方法,并对各种方法进行比较。下面是主要的人脸检测方法:

1 OpenCV中的Haar Cascade人脸分类器;

2 OpenCV中的深度学习人脸分类器;

3 Dlib中的hog人脸分类器;

4 Dlib中的深度学习人脸分类器。

Dlib是一个C++工具包(也有python版本),代码地址: http://dlib.net/

本文不涉及任何原理,只讲具体的应用。所有代码模型见:

https://download.csdn.net/download/luohenyj/10997489

https://github.com/luohenyueji/OpenCV-Practical-Exercise

如果没有积分(系统自动设定资源分数)看看参考链接。我搬运过来的,大修改没有。pch是预编译文件。Opencv版本3.4.3以上。

1 OpenCV中的Haar Cascade人脸分类器

基于Haar Cascade的人脸检测器自2001年提出以来,一直是人脸检测领域的研究热点。这种模型和其变种在这里找到:

https://github.com/opencv/opencv/tree/master/data/haarcascades

这种方法优点在CPU上几乎是实时工作的,方法简单可以在不同的尺度上检测人脸。实际就是一个级联分类器,参数可以调整,网上有相关资料。但是不管怎么调整误报率很高,而且人脸框选结果不是那么准确。

代码

C++:

#include "pch.h"

#include "face_detection.h"

/**

* @brief 人脸检测haar级联

*

* @param frame 原图

* @param faceCascadePath 模型文件

* @return Mat

*/

Mat detectFaceHaar(Mat frame, string faceCascadePath)

{

//图像缩放

auto inHeight = 300;

auto inWidth = 0;

if (!inWidth)

{

inWidth = (int)(((float)frame.cols / (float)frame.rows) * inHeight);

}

resize(frame, frame, Size(inWidth, inHeight));

//转换为灰度图

Mat frameGray = frame.clone();

//cvtColor(frame, frameGray, CV_BGR2GRAY);

//级联分类器

CascadeClassifier faceCascade;

faceCascade.load(faceCascadePath);

std::vector<Rect> faces;

faceCascade.detectMultiScale(frameGray, faces);

for (size_t i = 0; i < faces.size(); i++)

{

int x1 = faces[i].x;

int y1 = faces[i].y;

int x2 = faces[i].x + faces[i].width;

int y2 = faces[i].y + faces[i].height;

Rect face_rect(Point2i(x1, y1), Point2i(x2, y2));

rectangle(frameGray, face_rect, cv::Scalar(0, 255, 0), 2, 4);

}

return frameGray;

}

python:

from __future__ import division

import cv2

import time

import sys

def detectFaceOpenCVHaar(faceCascade, frame, inHeight=300, inWidth=0):

frameOpenCVHaar = frame.copy()

frameHeight = frameOpenCVHaar.shape[0]

frameWidth = frameOpenCVHaar.shape[1]

if not inWidth:

inWidth = int((frameWidth / frameHeight) * inHeight)

scaleHeight = frameHeight / inHeight

scaleWidth = frameWidth / inWidth

frameOpenCVHaarSmall = cv2.resize(frameOpenCVHaar, (inWidth, inHeight))

frameGray = cv2.cvtColor(frameOpenCVHaarSmall, cv2.COLOR_BGR2GRAY)

faces = faceCascade.detectMultiScale(frameGray)

bboxes = []

for (x, y, w, h) in faces:

x1 = x

y1 = y

x2 = x + w

y2 = y + h

cvRect = [int(x1 * scaleWidth), int(y1 * scaleHeight),

int(x2 * scaleWidth), int(y2 * scaleHeight)]

bboxes.append(cvRect)

cv2.rectangle(frameOpenCVHaar, (cvRect[0], cvRect[1]), (cvRect[2], cvRect[3]), (0, 255, 0),

int(round(frameHeight / 150)), 4)

return frameOpenCVHaar, bboxes

if __name__ == "__main__" :

source = 0

if len(sys.argv) > 1:

source = sys.argv[1]

faceCascade = cv2.CascadeClassifier('./haarcascade_frontalface_default.xml')

cap = cv2.VideoCapture(source)

hasFrame, frame = cap.read()

vid_writer = cv2.VideoWriter('output-haar-{}.avi'.format(str(source).split(".")[0]),cv2.VideoWriter_fourcc('M','J','P','G'), 15, (frame.shape[1],frame.shape[0]))

frame_count = 0

tt_opencvHaar = 0

while(1):

hasFrame, frame = cap.read()

if not hasFrame:

break

frame_count += 1

t = time.time()

outOpencvHaar, bboxes = detectFaceOpenCVHaar(faceCascade, frame)

tt_opencvHaar += time.time() - t

fpsOpencvHaar = frame_count / tt_opencvHaar

label = "OpenCV Haar ; FPS : {:.2f}".format(fpsOpencvHaar)

cv2.putText(outOpencvHaar, label, (10, 50), cv2.FONT_HERSHEY_SIMPLEX, 1.4, (0, 0, 255), 3, cv2.LINE_AA)

cv2.imshow("Face Detection Comparison", outOpencvHaar)

vid_writer.write(outOpencvHaar)

if frame_count == 1:

tt_opencvHaar = 0

k = cv2.waitKey(10)

if k == 27:

break

cv2.destroyAllWindows()

vid_writer.release()

2 OpenCV中的深度学习人脸分类器

OpenCV3.3以上版本就有该分类器的模型。模型来自论文: https://arxiv.org/abs/1512.02325

但是提供了两种不同的模型。一种是16位浮点数的caffe人脸模型(5.4MB),另外一种是8bit量化后的tensorflow人脸模型(2.7MB)。量化是指比如可以用0~255表示原来32个bit所表示的精度,通过牺牲精度来降低每一个权值所需要占用的空间。通常情况深度学习模型会有冗余计算量,冗余性决定了参数个数。因此合理的量化网络也可保证精度的情况下减小模型的存储体积,不会对网络的精度造成影响。具体可以看看深度学习fine-

tuning的论文。通常这种操作可以稍微降低精度,提高速度,大大减少模型体积。

这种方法速度慢了点,但是精度不错。对于调用模型代码写的很清楚。但是tensorflow模型有点小问题,可能只能在opencv3.4.3以上版本通过readNet函数调用。

代码

C++:

#include "pch.h"

#include "face_detection.h"

//检测图像宽高

const size_t inWidth = 300;

const size_t inHeight = 300;

//缩放比例

const double inScaleFactor = 1.0;

//阈值

const double confidenceThreshold = 0.7;

//均值

const cv::Scalar meanVal(104.0, 177.0, 123.0);

/**

* @brief 人脸检测Opencv ssd

*

* @param frame 原图

* @param configFile 模型结构定义文件

* @param weightFile 模型文件

* @return Mat

*/

Mat detectFaceOpenCVDNN(Mat frame, string configFile, string weightFile)

{

Mat frameOpenCVDNN = frame.clone();

Net net;

Mat inputBlob;

int frameHeight = frameOpenCVDNN.rows;

int frameWidth = frameOpenCVDNN.cols;

//获取文件后缀

string suffixStr = configFile.substr(configFile.find_last_of('.') + 1);

//判断是caffe模型还是tensorflow模型

if (suffixStr == "prototxt")

{

net = dnn::readNetFromCaffe(configFile, weightFile);

inputBlob = cv::dnn::blobFromImage(frameOpenCVDNN, inScaleFactor, cv::Size(inWidth, inHeight), meanVal, false, false);

}

else

{

//bug

//net = dnn::readNetFromTensorflow(configFile, weightFile);

net = dnn::readNet(configFile, weightFile);

inputBlob = cv::dnn::blobFromImage(frameOpenCVDNN, inScaleFactor, cv::Size(inWidth, inHeight), meanVal, true, false);

}

//读图检测

net.setInput(inputBlob, "data");

cv::Mat detection = net.forward("detection_out");

cv::Mat detectionMat(detection.size[2], detection.size[3], CV_32F, detection.ptr<float>());

for (int i = 0; i < detectionMat.rows; i++)

{

//分类精度

float confidence = detectionMat.at<float>(i, 2);

if (confidence > confidenceThreshold)

{

//左上角坐标

int x1 = static_cast<int>(detectionMat.at<float>(i, 3) * frameWidth);

int y1 = static_cast<int>(detectionMat.at<float>(i, 4) * frameHeight);

//右下角坐标

int x2 = static_cast<int>(detectionMat.at<float>(i, 5) * frameWidth);

int y2 = static_cast<int>(detectionMat.at<float>(i, 6) * frameHeight);

//画框

cv::rectangle(frameOpenCVDNN, cv::Point(x1, y1), cv::Point(x2, y2), cv::Scalar(0, 255, 0), 2, 4);

}

}

return frameOpenCVDNN;

}

python

from __future__ import division

import cv2

import time

import sys

def detectFaceOpenCVDnn(net, frame):

frameOpencvDnn = frame.copy()

frameHeight = frameOpencvDnn.shape[0]

frameWidth = frameOpencvDnn.shape[1]

blob = cv2.dnn.blobFromImage(frameOpencvDnn, 1.0, (300, 300), [104, 117, 123], False, False)

net.setInput(blob)

detections = net.forward()

bboxes = []

for i in range(detections.shape[2]):

confidence = detections[0, 0, i, 2]

if confidence > conf_threshold:

x1 = int(detections[0, 0, i, 3] * frameWidth)

y1 = int(detections[0, 0, i, 4] * frameHeight)

x2 = int(detections[0, 0, i, 5] * frameWidth)

y2 = int(detections[0, 0, i, 6] * frameHeight)

bboxes.append([x1, y1, x2, y2])

cv2.rectangle(frameOpencvDnn, (x1, y1), (x2, y2), (0, 255, 0), int(round(frameHeight/150)), 8)

return frameOpencvDnn, bboxes

if __name__ == "__main__" :

# OpenCV DNN supports 2 networks.

# 1. FP16 version of the original caffe implementation ( 5.4 MB )

# 2. 8 bit Quantized version using Tensorflow ( 2.7 MB )

DNN = "TF"

if DNN == "CAFFE":

modelFile = "models/res10_300x300_ssd_iter_140000_fp16.caffemodel"

configFile = "models/deploy.prototxt"

net = cv2.dnn.readNetFromCaffe(configFile, modelFile)

else:

modelFile = "models/opencv_face_detector_uint8.pb"

configFile = "models/opencv_face_detector.pbtxt"

net = cv2.dnn.readNetFromTensorflow(modelFile, configFile)

conf_threshold = 0.7

source = 0

if len(sys.argv) > 1:

source = sys.argv[1]

cap = cv2.VideoCapture(source)

hasFrame, frame = cap.read()

vid_writer = cv2.VideoWriter('output-dnn-{}.avi'.format(str(source).split(".")[0]),cv2.VideoWriter_fourcc('M','J','P','G'), 15, (frame.shape[1],frame.shape[0]))

frame_count = 0

tt_opencvDnn = 0

while(1):

hasFrame, frame = cap.read()

if not hasFrame:

break

frame_count += 1

t = time.time()

outOpencvDnn, bboxes = detectFaceOpenCVDnn(net,frame)

tt_opencvDnn += time.time() - t

fpsOpencvDnn = frame_count / tt_opencvDnn

label = "OpenCV DNN ; FPS : {:.2f}".format(fpsOpencvDnn)

cv2.putText(outOpencvDnn, label, (10,50), cv2.FONT_HERSHEY_SIMPLEX, 1.4, (0, 0, 255), 3, cv2.LINE_AA)

cv2.imshow("Face Detection Comparison", outOpencvDnn)

vid_writer.write(outOpencvDnn)

if frame_count == 1:

tt_opencvDnn = 0

k = cv2.waitKey(10)

if k == 27:

break

cv2.destroyAllWindows()

vid_writer.release()

3 Dlib中的hog人脸分类器和Dlib中的hog人脸分类器

Dlib就没有运行了,因为要编译嫌麻烦。而且opencv自带的已经足够了。Dlib里面人脸分类器调用和opencv一样。

Dlib所用的人脸数据见:

Hog(2825张图像):

http://dlib.net/files/data/dlib_face_detector_training_data.tar.gz

dnn(7220张图像):

http://dlib.net/files/data/dlib_face_detection_dataset-2016-09-30.tar.gz

4 方法比较

OpencvDNN综合来说是最好的方法。不过要opencv3.43以上,对尺寸要求不高,速度精度都不错。如果追求高精度用caffe模型就行了,opencv3.4.1以上就可以了。OpenCV

DNN低版本对tensorflow模型支持不好。

Dlib Hog在CPU下,检测速度最快但是小图像(人脸像素70以下)是无效的。因此第二个推荐是Hog。

Dlib DNN在GPU下,应该是最好的选择,精度都是最高的,但是有点慢。

Haar Cascade不推荐太古老了,而且错误率很高。

参考

https://www.learnopencv.com/face-detection-opencv-dlib-and-deep-learning-c-python/

最新文章

- stackoverfow访问 ajax.googleapis.com

- Could not open Hibernate Session for transaction;

- 使用FFmpeg解码H264-2016.01.14

- 流式计算之Storm简介

- ImageSource使用心得(转)

- 构建高效安全的Nginx Web服务器

- arcgis10 安装1721错误

- 类似与fiddler的抓包工具 burp suite free edition

- group by、order by 先后顺序问题

- uva 12300 - Smallest Regular Polygon

- shiro基础学习(二)—shiro认证

- php 数据连接 基础

- Linux系统下virtuoso数据库安装与使用

- flutter常规错误

- Visual Studio 向工程中添加文件夹

- 七牛免费SSL证书申请全流程

- Spring Boot + Jpa + Thymeleaf 增删改查示例

- spring部分注解

- Mybatis多个in查询

- python读取剪贴板报错 pywintypes.error: (1418, 'GetClipboardData', '\xcf\xdf\xb3\xcc\xc3\xbb\xd3\xd0\xb4\xf2\xbf\xaa\xb5\x