Deploying Harbor on Kubernetes and storing image data in MinIO object storage

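Preface: this document covers an on-premises setup in which Harbor uses MinIO for image storage. If your reaction is "why not just use OSS?", or you only need a single-node Harbor, you can stop reading, since this document will be of little use to you. If you want to connect a single-node Harbor to a MinIO cluster, see my other blog post.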

Environment:

Application versions:

helm v3.2.3

kubernetes 1.14.3

nginx-ingress 1.39.1

harbor 2.0

nginx 1.15.3

MinIO RELEASE.2020-05-08T02-40-49Z

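### Setting up the Kubernetes cluster itself is not covered here. To keep things simple, we use NFS as the shared storage for Kubernetes, so we start with how to use NFS for persistent storage in k8s.

### Pay attention to which host each command runs on: the nfs-server commands were run on the server ending in .94 (10.0.0.94); most of the others were run on master1

## I. nfs-client-provisioner

### 1. Install the NFS service on nfs-server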

yum -y install nfs-utils rpcbind

mkdir -p /nfs/data

chmod 777 /nfs/data

echo '/nfs/data *(rw,no_root_squash,sync)' > /etc/exports

exportfs -r

systemctl restart rpcbind && systemctl enable rpcbind

systemctl restart nfs && systemctl enable nfs

rpcinfo -p localhost

showmount -e 10.0.0.94
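The `showmount` check can also be scripted. The following is a minimal sketch, not part of the original procedure: `has_export` is a hypothetical helper that reads `showmount -e` output on stdin and succeeds only if the given path appears in the export list. The sample text is illustrative.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the export list on stdin contains the path.
# The first line of `showmount -e` output is a header, so it is skipped.
has_export() {
    awk -v path="$1" 'NR > 1 && $1 == path { found = 1 } END { exit !found }'
}

# Sample text shaped like `showmount -e 10.0.0.94` output (illustrative only).
sample='Export list for 10.0.0.94:
/nfs/data *'

if printf '%s\n' "$sample" | has_export /nfs/data; then
    echo "export present"
else
    echo "export missing"
fi
```

On a real host the helper would be fed live output, for example `showmount -e 10.0.0.94 | has_export /nfs/data`.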

### 2. Install the NFS client on the other servers

yum install -y nfs-utils

### 3. Install nfs-client-provisioner on k8s-master1 to get dynamic persistent storage. nfs-client-provisioner is a simple external NFS provisioner for Kubernetes; it does not provide NFS itself

cd /usr/local/src && mkdir nfs-client-provisioner && cd nfs-client-provisioner

### Note: in deployment.yaml, the IP must be that of nfs-server, and the path must match the exported path in /etc/exports on nfs-server

cat > deployment.yaml << EOF
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
---
kind: Deployment
apiVersion: apps/v1
metadata:
  name: nfs-client-provisioner
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nfs-client-provisioner
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccount: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: quay-mirror.qiniu.com/external_storage/nfs-client-provisioner:latest
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: fuseim.pri/ifs
            - name: NFS_SERVER
              value: 10.0.0.94
            - name: NFS_PATH
              value: /nfs/data
      volumes:
        - name: nfs-client-root
          nfs:
            server: 10.0.0.94
            path: /nfs/data
EOF

cat > rbac.yaml << EOF
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: default
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
EOF

cat > StorageClass.yaml << EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: fuseim.pri/ifs
parameters:
  archiveOnDelete: "false"
EOF

kubectl apply -f deployment.yaml

kubectl apply -f rbac.yaml

kubectl apply -f StorageClass.yaml

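### Wait a moment, then check that nfs-client-provisioner is healthy. Output like the following means it is; if not, re-check the steps above. Adjust -n to the namespace the provisioner was actually deployed into

kubectl get pods -n kube-system | grep nfs

nfs-client-provisioner-7778496f89-kthnj / Running 169m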
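A running pod does not yet prove that dynamic provisioning works. One way to check is to request a small volume from the `managed-nfs-storage` class. The sketch below only writes the manifest; the claim name `test-claim` and the `1Mi` size are arbitrary values chosen for illustration, and the `kubectl` steps are left as comments because they need cluster access.

```shell
#!/bin/sh
# Write a small PVC manifest that requests storage from the
# managed-nfs-storage class. "test-claim" and 1Mi are arbitrary
# values chosen for this sketch.
manifest="${TMPDIR:-/tmp}/test-claim.yaml"
cat > "$manifest" <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-claim
spec:
  storageClassName: managed-nfs-storage
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
EOF
echo "wrote $manifest"

# On a machine with cluster access, apply it and look at the claim:
#   kubectl apply -f "$manifest"
#   kubectl get pvc test-claim      # STATUS should become Bound
#   kubectl delete -f "$manifest"   # clean up afterwards
```

If the claim stays Pending, the provisioner logs and the NFS export settings are the first places to look.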

## II. Install Helm 3

cd /usr/local/src &&\

wget https://get.helm.sh/helm-v3.2.3-linux-amd64.tar.gz &&\

tar xf helm-v3.2.3-linux-amd64.tar.gz &&\

cp linux-amd64/helm /usr/bin/ &&\

helm version

## III. Install nginx-ingress (the ingress controller)

helm repo add stable http://mirror.azure.cn/kubernetes/charts

helm pull stable/nginx-ingress &&\

docker pull fungitive/defaultbackend-amd64 &&\

docker tag fungitive/defaultbackend-amd64 k8s.gcr.io/defaultbackend-amd64:1.5 &&\

helm template guoys nginx-ingress-*.tgz | kubectl apply -f -

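## IV. Install MinIO

### 1. Install MinIO Server on the four prepared servers. The official recommendation is at least four servers, with dedicated disk space for the MinIO data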

cd /usr/local/src &&\

wget https://dl.min.io/server/minio/release/linux-amd64/minio &&\

chmod +x minio && cp minio /usr/bin

cat > /etc/systemd/system/minio.service <<'EOF'
[Unit]
Description=Minio
Documentation=https://docs.minio.io
Wants=network-online.target
After=network-online.target
AssertFileIsExecutable=/usr/bin/minio

[Service]
EnvironmentFile=-/etc/minio/minio.conf
ExecStart=/usr/bin/minio server $ENDPOINTS

# Let systemd restart this service always
Restart=always

# Specifies the maximum file descriptor number that can be opened by this process
LimitNOFILE=65536

# Disable timeout logic and wait until process is stopped
TimeoutStopSec=infinity
SendSIGKILL=no

[Install]
WantedBy=multi-user.target
EOF

### The IP addresses here must match your own machines (or use domain names); the path suffix is the MinIO storage path

mkdir -p /etc/minio

cat > /etc/minio/minio.conf <<EOF
MINIO_ACCESS_KEY=guoxy
MINIO_SECRET_KEY=guoxy321export
ENDPOINTS="http://10.0.0.91/minio http://10.0.0.92/minio http://10.0.0.93/minio http://10.0.0.94/minio"
EOF

systemctl daemon-reload && systemctl start minio && systemctl enable minio
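Once the service is started on all four nodes, each one can be probed through MinIO's liveness endpoint, `/minio/health/live`, on the default port 9000. This is a rough sketch rather than part of the original steps: `minio_health_url` and the `CHECK_MINIO` switch are hypothetical names introduced here, and the real HTTP request only runs when `CHECK_MINIO=1` is set.

```shell
#!/bin/sh
# Hypothetical helper: build the liveness URL for one MinIO node.
# Port 9000 is MinIO's default listen port.
minio_health_url() {
    printf 'http://%s:9000/minio/health/live\n' "$1"
}

# Node addresses taken from the ENDPOINTS line in minio.conf.
for node in 10.0.0.91 10.0.0.92 10.0.0.93 10.0.0.94; do
    url=$(minio_health_url "$node")
    # CHECK_MINIO=1 enables the real request; otherwise only print the URL.
    if [ "${CHECK_MINIO:-0}" = "1" ]; then
        code=$(curl -s -o /dev/null -m 5 -w '%{http_code}' "$url")
        echo "$url -> HTTP $code"
    else
        echo "$url"
    fi
done
```

A healthy node answers with HTTP 200; anything else is a reason to check `systemctl status minio` on that host.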

### 2. Install the mc command on k8s-master1 and create the bucket harbor

cd /usr/local/src && \

wget https://dl.min.io/client/mc/release/linux-amd64/mc && \

chmod +x mc && cp mc /usr/bin/ && \

mc config host add minio http://10.0.0.91:9000 guoxy guoxy321export && \

mc mb minio/harbor

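## V. Install Harbor in k8s

### 1. First create the secret in k8s that holds the TLS certificate Harbor will use; if you do not have a certificate, you can request one from Let's Encrypt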

kubectl create secret tls guofire.xyz --key privkey.pem --cert fullchain.pem

### 2. Clone harbor-helm

cd /usr/local/src && \

git clone -b 1.4.0 https://github.com/goharbor/harbor-helm

### 3. Edit harbor-helm/values.yaml. The file is long, so only the parts that need changing are shown

vim harbor-helm/values.yaml

### secretName is the name of the secret just created; core is the domain name used to access Harbor

expose:
  tls:
    secretName: "guofire.xyz"
  ingress:
    hosts:
      core: harbor.guofire.xyz
      notary: notary.guofire.xyz

externalURL: https://harbor.guofire.xyz

### NFS persistent storage settings follow

persistence:
  persistentVolumeClaim:
    registry:
      storageClass: "managed-nfs-storage"
      subPath: "registry"
    chartmuseum:
      storageClass: "managed-nfs-storage"
      subPath: "chartmuseum"
    jobservice:
      storageClass: "managed-nfs-storage"
      subPath: "jobservice"
    database:
      storageClass: "managed-nfs-storage"
      subPath: "database"
    redis:
      storageClass: "managed-nfs-storage"
      subPath: "redis"
    trivy:
      storageClass: "managed-nfs-storage"
      subPath: "trivy"

### The part below matters most. regionendpoint can be the address and port of an nginx proxy in front of MinIO; here I simply point it at one of the MinIO servers. accesskey and secretkey must be the same values as in /etc/minio/minio.conf

persistence:
  imageChartStorage:
    disableredirect: true
    type: s3
    filesystem:
      rootdirectory: /storage
      #maxthreads:
    s3:
      region: us-west-1
      bucket: harbor
      accesskey: guoxy
      secretkey: guoxy321export
      regionendpoint: http://10.0.0.92:9000
      encrypt: false
      secure: false
      v4auth: true
      chunksize: ""
      rootdirectory: /
    redirect:
      disabled: false
    maintenance:
      uploadpurging:
        enabled: false
    delete:
      enabled: true
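The S3 credentials given to Harbor have to be the same ones MinIO was started with, otherwise the registry cannot write to the bucket. A small sketch of how the two files could be compared is shown below. `minio_access_key` and `harbor_access_key` are hypothetical helpers, and the demonstration runs on throwaway files with sample content because the real files live on different hosts.

```shell
#!/bin/sh
# Hypothetical helpers: pull the access key out of each file.
minio_access_key() {   # KEY=VALUE line in minio.conf
    sed -n 's/^MINIO_ACCESS_KEY=//p' "$1"
}
harbor_access_key() {  # "accesskey: value" line in values.yaml
    sed -n 's/^[[:space:]]*accesskey:[[:space:]]*//p' "$1"
}

# Demonstration on throwaway copies; point the functions at the real
# /etc/minio/minio.conf and harbor-helm/values.yaml in practice.
tmp=$(mktemp -d)
printf 'MINIO_ACCESS_KEY=guoxy\n' > "$tmp/minio.conf"
printf '    s3:\n      accesskey: guoxy\n' > "$tmp/values.yaml"

if [ "$(minio_access_key "$tmp/minio.conf")" = "$(harbor_access_key "$tmp/values.yaml")" ]; then
    echo "access keys match"
else
    echo "access keys differ"
fi
```

The same pattern applies to the secret key. Commented-out lines such as `#accesskey:` are ignored because the pattern only allows whitespace before the key name.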

### 4. Install Harbor into k8s with helm

helm install harbor harbor-helm/

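### 5. Finally, wait three to five minutes and check that the Harbor components are healthy

kubectl get deployments

### Output similar to the following generally means Harbor has started normally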

NAME READY UP-TO-DATE AVAILABLE AGE

harbor-harbor-chartmuseum / 13h

harbor-harbor-clair / 13h

harbor-harbor-core / 13h

harbor-harbor-jobservice / 13h

harbor-harbor-notary-server / 13h

harbor-harbor-notary-signer / 13h

harbor-harbor-portal / 13h

harbor-harbor-registry / 13h

zy-nginx-ingress-controller / 32h

zy-nginx-ingress-default-backend / 32h

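## VI. Install nginx layer-4 forwarding; without it Harbor cannot be reached through nginx-ingress

### 1. The nginx-ingress service defaults to type LoadBalancer, which does not work in an on-premises environment, so we change it to NodePort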

kubectl edit svc guoys-nginx-ingress-controller

### Change the value of .spec.type to NodePort and save

### 2. Look up the NodePort ports of nginx-ingress-controller and note the ones mapped to 80 and 443

kubectl get svc | grep 'ingress-controller'

guoys-nginx-ingress-controller NodePort 10.200.248.214 <none> 80:32492/TCP,443:30071/TCP 32h
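The PORT(S) column of `kubectl get svc` lists each mapping as `port:nodePort/protocol`. To avoid copying the numbers by hand, the column can be parsed. This is only a sketch: `nodeport_for` is a hypothetical helper, and the sample string assumes that 32492 serves port 80 and 30071 serves port 443.

```shell
#!/bin/sh
# Hypothetical helper: given a service port and the PORT(S) column of
# `kubectl get svc`, print the NodePort mapped to that service port.
nodeport_for() {
    printf '%s\n' "$2" | tr ',' '\n' | awk -F'[:/]' -v p="$1" '$1 == p { print $2 }'
}

# Sample PORT(S) value in the usual port:nodePort/protocol form
# (the 80/443 assignment is an assumption of this sketch).
ports='80:32492/TCP,443:30071/TCP'
echo "http  -> $(nodeport_for 80 "$ports")"
echo "https -> $(nodeport_for 443 "$ports")"
```

The two numbers printed are the ones that belong in the upstream blocks of the layer-4 proxy.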

### 3. Install the nginx layer-4 proxy

yum install -y gcc make

mkdir /apps

cd /usr/local/src/

wget http://nginx.org/download/nginx-1.15.3.tar.gz

tar xf nginx-1.15.3.tar.gz

cd nginx-1.15.3

./configure --with-stream --without-http --prefix=/apps/nginx --without-http_uwsgi_module --without-http_scgi_module --without-http_fastcgi_module

make && make install

### The ports in the upstream blocks below must match the NodePort values from step 2 above

cat > /apps/nginx/conf/nginx.conf <<'EOF'
worker_processes 1;  # assumed value, adjust as needed

events {
    worker_connections 1024;  # assumed value, adjust as needed
}

stream {
    log_format tcp '$remote_addr [$time_local] '
                   '$protocol $status $bytes_sent $bytes_received '
                   '$session_time "$upstream_addr" '
                   '"$upstream_bytes_sent" "$upstream_bytes_received" "$upstream_connect_time"';

    upstream https_default_backend {
        server 10.0.0.91:30071;
        server 10.0.0.92:30071;
        server 10.0.0.93:30071;
    }

    upstream http_backend {
        server 10.0.0.91:32492;
        server 10.0.0.92:32492;
        server 10.0.0.93:32492;
    }

    server {
        listen 443;
        proxy_pass https_default_backend;
        access_log logs/access.log tcp;
        error_log logs/error.log;
    }

    server {
        listen 80;
        proxy_pass http_backend;
    }
}
EOF
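Because the upstream ports have to follow whatever NodePorts the cluster assigned, it can be less error-prone to generate the upstream blocks than to type them. The following is a minimal sketch: `stream_upstream` is a hypothetical helper, 32492 and 30071 are the NodePort values from the service output above, and which of them serves 80 and which 443 is assumed here.

```shell
#!/bin/sh
# Hypothetical helper: print an nginx stream upstream block.
# $1 = upstream name, $2 = NodePort, remaining args = node IPs.
stream_upstream() {
    name=$1; port=$2; shift 2
    printf 'upstream %s {\n' "$name"
    for ip in "$@"; do
        printf '    server %s:%s;\n' "$ip" "$port"
    done
    printf '}\n'
}

# Assumed mapping: 80 -> 32492, 443 -> 30071; substitute your own values.
stream_upstream http_backend 32492 10.0.0.91 10.0.0.92 10.0.0.93
stream_upstream https_default_backend 30071 10.0.0.91 10.0.0.92 10.0.0.93
```

The printed blocks can be pasted into the `stream { }` section of nginx.conf.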

### Test the configuration and start nginx

/apps/nginx/sbin/nginx -t

/apps/nginx/sbin/nginx

echo '/apps/nginx/sbin/nginx' >> /etc/rc.local

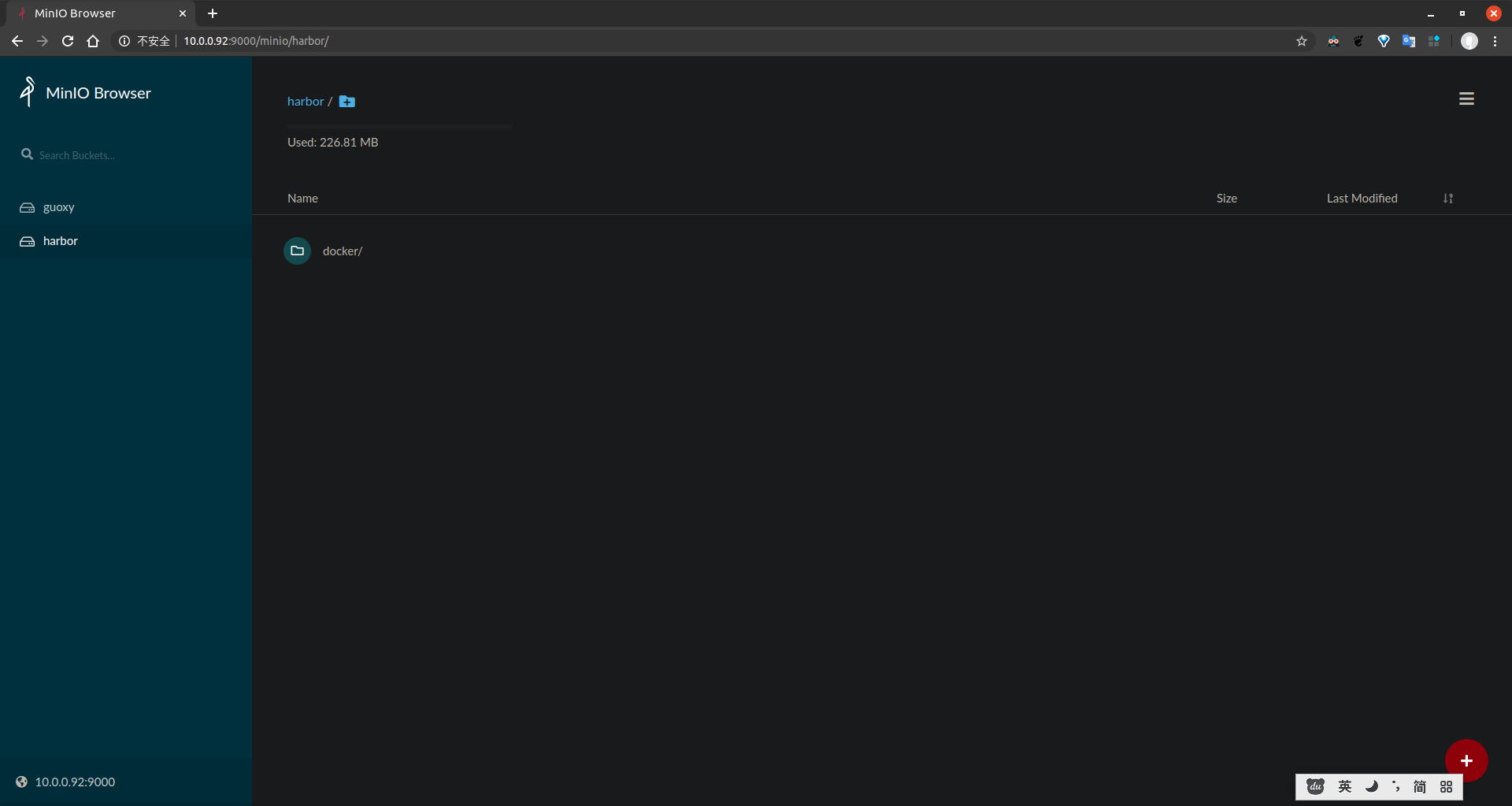

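## VII. Finally, test by pushing an image to Harbor. After a successful push, check whether the harbor bucket in MinIO contains a docker directory; if it does, the setup works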
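A possible shape for this final test is sketched below. `harbor_ref` is a hypothetical helper that assembles the image reference; `library` is Harbor's default project, while `busybox:latest` is just an arbitrary small image picked for illustration. The Docker and mc commands are left as comments because they need a Docker daemon and the `minio` alias configured earlier.

```shell
#!/bin/sh
# Hypothetical helper: build a full image reference for the Harbor registry.
harbor_ref() {   # $1 = registry host, $2 = project, $3 = image, $4 = tag
    printf '%s/%s/%s:%s\n' "$1" "$2" "$3" "$4"
}

ref=$(harbor_ref harbor.guofire.xyz library busybox latest)
echo "$ref"

# With Docker and mc available, the test would look like this:
#   docker login harbor.guofire.xyz
#   docker pull busybox:latest
#   docker tag busybox:latest "$ref"
#   docker push "$ref"
#   mc ls minio/harbor/docker/    # registry data should now be listed here
```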

